Every engineering organization has a pipeline that nobody puts on an org chart. No budget line. No Jira project. No mention in quarterly planning. But it is arguably the most important process in the company: the pipeline that turns junior engineers into senior ones.

That pipeline is breaking. Not because junior engineers are less talented or because computer science programs have gotten worse. It is breaking because the tool we gave junior engineers to make them more productive is simultaneously making them less capable of learning the things that would make them genuinely good.

The tool is AI.

The employment numbers are already moving

Before getting to the skill formation problem, look at the hiring data. It is worse than most people realize.

A Stanford Digital Economy Lab study, led by Erik Brynjolfsson and published in August 2025, analyzed high-frequency payroll records from millions of American workers via ADP. Employment for software developers aged 22 to 25 declined nearly 20% from its peak in late 2022. More broadly, early-career workers in the most AI-exposed jobs saw a 13% relative decline in employment since the widespread adoption of generative AI tools.

This is not a broad downturn hitting all demographics equally. The same study found that employment rates for workers aged 30 and over in high AI-exposure fields grew between 6% and 12% from late 2022 to May 2025. Older, more experienced developers are doing fine. The entry-level cohort is the one shrinking.

The Stanford researchers' interpretation: AI is particularly effective at replacing textbook knowledge -- coding syntax, basic algorithms, foundational patterns taught in computer science programs. These are precisely the skills junior developers rely on when they start working. The entry-level tasks that used to justify hiring a junior developer can now be done by an AI at a fraction of the cost.

A separate Harvard study, led by Seyed M. Hosseini and Guy Lichtinger, tracked employment data across 285,000 U.S. firms and 62 million workers from 2015 to 2025. At firms with high AI adoption, junior employment declined by roughly 8% relative to non-adopters within six quarters of implementation. Senior employment remained largely unchanged.

The decline was not driven by layoffs. It was driven by non-hiring. Companies stopped backfilling junior positions when people left. They slowed the conversion of interns to full-time offers. They raised the experience bar for new hires. The junior developer role did not get eliminated -- it got quietly deprioritized.

In wholesale and retail trade sectors, the decline was even steeper: 40% fewer junior hires after AI adoption.

The skill formation problem

Fewer junior hires is a problem. But the deeper problem is what is happening to the junior developers who do get hired.

Anthropic -- the company that makes Claude -- published a randomized controlled trial in early 2026 on exactly this question. Researchers Judy Hanwen Shen and Alex Tamkin recruited 52 software engineers, mostly junior, who regularly used Python but were unfamiliar with Trio, a library for asynchronous programming. Half got access to an AI coding assistant. Half did not.

The AI group completed the task in roughly the same time as the control group but scored 17% lower on a follow-up comprehension quiz. Not on some abstract measure -- on a quiz about the specific concepts they had just used minutes before.

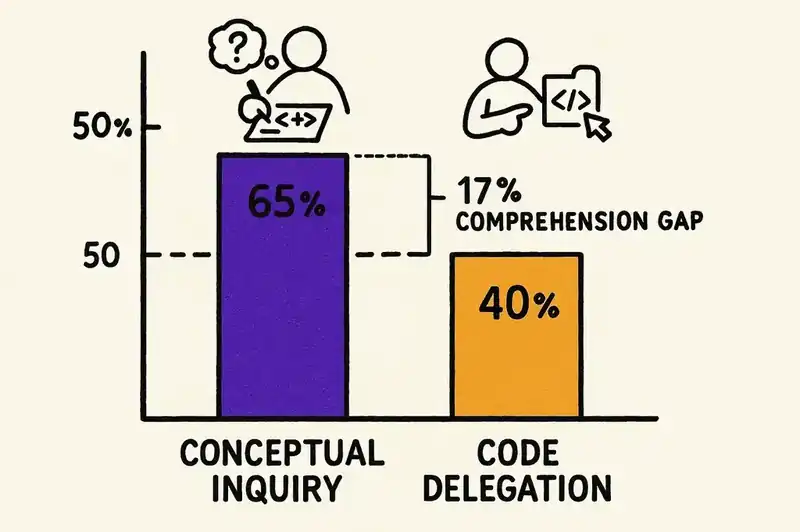

The gap was more dramatic when the researchers looked at how participants used the AI. Those who used it for conceptual inquiry -- asking it to explain concepts, then writing the code themselves -- scored 65% or higher on comprehension. Those who delegated code generation -- letting the AI write the code while they focused on integrating the output -- scored below 40%.

The gap between 65% and 40% is the difference between understanding the tool you are using and operating it by rote. One group was learning. The other was assembling.

Addy Osmani, in his March 2026 piece on comprehension debt, cited the Anthropic study directly. "The largest declines occurred in debugging," he wrote, "with smaller but still significant drops in conceptual understanding and code reading." Debugging, conceptual understanding, and code reading are not peripheral skills. They are the core competencies that distinguish a senior engineer from a junior one.

The deskilling paradox

What Anthropic measured in a lab, organizational researchers have observed in the field. It has a name: the deskilling paradox.

The concept is not new. Sociologists and organizational theorists have studied deskilling in the context of automation since the 1970s. Harry Braverman's "Labor and Monopoly Capital" (1974) documented how industrial automation reduced skilled trades to machine tending. The pattern is consistent: when a tool does the skilled part of the work, the human's role narrows to the unskilled parts -- feeding inputs, monitoring outputs, handling exceptions. Over time, the human loses the skill that the tool replaced, because they never practice it.

In software engineering, the skilled part of the work is not typing code. It is understanding systems -- holding a mental model of how components interact, reading code and knowing what it does without running it, debugging by forming hypotheses and testing them, making architectural decisions that account for trade-offs you can only understand through experience.

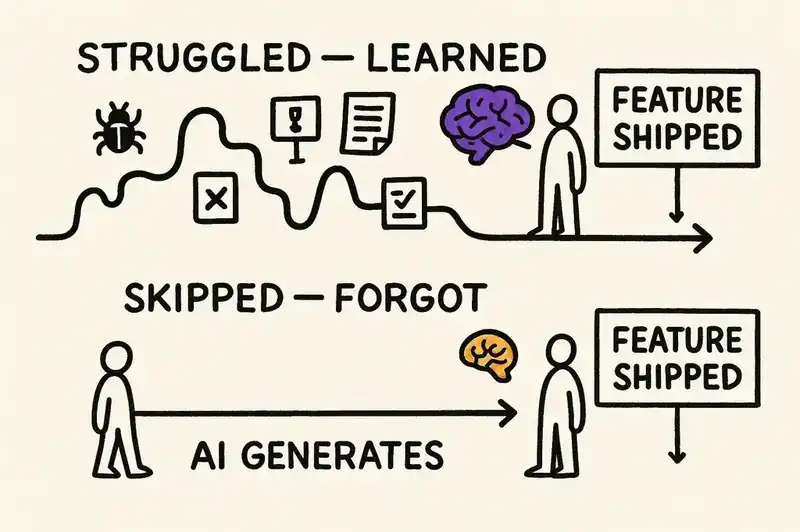

When a junior developer uses an AI to write code, they skip the part where they struggle with the problem. That struggle is not waste. It is the learning process. The bugs they would have introduced and then fixed. The wrong approaches they would have tried and abandoned. The documentation they would have read to understand why the library works the way it does. All of that is compressed into a single act: prompting the AI and accepting the output.

The output is often correct. The developer ships the feature. Sprint velocity looks good. The manager is happy. But the developer did not learn anything. They moved a ticket from "in progress" to "done" without building the mental model that would let them do similar work without the AI. Next time they face a similar problem, they will need the AI again.

This is not theoretical. Microsoft Research and Carnegie Mellon University surveyed 319 knowledge workers using generative AI in 2025 and found that workers reported tasks felt cognitively easier, but also reported ceding problem-solving to the system. They described focusing on functional tasks -- gathering and integrating responses -- rather than developing the analytical capabilities that define expertise in their domains.

The debugging gap

The skill that separates a capable engineer from a code assembler is debugging.

Debugging requires everything that AI-assisted code generation bypasses: a mental model of the system, an understanding of how components interact, the ability to form and test hypotheses, the patience to trace a problem through multiple layers of abstraction. You cannot debug a system you do not understand. And you cannot understand a system you did not build or, at the very least, study carefully.

The Anthropic study found the largest comprehension drops in debugging skills specifically. This tracks with how AI coding tools are used. When the AI writes the code and the developer integrates it, the developer has a surface-level understanding of what the code does. They know the inputs and outputs. They may understand the general approach. But they do not have the deep familiarity that comes from having written each line, having considered and rejected alternatives, having hit dead ends and backtracked.

When that code breaks in production -- and it will break, because all code breaks eventually -- the developer who assembled it from AI output is in a worse position than the developer who wrote it by hand. They lack the mental model, the intuition about where failures might originate, the understanding of why the code was written this way rather than some other way. They are starting the debugging process from scratch, in a codebase they recognize but do not understand.

Debugging skill takes years to develop under normal circumstances. AI is not just failing to accelerate that development. It is actively interfering with it.

The mentorship vacuum

The skill pipeline does not just depend on individual practice. It depends on mentorship -- the transfer of knowledge and judgment from experienced practitioners to newer ones. That is breaking down too.

As we documented in our analysis of the review bottleneck, senior engineers in AI-adopting organizations are increasingly consumed by code review. The Faros AI data shows a 91% increase in review time on high-AI-adoption teams. The Tilburg University study shows core developers reviewing 6.5% more code while their own productivity drops 19%.

When senior engineers are drowning in review, they do not have time to mentor. The code review itself is a poor substitute for mentorship, because AI-generated PRs are not the teaching moments that human-written PRs are. When a junior developer writes code, the senior reviewer can ask: "Why did you approach it this way?" The ensuing conversation is a learning opportunity. When AI writes code and the junior developer submits it, the senior reviewer is not teaching. They are auditing.

METR's 2025 randomized study of experienced open-source developers documented this dynamic. Participants spent their AI-assisted time reviewing, testing, and rejecting output rather than doing the creative and architectural work that defines their expertise. That architectural work -- the system-level thinking, the design trade-off analysis, the "here is why we do it this way" conversations -- is exactly what junior developers need exposure to in order to grow.

What learning actually requires

Educational psychologist Robert Bjork at UCLA developed the concept of "desirable difficulty": learning is most effective when it involves a certain level of struggle. Tasks that are too easy do not produce durable learning. The effort of retrieving information, working through confusion, and making mistakes is not an obstacle to learning -- it is the mechanism.

Used as code generators, AI coding assistants eliminate desirable difficulty. The struggle of figuring out how a library works, the frustration of a bug you cannot find, the tedium of reading documentation -- all bypassed when you can ask the AI and get an answer in seconds. The result is faster task completion in the short term and weaker understanding in the long term.

The Anthropic study illustrates this directly. Both groups completed the task in roughly the same time. But the AI group, spared the struggle, scored 17% lower on comprehension. Same destination, less learned along the way.

That 17% gap was measured on a single task. Repeated across hundreds of tasks over a junior developer's first two years, it is likely to compound. Each time the AI writes the code, the developer skips the struggle that would have built their understanding. The end result is a developer who can prompt AI effectively but cannot reason about systems independently.

The pipeline implications

Pull back and look at this from an organizational perspective.

Employment for developers aged 22 to 25 is down nearly 20% from its peak. In a controlled study, junior developers who leaned on AI scored 17% lower on comprehension. At firms with high AI adoption, junior employment declined roughly 8% relative to non-adopters within six quarters. Senior engineers are too busy reviewing AI-generated code to mentor the juniors who remain.

Where do your senior engineers come from in five years?

Under current conditions, they do not. Or rather, they come from a much smaller pool, having developed their skills more slowly, with less mentorship, and with a weaker foundation of system-level understanding.

This is not just a hiring problem or a training problem. The system that produces experienced engineers -- challenging work, struggle, mentorship, code review feedback, debugging practice, gradual assumption of responsibility -- is being disrupted at every stage.

The spec as a learning scaffold

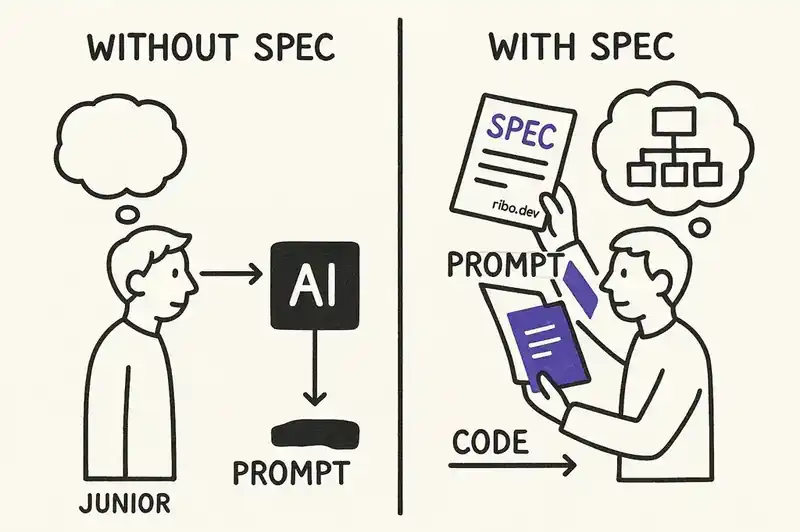

There is a way to use AI that does not destroy the learning pipeline. It requires separating what a system should do from how to implement it.

A specification defines the what: interfaces, constraints, behaviors, edge cases, architectural decisions. It is the blueprint that encodes reasoning that would otherwise live only in a senior engineer's head.

When a junior developer works from a spec, they are not reverse-engineering code to understand the system. They are reading a human-authored document that explains the design and the reasoning behind it. The spec transfers architectural knowledge -- the hardest and most valuable kind -- without requiring the senior engineer to be in the room.

The junior developer can then use AI to help with the implementation -- the how -- while the spec provides the scaffolding for understanding the why. This is closer to how the successful participants in the Anthropic study used AI: for conceptual inquiry and implementation assistance, not wholesale delegation of thinking.

The spec also provides something to verify against. When the junior developer's AI-assisted code does not match the spec, that mismatch is itself a learning opportunity. "The spec says this endpoint should return a 404 when the resource is not found, but your implementation returns a 500. Why?" That question leads to understanding. It is the kind of question that builds the debugging and systems-thinking skills that the Anthropic study found AI was eroding.
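One way to make the spec's verification role concrete is to encode its behavioral requirements as executable checks. The sketch below is a minimal Python illustration. It assumes a hypothetical `GET /users/{id}` endpoint. `SpecCase`, `SPEC`, `get_user`, `get_user_buggy`, and `check_against_spec` are illustrative names, not part of any real framework. The handlers return plain `(status, body)` tuples instead of using a real web library.

```python
from __future__ import annotations

from dataclasses import dataclass


# Hypothetical spec for a "GET /users/{id}" endpoint. Each case states the
# expected HTTP status for one situation: the "what", not the "how".
@dataclass(frozen=True)
class SpecCase:
    description: str
    user_id: str
    expected_status: int


SPEC = [
    SpecCase("existing user returns 200", "42", 200),
    SpecCase("unknown user returns 404, not 500", "999", 404),
    SpecCase("non-numeric id returns 400", "abc", 400),
]

# Stand-in data store for the sketch (assumption, not a real database).
USERS = {"42": {"id": "42", "name": "Ada"}}


def get_user(user_id: str) -> tuple[int, dict]:
    """Hypothetical handler that follows the spec; returns (status, body)."""
    if not user_id.isdigit():
        return 400, {"error": "id must be numeric"}
    user = USERS.get(user_id)
    if user is None:
        return 404, {"error": "user not found"}
    return 200, user


def get_user_buggy(user_id: str) -> tuple[int, dict]:
    """Hypothetical handler that maps every lookup failure to a 500."""
    try:
        return 200, USERS[user_id]
    except KeyError:
        return 500, {"error": "internal error"}


def check_against_spec(handler, spec: list[SpecCase]) -> list[str]:
    """Return a readable message for every case where handler and spec disagree."""
    mismatches = []
    for case in spec:
        status, _ = handler(case.user_id)
        if status != case.expected_status:
            mismatches.append(
                f"{case.description}: expected {case.expected_status}, got {status}"
            )
    return mismatches


if __name__ == "__main__":
    print(check_against_spec(get_user, SPEC))  # prints []
    for problem in check_against_spec(get_user_buggy, SPEC):
        print(problem)
```

The buggy handler fails two cases, and each failure message is a ready-made prompt for the "why?" conversation: why does a missing user become a 500, and where should that decision live?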

The urgency is real

We are in the early stages of a skill pipeline disruption that will take years to fully show up. The junior developers entering the workforce today, learning to code with AI from day one, will be the mid-level engineers of 2028 and the senior engineers of 2031. If their foundational skills are weaker -- and the early evidence suggests they are -- the effects will compound across the entire industry.

The Stanford data shows the hiring pipeline is constricting. The Anthropic data shows the learning pipeline is degrading. The Harvard data shows firms are systematically reducing their investment in early-career talent. The senior engineers who would normally compensate for these gaps are consumed by the review burden that the same AI tools created.

This is not an argument against AI. AI coding tools are powerful and they are here to stay. This is an argument for being intentional about how we integrate them, particularly for junior developers whose long-term capability depends on the learning experiences they have now.

Give them the spec. Let them understand the architecture. Let them struggle with the implementation. Use AI as a teaching aid, not a thinking replacement. Protect the mentorship time that turns junior developers into senior ones.

The alternative is an industry that can generate code at unprecedented speed but cannot produce the engineers who understand it.