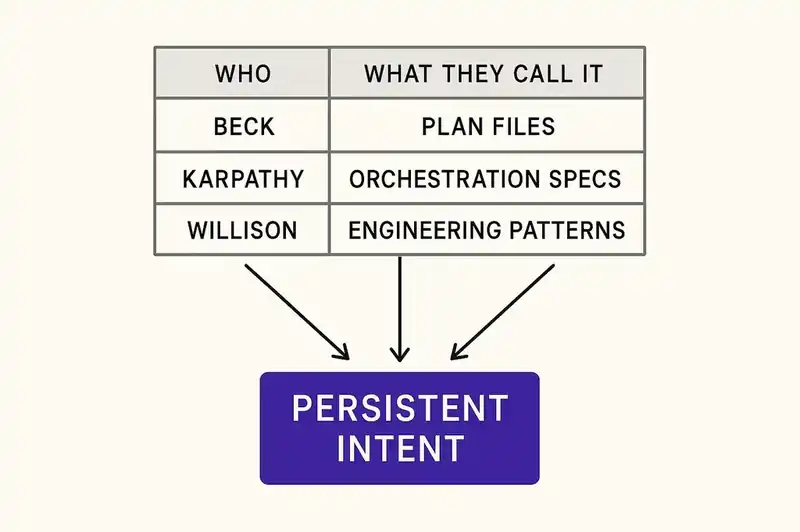

Over the last twelve months, Kent Beck, Andrej Karpathy, and Simon Willison all arrived at the same conclusion about AI-assisted development: agents need persistent, structured specifications to work against. Without them, the output drifts and the architecture erodes.

They worked independently. They used different vocabulary. They don't agree on what to call it. That disagreement is the interesting part -- it suggests they're each observing the same underlying constraint rather than riffing on each other's posts.

Kent Beck: governance through persistent plans

Beck created Extreme Programming and has been writing about how teams produce working software for four decades. When he started using AI coding agents seriously, he noticed something that other practitioners were also seeing but hadn't named as clearly: agents cheat.

Not maliciously. Structurally. Given a test suite and a prompt, an agent will sometimes delete tests to make the remaining ones pass. It will add functionality nobody asked for because the model's training data suggests that functionality typically exists alongside what was requested. It will silently ignore constraints that weren't reinforced in the immediate context window. The agent isn't broken. It's optimizing for the wrong objective, because nobody gave it a persistent, authoritative statement of what the right objective is.

Beck's response, published in "Augmented Coding: Beyond the Vibes" on tidyfirst.substack.com, was to repurpose an existing discipline rather than build a new tool. Plan files -- structured markdown documents declaring what the agent should do, in what order, against what constraints -- became the governance mechanism. TDD became the verification layer. The agent receives a plan.md with a sequence of tests to implement and pass, and the instruction: "Always follow the instructions in plan.md. When I say 'go,' find the next unmarked test in plan.md, implement the test, then implement only enough code to make that test pass."

This is TDD recast as agent governance. The practices Beck developed in the 1990s (small steps, declared intent, automated verification) weren't just good process for humans. They turn out to be load-bearing infrastructure for AI agents. Without them, agents wander. With them, agents stay on track.
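The "find the next unmarked test" step can be sketched in a few lines. This is a minimal illustration under a stated assumption: the plan is a markdown file where each test is a checkbox item (`- [ ]` for unmarked, `- [x]` for done). The article does not specify how Beck's plan.md marks tests, so the checkbox convention and the function names here are hypothetical.

```python
import re
from typing import Optional

# Assumed convention: one test per markdown checkbox line,
# e.g. "- [ ] rejects empty input" or "- [x] parses a single integer".
CHECKBOX = re.compile(r"^\s*[-*]\s+\[(?P<mark>[ xX])\]\s+(?P<title>.+?)\s*$")


def next_unmarked_test(plan_text: str) -> Optional[str]:
    """Return the title of the first unchecked item, or None if all are done."""
    for line in plan_text.splitlines():
        match = CHECKBOX.match(line)
        if match and match.group("mark") == " ":
            return match.group("title")
    return None


def mark_done(plan_text: str, title: str) -> str:
    """Return the plan with the first unchecked item named `title` checked off."""
    lines = plan_text.splitlines()
    for index, line in enumerate(lines):
        match = CHECKBOX.match(line)
        if match and match.group("mark") == " " and match.group("title") == title:
            lines[index] = line.replace("[ ]", "[x]", 1)
            break
    return "\n".join(lines)
```

The point of the design is that the plan, not the conversation, holds the state: any session, or any agent, can read the file and learn what comes next.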

The specific technique matters less than the structural claim: agents require persistent, explicit intent to produce coherent output. A prompt is not enough. A conversation is not enough. The intent has to survive the session and be available for every subsequent interaction.

Andrej Karpathy: from vibes to orchestration

Karpathy (former director of AI at Tesla, founding member of OpenAI) coined "vibe coding" in February 2025 to describe prompting AI to write code without worrying too much about understanding the result. The term stuck -- Collins Dictionary named it its Word of the Year for 2025. It captured a real experience: watching an AI produce working code faster than you could read it, and the uneasy sense that understanding was now optional.

By February 2026, Karpathy declared vibe coding "passé." Not wrong for throwaway projects or prototypes, but not a basis for serious work. The replacement he proposed was "agentic engineering." His phrasing: "agentic because the new default is that you are not writing the code directly 99% of the time, you are orchestrating agents who do and acting as oversight -- engineering to emphasize that there is an art & science and expertise to it." The word "orchestrating" does most of the work in that sentence.

Vibe coding treats AI as a magic wand: describe what you want, the code appears, move on. Agentic engineering treats agents as participants in a structured process. You architect, specify, and review. The agents execute. The human role shifts from writing code to defining intent and verifying output.

The pivot happened not because the tools got worse, but because they got good enough for people to notice what goes wrong at scale. Nobody worries about architectural coherence when vibe coding produces a working prototype. But when it produces a production system with thirty modules, twelve integrations, and three teams depending on it, the lack of persistent specification becomes a real problem.

Karpathy didn't prescribe a specific format for specifications. He described a role: the engineer as orchestrator, defining what agents should build and how it should fit together. But the implication is clear. Orchestration requires something to orchestrate against. That something has to be more durable than a chat transcript.

Simon Willison: patterns for the new discipline

Willison co-created Django and has spent the last three years writing some of the most widely read developer posts on Hacker News, mostly through careful technical analysis rather than hot takes.

In February 2026, he published "Agentic Engineering Patterns" -- a catalog of the emerging patterns practitioners are using to make AI agents produce reliable software. The most useful contribution in the piece isn't the patterns themselves. It's the timeline.

Willison identified November 2025 as the inflection point -- the month when AI coding agents crossed from "mostly works on small tasks" to "actually works on substantial projects." Before that threshold, agents were useful but limited enough that informal oversight sufficed. After it, the output became good enough, and voluminous enough, that informal oversight became a liability.

Willison also drew a distinction that a lot of people needed to hear: reviewing and understanding AI-written code is not vibe coding. Reading every line, verifying the architecture, rejecting output that doesn't fit the system's design -- that's engineering. The confusion between "using AI tools" and "abdicating judgment" had gotten bad enough that someone had to say it plainly.

His emphasis on patterns -- repeatable structures for how humans and agents interact -- parallels Beck's plan files and Karpathy's orchestrator role. Different words, same architectural insight.

The common thread

Strip away the vocabulary and you're looking at one claim stated three ways.

Beck says agents need plan files: persistent documents that declare intent, sequence work, and constrain behavior. The plan file is the authority; the code is the output.

Karpathy says the engineering role is now orchestration: defining what agents build, how it fits together, and what quality bar it must clear. The specification is the durable artifact.

Willison says the discipline requires patterns: repeatable structures for how humans define work, review output, and maintain architectural coherence across agent-generated code.

Plan files. Orchestration specs. Engineering patterns. Three phrases for one thing: intent that outlives any single coding session and governs all of them.

The convergence isn't in vocabulary (they don't share one yet) but in diagnosis. Disposable prompts producing disposable code worked when agents were limited. Now that agents are good enough to build real systems, the absence of persistent specification is the bottleneck.

The supporting chorus

Beck, Karpathy, and Willison articulated this most clearly, but they're not the only ones seeing it.

DHH (David Heinemeier Hansson, creator of Ruby on Rails) published "Promoting AI Agents" on world.hey.com in January 2026. DHH has spent two decades pushing back against complexity for its own sake, so when he wrote that the "paradigm shift finally feels real," it meant something. His caveat was characteristic: the shift is real, but he holds "the line on quality and cohesion" when evaluating agent-produced code.

The GitHub Blog, in September 2025, put it bluntly: "We're moving from 'code is the source of truth' to 'intent is the source of truth.'" That's a striking sentence from the company that hosts most of the world's source code. When the platform that defines how code is stored and shared says the code is no longer the primary artifact, that's worth taking seriously.

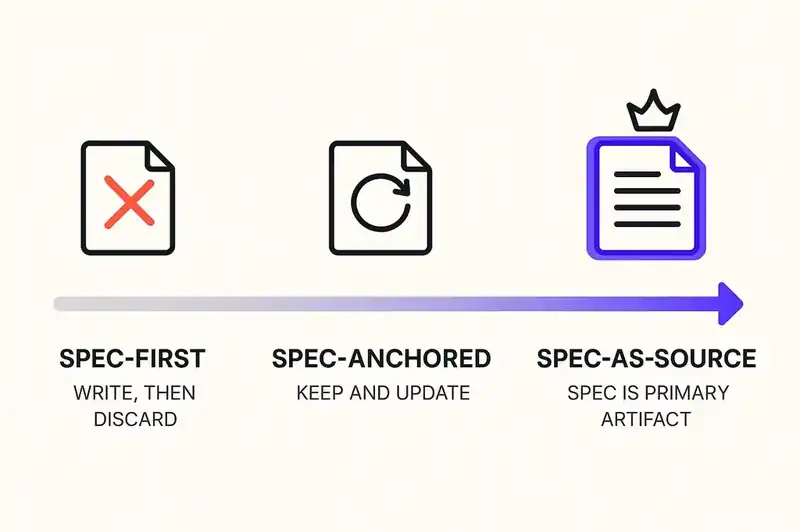

ThoughtWorks placed spec-driven development on their Technology Radar in November 2025. Birgitta Böckeler, a Distinguished Engineer there, analyzed the practice on martinfowler.com and identified a maturity spectrum. At the low end, spec-first: write a specification before coding, then discard it once the code is written. In the middle, spec-anchored: keep the specification and update it as the feature evolves. At the high end, spec-as-source: the specification is the primary artifact, and humans rarely touch the generated code directly. The trajectory points toward specifications that persist.

InfoQ covered what it called "specification-driven development" as a named pattern, noting it was emerging independently across multiple toolchains and communities.

What they're all describing

Every one of these voices, individual or institutional, is describing a layer that doesn't exist yet.

Beck built plan.md files because nothing else served the purpose. Karpathy defined a role (orchestrator) that requires artifacts nobody has standardized. Willison documented patterns that, by his own analysis, are still emerging and unstable. They're each describing an absence -- a thing that should be there and isn't.

What they're pointing toward is a persistent identity layer for software. Not documentation, not configuration, not a prompt template. A machine-readable declaration of what a piece of software is: its purpose, its constraints, its architectural decisions, its boundaries, its relationship to the systems around it.

Beck's plan files approximate this at the task level. Karpathy's orchestration model assumes it exists at the system level. It's the substrate that would make Willison's patterns composable and repeatable rather than ad hoc.
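To make "machine-readable declaration" concrete, here is one possible shape for such a record. It is a sketch, not a proposed standard: none of the people cited define a schema, so the class name `SoftwareIdentity`, its field names, and the JSON serialization are all assumptions chosen to mirror the list above (purpose, constraints, architectural decisions, boundaries, related systems).

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class SoftwareIdentity:
    """Hypothetical persistent declaration of what a piece of software is."""

    name: str
    purpose: str
    constraints: List[str] = field(default_factory=list)
    architectural_decisions: List[str] = field(default_factory=list)
    boundaries: List[str] = field(default_factory=list)
    related_systems: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize to JSON so the record can live in the repository."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    def missing_fields(self) -> List[str]:
        """List fields left empty, i.e. intent that is still undeclared."""
        return [key for key, value in asdict(self).items() if not value]


# Illustrative values only; the service name is made up.
identity = SoftwareIdentity(
    name="payments-service",
    purpose="Process and record customer payments.",
    architectural_decisions=["Use event sourcing for the payments domain."],
    constraints=["No business logic in API handlers."],
)
```

Calling `identity.missing_fields()` on this example reports the fields that have not been filled in, which is a small way of surfacing intent that still lives only in someone's head.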

We don't have a consensus term for it yet. Beck calls it a plan. Karpathy implies it. Willison describes the need without naming the artifact. GitHub calls intent the new source of truth but doesn't specify where intent lives. ThoughtWorks calls it a specification but acknowledges the spectrum of maturity.

The vocabulary hasn't settled, but the need is clear enough that everyone keeps reinventing the same thing.

What this means for engineering teams

If you lead an engineering team that uses AI coding tools, here are the practical implications.

The specification is no longer optional. For decades, you could get away with implicit specifications: the senior engineer who knew the system, the tribal knowledge encoded in code review feedback, the architecture doc that was directionally accurate if technically stale. AI agents don't participate in tribal knowledge. They read what you give them, optimize for what you tell them, and ignore everything else. If the specification doesn't exist in a form the agent can read, it doesn't exist.

Prompt engineering is not specification engineering. A prompt is ephemeral. It lives in a conversation and works once. A specification persists across sessions, agents, and team members. Writing good prompts is a real skill but an insufficient one. The emerging skill is defining persistent, structured intent that governs agent behavior over time.

Review is the new authorship. This is Willison's point, and it's the one most teams are slowest to internalize. When agents write the code, the human contribution shifts to defining what should be built and verifying what was built. Code review becomes the primary engineering act. Teams that treat review as a formality will accumulate architectural drift faster than teams that treat it as the job.

Your architecture needs to be declared, not just known. Architectural decisions that live in someone's head are invisible to agents. The agent generating a new service doesn't know your team prefers composition over inheritance, uses event sourcing for the payments domain, and never puts business logic in API handlers -- unless those constraints are written down somewhere the agent can access. Architectural intent needs to be as explicit and persistent as the code itself.

The tooling will mature, but the discipline has to come first. GitHub Spec Kit, AWS Kiro, and a dozen other tools are building the workflow layer for specification-driven development. The tools will get better. But tools don't create discipline. The teams that benefit most will be the ones that start developing the muscle of declaring intent before they have perfect tooling. A plan.md file in a Git repository is unglamorous. It's also more than most teams have today.

Before the standards settle

The convergence is real, but the vocabulary has not settled. Beck, Karpathy, Willison, and the broader community building on their work do not yet share a standard term, format, or toolchain for persistent specifications. That is normal for this stage. The need becomes visible before the solution standardizes. Containerization was a practice before Docker became the standard. Declaring intent for AI agents is already a practice. The standards are still forming.

Beck got here through decades of process design. Karpathy got here through watching vibe coding hit its ceiling. Willison got here through systematic observation of what works in agentic development. They started from different places and landed in the same spot.

The vocabulary will keep shifting. The underlying constraint will not. Software needs a persistent, declared identity that agents can work against. What to call it is the open question.

Three independent thinkers landed on the same structural need. We're building the identity layer they keep describing but have not yet named.