Your auditor is going to ask three questions. Who wrote this code? Against what specification was it written? How did you verify that the code matches the specification?

If the answer to the first question is "an AI agent," the second and third questions become significantly harder. And if you cannot answer them, you have a compliance problem that no amount of retroactive documentation will fix.

This is not hypothetical. The EU AI Act's requirements for most high-risk AI systems (those listed in its Annex III) become enforceable on August 2, 2026; high-risk systems embedded in products covered by EU product-safety legislation follow in August 2027. The Colorado AI Act takes effect on June 30, 2026. Both impose documentation, governance, and audit requirements on organizations that use AI in consequential decisions. "Consequential decisions" is broader than most engineering teams realize -- credit scoring, hiring systems, insurance underwriting, healthcare triage, and any system where algorithmic output materially affects a person's access to services, employment, or resources.

If your organization operates in fintech, healthcare, insurance, or any regulated industry, the clock is running.

What the regulations actually require

The EU AI Act

The EU AI Act classifies AI systems into risk categories. High-risk systems -- the ones subject to the strictest requirements -- include AI used in critical infrastructure, education, employment, essential services, law enforcement, and migration. If your software makes or influences decisions in any of these domains, it may well be classified as high-risk. The Act lists specific use cases within each area (Annex III), so check your system against that list rather than the domain name alone.

For high-risk systems, the Act requires:

A quality management system -- not a QA team, but a documented system covering the entire lifecycle: design, development, testing, deployment, monitoring, and decommissioning. It must include procedures for data management, risk management, and change management, and it must be auditable.

Technical documentation before deployment: the system's intended purpose, architecture, training data, performance metrics, and known limitations. This documentation must stay current.

Conformity assessment before deployment -- an assessment demonstrating that the system meets the Act's requirements. For some categories the provider carries it out internally; for others it requires a third-party notified body.

Post-market monitoring: providers must monitor performance, document incidents, and report serious incidents to authorities.

Record keeping: automatically generated logs sufficient to reconstruct the system's behavior in operation. Applying the same traceability to how the system is built means, for AI-generated code, tracing what the agent produced, what context it had, and what review process it went through.

The penalties for non-compliance are severe: up to 35 million euros or 7% of global annual turnover, whichever is higher, for prohibited AI practices, and up to 15 million euros or 3% for non-compliance with high-risk obligations. For SMEs and start-ups, the lower of the two amounts applies. National market surveillance authorities can request documentation, conduct evaluations, and, on a reasoned request, obtain access to a high-risk system's source code.
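The "or" in each fine tier matters, because the turnover-based figure overtakes the fixed cap quickly for large companies. A small sketch of how the ceiling is computed, assuming the Act's rule that most undertakings face whichever amount is higher and SMEs and start-ups whichever is lower. The function and constant names are illustrative, and the result is only an upper bound, not a prediction of any actual fine.

```python
# Fine tiers: (fixed cap in EUR, percent of worldwide annual turnover).
PROHIBITED_PRACTICES = (35_000_000, 7)
HIGH_RISK_OBLIGATIONS = (15_000_000, 3)


def max_fine_eur(turnover_eur: int, tier: tuple, is_sme: bool = False) -> float:
    """Upper bound of a fine tier for a given worldwide annual turnover.

    Most undertakings face whichever amount is higher; SMEs and start-ups
    face whichever amount is lower.
    """
    fixed_cap, percent = tier
    turnover_based = turnover_eur * percent / 100
    return float(min(fixed_cap, turnover_based) if is_sme else max(fixed_cap, turnover_based))


# EUR 2 billion turnover, high-risk tier: 3% (EUR 60M) exceeds the EUR 15M cap.
print(max_fine_eur(2_000_000_000, HIGH_RISK_OBLIGATIONS))  # 60000000.0
```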

The Colorado AI Act

The Colorado AI Act (SB 24-205) focuses on algorithmic discrimination. It places the following obligations on developers and deployers of high-risk AI systems:

Impact assessments before deployment: organizations must assess the risk of algorithmic discrimination -- unlawful differential treatment or disparate impact based on protected characteristics including race, color, age, disability, religion, sex, and veteran status.

Risk management: policies and procedures to manage the risks identified in the impact assessment, covering governance, data management, and ongoing monitoring.

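Consumer notification: when an AI system influences a consequential decision about a consumer, the consumer must be told AI was involved. If the decision is adverse, the consumer must also be given the principal reasons for it and an opportunity to correct inaccurate personal data and to appeal.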

Documentation: organizations must document their AI systems, risk assessments, mitigation measures, and monitoring practices in a form producible for the Colorado Attorney General.

Enforcement is through the Colorado Attorney General's office, with violations treated as consumer protection offenses subject to civil penalties up to $20,000 per violation. An affirmative defense, often described as a safe harbor, is available to organizations that discover and cure a violation and that comply with a recognized framework such as ISO/IEC 42001 or the NIST AI Risk Management Framework.

The audit framework

With those requirements as context, here is an audit framework in five layers, each building on the one before it.

Layer 1: Provenance tracking

The foundation of any audit is attribution. You need to know, for every piece of code in production, who or what produced it and when.

What to implement:

- Tag every commit with its authorship source: human, AI-assisted (human reviewed), or AI-generated (agent produced). Your git tooling can enforce this through commit hooks or CI checks.

- For AI-generated code, record the agent that produced it, the model version, and the prompt or task description that triggered the generation. This does not need to be in the commit message itself -- a linked artifact store works -- but it needs to be traceable from the commit.

- For AI-assisted code (where a human used Copilot or similar), define a threshold. If the developer substantially modified the output, it can reasonably be attributed to the human. If the developer accepted the output with minimal changes, it should be attributed to the AI. Your organization needs a consistent policy here, documented and enforced.

- Maintain a provenance log that maps every production deployment to the commits it contains, and every commit to its authorship source. This is the chain of custody that auditors will follow.
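The commit-hook enforcement from the first bullet can be sketched as a `commit-msg` hook, assuming the authorship source is recorded as a git trailer. The trailer names `AI-Provenance` and `AI-Record` are hypothetical conventions, not a git or vendor standard. The script could be installed as `.git/hooks/commit-msg` or run in CI against each commit message.

```python
#!/usr/bin/env python3
import re
import sys

# Hypothetical trailer names; pick your own and document them in the governance policy.
PROVENANCE_KEY = "AI-Provenance"
RECORD_KEY = "AI-Record"
ALLOWED_SOURCES = {"human", "ai-assisted", "ai-generated"}

# "Key: value" on a single line; [ \t] keeps an empty value from swallowing the next line.
TRAILER_RE = re.compile(r"^([A-Za-z][A-Za-z-]*):[ \t]*(.+?)[ \t]*$", re.MULTILINE)


def parse_trailers(message: str) -> dict:
    """Collect trailers from the last paragraph, ignoring git's '#' comment lines."""
    lines = [line for line in message.splitlines() if not line.startswith("#")]
    last_paragraph = "\n".join(lines).strip().split("\n\n")[-1]
    return dict(TRAILER_RE.findall(last_paragraph))


def validate_commit_message(message: str) -> list:
    """Return policy violations for one commit message (an empty list means compliant)."""
    trailers = parse_trailers(message)
    source = trailers.get(PROVENANCE_KEY)
    if source is None:
        return [f"missing '{PROVENANCE_KEY}:' trailer"]
    if source not in ALLOWED_SOURCES:
        return [f"unknown authorship source '{source}'"]
    if source == "ai-generated" and RECORD_KEY not in trailers:
        return [f"ai-generated commits need an '{RECORD_KEY}:' link to the agent run record"]
    return []


if __name__ == "__main__" and len(sys.argv) > 1:
    # git runs commit-msg hooks with the path of the message file as the only argument.
    with open(sys.argv[1], encoding="utf-8") as fh:
        problems = validate_commit_message(fh.read())
    for problem in problems:
        print(f"commit-msg: {problem}", file=sys.stderr)
    sys.exit(1 if problems else 0)
```

Local hooks can be skipped with `git commit --no-verify`, so the same check belongs in CI as well.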
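The attribution threshold for AI-assisted code only works if it is measurable. One possible proxy is line-level similarity between the AI suggestion and what the developer committed. The 0.8 threshold and the use of `difflib` similarity below are assumptions for illustration, not a recommended policy value.

```python
from difflib import SequenceMatcher

# Hypothetical policy value: at or above this line-level similarity, attribute to the AI.
AI_ATTRIBUTION_THRESHOLD = 0.8


def retained_ratio(suggestion: str, committed: str) -> float:
    """Line-level similarity (difflib's 2*M/T) between suggestion and committed code."""
    return SequenceMatcher(None, suggestion.splitlines(), committed.splitlines()).ratio()


def attribute(suggestion: str, committed: str, threshold: float = AI_ATTRIBUTION_THRESHOLD) -> str:
    """Apply the documented policy: minimal edits -> 'ai', substantial rewrite -> 'human'."""
    if not suggestion.strip():
        return "human"  # nothing was suggested, so nothing to attribute to the AI
    return "ai" if retained_ratio(suggestion, committed) >= threshold else "human"


suggested = "def fee(amount):\n    return amount * 0.02\n"
rewritten = "def fee(amount, tier):\n    rate = RATES[tier]\n    return round(amount * rate, 2)\n"
print(attribute(suggested, suggested))  # ai
print(attribute(suggested, rewritten))  # human
```

Similarity is a crude proxy. For example, reformatting a suggestion lowers the ratio without changing who wrote it, so normalizing whitespace first may be worth documenting as part of the policy.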
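With a provenance log in place, the authorship breakdown an auditor asks for is mostly a query. A minimal sketch, assuming commits have already been collected per release (for example, from trailers between two release tags) into the hypothetical `Commit` records below. It computes the breakdown and lists AI-generated commits with no linked agent run record, which are the chain-of-custody gaps an auditor would find.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Commit:
    sha: str
    source: str                      # "human", "ai-assisted", or "ai-generated"
    record_id: Optional[str] = None  # link to the agent run record, if any


def authorship_breakdown(commits: List[Commit]) -> Dict[str, float]:
    """Percentage of a release's commits per authorship source."""
    counts = Counter(c.source for c in commits)
    total = sum(counts.values())
    return {source: round(100 * n / total, 1) for source, n in counts.items()} if total else {}


def custody_gaps(commits: List[Commit]) -> List[str]:
    """AI-generated commits whose agent configuration and task context cannot be traced."""
    return [c.sha for c in commits if c.source == "ai-generated" and not c.record_id]


# Hypothetical provenance log: release tag -> commits it shipped.
provenance_log = {
    "v2.3.0": [Commit("a1f", "human"), Commit("b2e", "ai-generated", "run-0117"),
               Commit("c3d", "ai-generated"), Commit("d4c", "ai-assisted")],
}
for release, commits in provenance_log.items():
    print(release, authorship_breakdown(commits), "gaps:", custody_gaps(commits))
```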

What auditors will ask:

"Show me the authorship breakdown of your last three production releases. For the AI-generated components, show me the agent configuration and the task context."

Layer 2: Specification alignment

The second audit question -- "against what specification was it written?" -- is the one most organizations cannot answer today. Not just for AI-generated code. For any code.

This is where most teams fail, and it is where the regulatory pressure is most direct. Both the EU AI Act and the Colorado AI Act require documentation of the system's intended purpose, its design constraints, and its behavioral boundaries. If the code was written against a specification, producing that documentation is straightforward. If the code was "vibed" into existence with no specification, producing that documentation retroactively is somewhere between expensive and impossible.

What to implement:

- Maintain a machine-readable specification for every service or component that operates in a regulated domain. The specification should declare the system's purpose, its inputs and outputs, its data handling requirements, its security constraints, and its compliance obligations.

- Ensure that AI agents have access to the relevant specification before they generate code. This is the critical link that turns "the agent wrote some code" into "the agent wrote code against this specification." Without it, you have generated code with no provenance of intent.

- Version the specification alongside the code. When the specification changes, the code should change. When the code changes, you should be able to trace the change back to either a specification update or an explicit deviation that was reviewed and approved.

- For existing codebases where no specification exists, prioritize creating specifications for high-risk components -- anything that touches PII, financial transactions, access control, or consequential decisions. You do not need to specify everything at once. You need to specify the things that auditors will ask about first.
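Here is one illustration of what "machine-readable" and "traceable" can mean in practice. The field names mirror the first bullet, but several pieces are assumptions: the `ComponentSpec` and `Change` types, the JSON serialization handed to the agent, and the rule that a change must cite the current spec version or an approved deviation ID. Many teams would keep the spec itself in YAML or JSON next to the code.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class ComponentSpec:
    """Hypothetical machine-readable specification for one regulated component."""
    component: str
    version: str
    purpose: str
    inputs: Tuple[str, ...]
    outputs: Tuple[str, ...]
    data_handling: Tuple[str, ...]
    security_constraints: Tuple[str, ...]
    compliance_obligations: Tuple[str, ...]


@dataclass(frozen=True)
class Change:
    sha: str
    component: str
    spec_version: Optional[str] = None  # spec version the change was written against
    deviation_id: Optional[str] = None  # reviewed and approved deviation, if any


def agent_context(spec: ComponentSpec) -> str:
    """Serialize the spec so it can be given to the agent and stored with the run record."""
    return json.dumps(asdict(spec), indent=2, sort_keys=True)


def untraceable(changes: List[Change], specs: Dict[str, ComponentSpec]) -> List[Tuple[str, str]]:
    """Changes that trace to neither the current spec version nor an approved deviation."""
    findings = []
    for change in changes:
        if change.deviation_id:
            continue  # explicitly reviewed and approved
        spec = specs.get(change.component)
        if spec is None:
            findings.append((change.sha, "component has no specification"))
        elif change.spec_version is None:
            findings.append((change.sha, "change cites no specification version"))
        elif change.spec_version != spec.version:
            findings.append((change.sha, f"written against superseded spec {change.spec_version}"))
    return findings


credit = ComponentSpec(
    component="credit-scoring", version="1.3",
    purpose="Score consumer credit applications",
    inputs=("application",), outputs=("score", "reason_codes"),
    data_handling=("no raw national ID numbers in logs",),
    security_constraints=("service-to-service calls use mTLS",),
    compliance_obligations=("EU AI Act high-risk", "Colorado AI Act"),
)
```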

What auditors will ask:

"Show me the specification for your credit scoring module. Show me that the code implements the specification. Show me the change history of both."

Layer 3: Verification and testing

The third audit question -- "how did you verify it?" -- requires demonstrable evidence that the code does what it claims to do.

What to implement:

- Automated test suites that verify behavior against the specification, not just against themselves. A test that verifies an AI-generated function returns the expected output is useful. A test that verifies the function's behavior matches the specification's declared behavior is auditable.

- Static analysis that checks for compliance-relevant patterns: data handling violations, insecure authentication patterns, hardcoded credentials, unauthorized data access. These checks should run in CI and block deployment on failure.

- AI-specific review checklists for pull requests containing AI-generated code. The checklist should cover architectural consistency (does this code fit the existing patterns?), specification alignment (does it implement what the specification declares?), security properties (does it honor the security constraints?), and duplication (does this functionality already exist?).

- Periodic bias audits for systems that influence consequential decisions. The Colorado AI Act specifically requires assessment for algorithmic discrimination. Automate what you can -- statistical tests for disparate impact across protected characteristics -- and document the results.
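One way to make a test "auditable" rather than merely useful is to give each declared behavior a clause ID in the specification and have the tests report failures by clause. A minimal pytest-style sketch follows. The clause IDs, the 300 to 850 range, and the toy `score_application` function are hypothetical placeholders, not a real scoring model.

```python
# Hypothetical clauses copied verbatim from the credit-scoring specification.
SPEC_CLAUSES = {
    "CS-3.1": "score is an integer between 300 and 850",
    "CS-3.2": "every declined application returns at least one reason code",
}


def score_application(app: dict) -> dict:
    """Toy stand-in for the component under test."""
    score = max(300, min(850, 300 + 5 * app["on_time_payments"] - 100 * app["defaults"]))
    decision = "approve" if score >= 620 else "decline"
    return {"score": score, "decision": decision,
            "reason_codes": [] if decision == "approve" else ["R01"]}


def verify_against_spec(score_fn, applications) -> list:
    """Check every case against each declared clause; failures name the clause they break."""
    failures = []
    for i, app in enumerate(applications):
        out = score_fn(app)
        if not (isinstance(out["score"], int) and 300 <= out["score"] <= 850):
            failures.append(f"case {i}: CS-3.1 violated ({SPEC_CLAUSES['CS-3.1']})")
        if out["decision"] == "decline" and not out["reason_codes"]:
            failures.append(f"case {i}: CS-3.2 violated ({SPEC_CLAUSES['CS-3.2']})")
    return failures


def test_credit_scoring_matches_spec():
    cases = [{"on_time_payments": 80, "defaults": 0}, {"on_time_payments": 10, "defaults": 2}]
    assert verify_against_spec(score_application, cases) == []
```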
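In practice, the static-analysis bullet is usually met by wiring an established scanner into CI. The toy below only illustrates the shape of a blocking gate: scan files, report findings by rule, and exit nonzero so the pipeline fails. The two patterns are illustrative and deliberately incomplete.

```python
#!/usr/bin/env python3
import re
import sys
from pathlib import Path
from typing import List, Tuple

# Illustrative rules only; production scanners ship much larger, tuned rule sets.
RULES = {
    "hardcoded-credential": re.compile(
        r"""(?i)(password|passwd|secret|api_key|token)\s*=\s*['"][^'"]{4,}['"]"""
    ),
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def scan_text(path: str, text: str) -> List[Tuple[str, int, str]]:
    """Return (path, line number, rule) for every rule that matches a line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append((path, lineno, rule))
    return findings


def main(paths: List[str]) -> int:
    findings = []
    for p in paths:
        findings += scan_text(p, Path(p).read_text(encoding="utf-8", errors="replace"))
    for path, lineno, rule in findings:
        print(f"{path}:{lineno}: {rule}")
    return 1 if findings else 0  # a nonzero exit code fails the CI job


if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1:]))
```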
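A common first screen for disparate impact is the adverse impact ratio: each group's favorable-outcome rate divided by the most favored group's rate. The 0.8 default below is the "four-fifths" rule of thumb from US employment-selection guidance, used here only as a review trigger. The Colorado AI Act does not prescribe this test, and a flag calls for statistical and legal follow-up rather than settling the question. Group labels are placeholders.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Favorable-outcome rate per group from (group, favorable) pairs."""
    totals, favorable = Counter(), Counter()
    for group, ok in outcomes:
        totals[group] += 1
        favorable[group] += int(ok)
    return {group: favorable[group] / totals[group] for group in totals}


def adverse_impact_ratios(outcomes) -> Dict[str, float]:
    """Each group's rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    return {group: (rate / best if best else 1.0) for group, rate in rates.items()}


def flagged_groups(outcomes, threshold: float = 0.8) -> List[str]:
    """Groups below the threshold ratio, for human review and documentation."""
    return sorted(g for g, r in adverse_impact_ratios(outcomes).items() if r < threshold)


decisions = ([("group_a", True)] * 50 + [("group_a", False)] * 50
             + [("group_b", True)] * 30 + [("group_b", False)] * 70)
print(flagged_groups(decisions))  # ['group_b']
```

Persisting each run's ratios alongside the model and data versions gives you the documented results that the bullet above calls for.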

What auditors will ask:

"Show me your test coverage for the components that influence credit decisions. Show me the last three bias audit results. Show me a pull request where AI-generated code was rejected and why."

Layer 4: Governance and change management

Regulations do not just require that the right things were done. They require that a system exists to ensure the right things are always done.

What to implement:

- A documented AI governance policy that specifies which AI tools are approved, how they are configured, what review processes apply to their output, and who is accountable for compliance. This policy needs an owner and a review cadence.

- Role-based access controls on AI agent capabilities. Not every agent should be able to modify code in every domain. Agents operating on high-risk components should have explicit authorization, logged and auditable.

- Change management procedures that apply to AI agent configurations, not just to code. When someone changes the rules file that governs how an agent operates on your billing system, that change should go through the same review and approval process as a change to the billing code itself.

- Incident response procedures that account for AI-generated failures. When a production incident is traced to AI-generated code, the response should include not just the code fix but a review of the agent configuration, the specification gap, or the review failure that allowed the defective code to reach production.
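The access-control and change-management bullets can be sketched together. Agents get explicit path grants, every decision is logged, and edits to agent rules files are refused without a named human approver, whichever agent asks. The agent names, path patterns, and `AuditLog` shape are assumptions. In a real setup, this check would sit in whatever layer mediates the agent's write access, such as a CI job or a tool wrapper.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from fnmatch import fnmatchcase
from typing import Dict, List, Optional

# Hypothetical policy: paths each agent may modify (fnmatch patterns; '*' also matches '/').
AGENT_GRANTS: Dict[str, List[str]] = {
    "docs-agent": ["docs/*"],
    "billing-agent": ["services/billing/*"],
}
# Hypothetical locations of agent rules files; changing them needs a named human approver.
AGENT_CONFIG_PATTERNS = [".agents/*", "*/AGENT_RULES.md"]


@dataclass
class AuditLog:
    entries: List[dict] = field(default_factory=list)

    def record(self, **event) -> None:
        event["at"] = datetime.now(timezone.utc).isoformat()
        self.entries.append(event)


def authorize(agent: str, path: str, log: AuditLog, approver: Optional[str] = None) -> bool:
    """Decide whether an agent may write to a path, and log the decision either way."""
    if any(fnmatchcase(path, pattern) for pattern in AGENT_CONFIG_PATTERNS):
        allowed = approver is not None
        reason = "config change approved" if allowed else "config change needs human approval"
    else:
        allowed = any(fnmatchcase(path, p) for p in AGENT_GRANTS.get(agent, []))
        reason = "granted" if allowed else "no grant for path"
    log.record(agent=agent, path=path, allowed=allowed, reason=reason, approver=approver)
    return allowed
```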

What auditors will ask:

"Show me your AI governance policy. Show me the approval chain for changes to agent configurations in your payment processing system. Show me your incident log for the last twelve months and how AI-related incidents were resolved."

Layer 5: Continuous monitoring

Compliance is not a point-in-time assessment. Both regulations require ongoing monitoring and documentation.

What to implement:

- Runtime monitoring that tracks AI agent behavior in production. What actions are agents taking? What data are they accessing? Are their outputs consistent with the specifications?

- Drift detection between specifications and implementations. When the code drifts from the specification -- because of manual changes, agent modifications, or accumulated small deviations -- the drift should be detected and flagged automatically.

- Periodic compliance reviews on a defined cadence (quarterly is a reasonable starting point for high-risk systems). The review should assess whether the governance policy is being followed, whether specifications are current, whether provenance tracking is complete, and whether monitoring is catching the issues it should.

- Documentation that demonstrates continuous compliance, not just compliance at the time of the last audit. Auditors will ask not just "are you compliant today?" but "have you been compliant since the last review?" Continuous monitoring is the evidence that answers the second question.
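Drift detection needs a machine-checkable link between each specification and the code that claims to implement it. A minimal sketch, assuming each component ships a small manifest (a hypothetical convention) that records two things: the fingerprint of the spec it was last reviewed against and the outputs it actually exposes. A scheduled job could run this and publish the findings as the specification drift report.

```python
import hashlib
import json
from typing import Dict, List


def spec_fingerprint(spec: dict) -> str:
    """Stable short hash of a spec, independent of key order."""
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def drift_report(specs: Dict[str, dict], manifests: Dict[str, dict]) -> List[dict]:
    """Compare each spec with the implementation manifest that claims to implement it."""
    findings = []
    for component, spec in sorted(specs.items()):
        manifest = manifests.get(component)
        if manifest is None:
            findings.append({"component": component, "issue": "no implementation manifest"})
            continue
        if manifest["aligned_to"] != spec_fingerprint(spec):
            findings.append({"component": component, "issue": "spec changed since last alignment"})
        declared, actual = set(spec["outputs"]), set(manifest["outputs"])
        if declared != actual:
            findings.append({
                "component": component,
                "issue": f"outputs differ: missing {sorted(declared - actual)}, "
                         f"undeclared {sorted(actual - declared)}",
            })
    return findings
```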
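Runtime monitoring starts with agents emitting structured events. A minimal sketch, assuming a JSON-lines event stream with `agent`, `action`, and `data_class` fields and a per-agent allow-list derived from each specification (all hypothetical). It answers two of the monitoring questions, what agents are doing and what data they touch, and flags any access the specification does not allow. An agent missing from the allow-list has every access flagged.

```python
import json
from collections import Counter
from typing import Dict, Iterable, List, Set

# Hypothetical: data classes each agent's specification permits it to access.
PERMITTED_DATA: Dict[str, Set[str]] = {
    "support-agent": {"ticket", "order_status"},
    "underwriting-agent": {"application", "credit_report"},
}


def parse_events(lines: Iterable[str]) -> List[dict]:
    """Agent activity events emitted as JSON lines; blank lines are skipped."""
    return [json.loads(line) for line in lines if line.strip()]


def summarize(events: List[dict]) -> dict:
    """Count actions per agent and list accesses outside each agent's permitted data."""
    actions = Counter(f"{e['agent']}:{e['action']}" for e in events)
    violations = [e for e in events if e["data_class"] not in PERMITTED_DATA.get(e["agent"], set())]
    return {"actions": dict(actions), "violations": violations}
```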

What auditors will ask:

"Show me your monitoring dashboard for AI agent activity in production. Show me the last specification drift report. Show me evidence that your governance policy has been followed consistently over the last twelve months."

The practical starting point

If you are reading this and thinking "we have none of this," you are not alone; most organizations do not. The regulations are new, the tooling is immature, and the gap is wide.

The prioritized path:

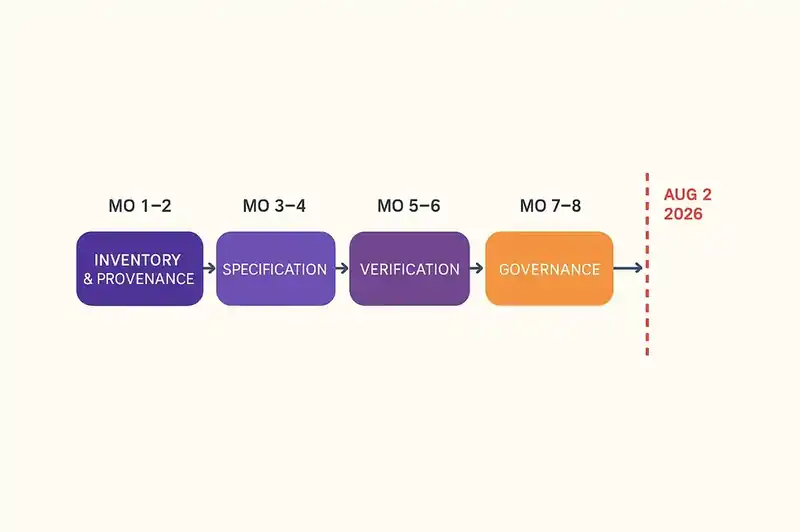

Month 1-2: Inventory and provenance. Identify which of your systems are high-risk under the EU AI Act or the Colorado AI Act. Implement provenance tracking for those systems. Start tagging commits with authorship source. Build the chain of custody from deployment to commit to author.

Month 3-4: Specification for high-risk components. Create machine-readable specifications for your highest-risk components. Start with anything that touches PII, financial transactions, or consequential decisions. Ensure AI agents operating on these components have access to the specifications.

Month 5-6: Verification infrastructure. Implement specification-aligned testing for high-risk components. Add static analysis for compliance-relevant patterns. Create the AI-specific review checklist and start using it.

Month 7-8: Governance framework. Document the AI governance policy. Implement change management for agent configurations. Define roles and responsibilities.

Month 9-10: Monitoring and continuous compliance. Deploy runtime monitoring for AI agent activity. Implement drift detection. Establish the review cadence.

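Run back to back, these phases take about ten months, so finishing before the Colorado effective date (June 30, 2026) and the EU AI Act date (August 2, 2026) requires starting roughly ten months ahead of them. If you start later, compress the timeline by running phases in parallel, beginning with the systems most likely to be classified as high-risk. The EU AI Act deadline of August 2, 2026 is not negotiable.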

The ISO and NIST alignment

Both regulations give weight to recognized standards. Under the EU AI Act, following harmonised standards gives a presumption of conformity with the requirements those standards cover. The Colorado AI Act explicitly ties its affirmative defense to compliance with ISO/IEC 42001 (the AI management system standard), the NIST AI Risk Management Framework, or a comparable recognized framework.

If your organization has an existing ISO or NIST compliance program, extending it to cover AI-generated code is more efficient than building from scratch. The frameworks already provide the structure for risk assessment, documentation, monitoring, and continuous improvement. What they typically lack is content specific to AI-generated code: provenance tracking, specification alignment, and agent governance. The five-layer framework above fills those gaps within the existing structure.

If you are pursuing ISO/IEC 42001 certification, the five layers map directly to the standard's requirements:

- Provenance tracking supports Clause 6 (planning) and Clause 8 (operation).

- Specification alignment supports Clause 7 (support) and Clause 8.

- Verification supports Clause 9 (performance evaluation).

- Governance supports Clause 5 (leadership) and Clause 7.

- Continuous monitoring supports Clause 9 and Clause 10 (improvement).

The cost of waiting

It is tempting to treat compliance as a problem for later -- wait for enforcement, wait for tooling to mature, wait for someone else to go first.

That is a mistake for two reasons.

First, the regulations look back. When the EU AI Act is enforced in August 2026, auditors will not just ask "are you compliant today?" They will ask about the governance systems, documentation, and monitoring that should have been in place during development. Retroactive compliance is far more expensive than proactive compliance because it requires reconstructing documentation and provenance that should have been captured at the time.

Second, the gap between AI-generated code and auditable code widens every day that provenance is not tracked, specifications are not maintained, and governance is not enforced. Every commit that enters the codebase without provenance is a commit that an auditor cannot trace. Every specification that is not written is context that an agent cannot use. The debt compounds.

The organizations best positioned when enforcement begins will be the ones that treated AI-generated code as auditable code from the start -- because auditability is a prerequisite for quality, security, and maintainability regardless of regulatory pressure.

Your auditor will ask who wrote the code, against what specification, and how you verified it. Start having answers now.