You're starting an engineering organization from scratch. Blank slate. No legacy processes, no inherited org chart, no "we've always done it this way." You know, going in, that AI agents will write somewhere around half the code your team ships. You know that percentage is going up, not down. You know the agents are fast, cheap, and have no idea what your company does.

What org do you build?

Not the one you'd retrofit. The org you'd design if you were being honest about what software development actually looks like right now.

We keep having this conversation with engineering leaders, and the answers are consistent: almost nobody would build the org they currently have.

The 41% inflection point

GitClear's analysis of 211 million changed lines of code found that 41% of code written globally in 2024 was AI-generated. That's not a forecast. That's a measurement. And it was taken before the latest generation of coding agents hit mainstream adoption.

When nearly half the code in your codebase wasn't written by a human on your team, the job descriptions you wrote in 2022 are describing work that no longer exists in its original form. The senior engineer who spent 60% of their time writing code and 40% reviewing it is now spending 20% writing code, 30% reviewing AI output, and the remaining 50% on... what, exactly?

That "what, exactly" is where the org design question lives. Because the work didn't disappear. It shifted. And the shift has a pattern to it.

Three role transformations that are already happening

Developer becomes orchestrator. The core skill of a software developer has historically been translating business requirements into working code. That translation is increasingly handled by agents. What remains is decomposing ambiguous problems into well-scoped tasks that agents can execute, then stitching the results into a coherent system. That's the actual hard part now.

This isn't a demotion. It's a different skill. An orchestrator needs to understand the problem space deeply enough to know when an agent's output is subtly wrong, even when it compiles and passes tests. They need taste and system-level thinking. But the day-to-day looks different: less typing, more specifying; less implementing, more evaluating.

Kent Beck put it plainly: "The value of 90% of my skills just dropped to $0. The leverage for the remaining 10% went up 1000x." He expanded on this at the Craft conference in Budapest: "Some of what we used to do has suddenly become easy, which increases the leverage of the other things that we do -- things like setting goals, project management, and breaking down projects into milestones."

The 10% that went up 1000x is orchestration: seeing a system whole, knowing what to ask for, knowing when the answer is wrong despite looking right.

Reviewer becomes intent validator. Traditional code review asks: is this code correct? Is it clean? Does it follow our patterns? Those are the wrong questions when the code was generated by an agent that can produce syntactically perfect, well-structured, thoroughly wrong implementations.

The right question for AI-generated code is: does this implementation match the intent? Not "is the code good" but "is this what we actually wanted." That requires the reviewer to have access to a clear statement of intent, not just a Jira ticket title, but a real specification of what the change should accomplish and what constraints it should respect.

Most teams don't have this. Most reviews of AI-generated code are a senior engineer eyeballing a diff and asking "does this look right?" That's verification theater when the diffs are large, syntactically clean, and internally consistent. The reviewer's pattern-matching brain says "this looks like good code" and hits approve. Meanwhile, the implementation might solve a slightly different problem than intended, or solve the right problem in a way that violates an architectural constraint the agent didn't know about.

Intent validation requires explicit intent. You can't validate against something that doesn't exist as an artifact.

Architect becomes specification engineer. This is the transformation with the most leverage. In the human-only org, architecture was often an oral tradition. The architect drew diagrams, gave talks, reviewed PRs, and carried the system design in their head. The team absorbed the architecture through osmosis: pairing sessions, design reviews, lunch conversations.

Agents don't eat lunch. They don't attend design reviews. They have no osmosis. Every architectural decision that lives only in a human's head is invisible to the agent, which means the agent will violate it, which means the reviewer has to catch it, which means the reviewer needs to remember it. At scale, this breaks.

The specification engineer's job is to make the implicit explicit. Take the architectural decisions, the system constraints, the domain rules, the integration contracts (everything that used to live in the team's collective memory) and encode them in persistent, machine-readable artifacts that agents can reference and humans can validate against.

This isn't writing documentation. Documentation is for humans and it drifts. Specifications are for machines and they have to be precise. The specification engineer writes the constraints that agents operate within. A wiki page and an architecture diagram don't do this job.

The platform team problem

This is where the org design question gets hard.

Gartner predicts that 80% of large software engineering organizations will have dedicated platform engineering teams by the end of 2026, up from 45% in 2022. The logic is sound: as systems get more complex and developer experience becomes a competitive advantage, you need a team whose job is to make other teams productive.

But the economics are brutal for small and mid-size companies. DX's Q1 2026 benchmarks show that organizations allocate 2-6% of total engineering headcount to developer productivity, averaging 4.7%. That translates to roughly one platform engineer per 17-50 developers. For a 20-person engineering team, that's one person. Maybe two. You can't build an internal developer platform with two people. You can barely maintain one.
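
The arithmetic behind those ratios is easy to check. Here is a quick sketch in Python that uses only the percentages quoted above; the function names are ours and purely illustrative.

```python
# Back-of-the-envelope check of the staffing ratios quoted in the text.
# The allocation shares (2%, 4.7%, 6%) come from the article; the
# function names are hypothetical helpers, not part of any benchmark.

def engineers_per_platform_engineer(allocation: float) -> float:
    """Total engineers supported by one platform engineer at a given headcount share."""
    return 1 / allocation


def platform_headcount(team_size: int, allocation: float) -> float:
    """Fractional platform headcount implied by a headcount share."""
    return team_size * allocation


for share in (0.02, 0.047, 0.06):
    ratio = engineers_per_platform_engineer(share)
    heads = platform_headcount(20, share)
    print(f"{share:.1%} allocation: 1 per {ratio:.0f} engineers, "
          f"{heads:.2f} heads on a 20-person team")
```

At the 4.7% average, a 20-person team funds about 0.94 of a platform engineer, which is where "that's one person" comes from. Even the top of the range only gets you to 1.2.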

Even at scale, the results are mixed. The State of Platform Engineering Report found that while 45.5% of organizations have dedicated, budgeted platform teams, most remain reactive rather than strategic. Only 36.6% have dedicated platform product managers. Nearly 30% of platform teams don't measure success at all. These aren't the numbers of a mature discipline. They're the numbers of an industry that knows platform engineering matters but hasn't figured out how to do it.

Goldman Sachs launched a Developer Experience platform with a dedicated executive sponsor (their CIO), a full product team with PMs and UX designers, and adoption incentives tied to performance metrics. They hit 90% adoption in 24 months. But Goldman Sachs has thousands of engineers and a budget to match. That approach doesn't translate to a Series B startup with 30 engineers.

Charity Majors, CTO at Honeycomb, made an observation that reframes this: "AI came for code generation first because it was the easiest problem to solve." The implication is that the harder problems -- developer experience, system understanding, operational coherence -- are next. And when AI comes for those problems, it won't be through a code editor. It'll be through the platform layer.

Which means the platform team question isn't just about human headcount anymore. It's about what primitives the platform needs to support so that agents and humans can work within the same system. 94% of organizations now view AI as critical to the future of platform engineering, according to the State of Platform Engineering Report. The platform layer is where agent governance will live.

Specification engineers: the missing role

We think there's a role that doesn't exist on most org charts but should: the specification engineer.

This isn't a new title for "technical writer" or "architect" or "product manager." It's a distinct function. The specification engineer maintains the machine-readable identity of each software system (boundaries, constraints, integration contracts, architectural decisions, domain rules) as persistent artifacts that both agents and humans reference.

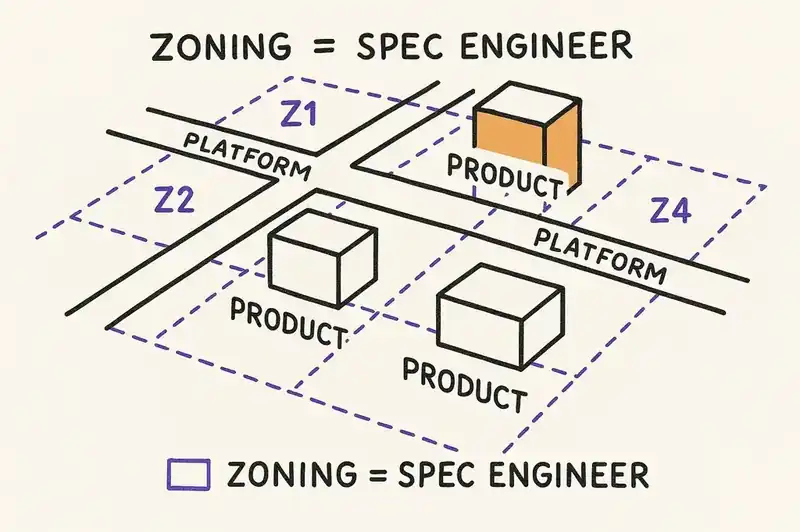

Think of it this way. A platform engineer builds the roads. A product engineer builds the buildings. A specification engineer maintains the zoning code. They don't write the software and they don't build the infrastructure. They define what kinds of software can be built, where, and under what constraints. And they keep those definitions current as the system evolves.

In a small org, this might be a hat worn by a senior engineer or an architect. In a larger org, it's a dedicated role or team. The work includes:

- Translating architectural decisions into machine-readable specifications that agents can consume

- Maintaining system boundary definitions that constrain where agents can and can't make changes

- Defining integration contracts between services that agents must respect

- Encoding domain rules that would otherwise exist only in the team's collective knowledge

- Validating that agent-generated changes conform to specifications before human review

This role sits between architecture, product, and platform. It doesn't replace any of them. It fills a gap that didn't matter when humans wrote all the code (humans could hold the context) but matters a great deal when agents write half of it (agents can't).
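
To make the boundary-definition item in that list concrete, here is a minimal sketch of a system boundary that a pre-review check could consume. Everything in it is an assumption for illustration: the `SystemBoundary` name, the glob-based format, and the example paths are hypothetical, not an established standard.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class SystemBoundary:
    """Hypothetical machine-readable boundary for one service."""

    service: str
    agent_writable: tuple[str, ...]          # globs an agent may modify
    agent_forbidden: tuple[str, ...] = ()    # globs that always need a human

    def violations(self, changed_paths: list[str]) -> list[str]:
        """Return the changed paths an agent was not allowed to touch."""
        flagged = []
        for path in changed_paths:
            forbidden = any(fnmatchcase(path, g) for g in self.agent_forbidden)
            allowed = any(fnmatchcase(path, g) for g in self.agent_writable)
            # A forbidden match wins even if the path is otherwise writable.
            if forbidden or not allowed:
                flagged.append(path)
        return flagged


billing = SystemBoundary(
    service="billing",
    agent_writable=("services/billing/*",),
    agent_forbidden=("services/billing/migrations/*",),
)

changed = [
    "services/billing/api/handlers.py",
    "services/billing/migrations/0042_add_column.sql",
    "services/ledger/models.py",
]
print(billing.violations(changed))
```

The example flags the migration (explicitly forbidden) and the ledger file (outside the boundary) and lets the handler change through. Note that `fnmatchcase` lets `*` match across `/`, which is convenient here but coarser than a real path matcher.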

Why you need a governance layer

The platform team builds the infrastructure. The product teams build the features. The specification engineers define the constraints. But who enforces them?

In the human-only org, enforcement was social. The architect pushed back in code review. The tech lead rejected PRs that violated patterns. The senior engineer said "we don't do it that way" in standup. Social enforcement works when the violators are humans who learn from feedback.

Agents don't learn from standup. They don't internalize feedback between sessions (not yet, anyway). Every invocation starts from the same baseline. If the constraint isn't in the context, it doesn't exist. If the specification isn't referenced, it's irrelevant.

So you need a governance layer, something between the agent and the codebase that enforces the constraints the specification engineers define. Not a linter (that's syntax). Not a test suite (that's behavior). A layer that validates intent, architecture, and system coherence.

This is the layer that connects the platform team's infrastructure, the specification engineer's constraints, and the product team's intent into a coherent system of governance. Without it, you have three groups doing their jobs independently and hoping the results cohere. Sometimes they do. Increasingly, they don't.

The governance layer needs to be persistent (it outlives any single agent session), machine-readable (agents can reference it), version-controlled (it evolves with the system), and authoritative (it's the source of truth for what this software is supposed to be).

A practical transition framework

Most of you aren't starting from scratch. You have an existing org with existing processes and roles. Here's a transition framework, in roughly the order we'd recommend.

Phase 1: Make intent explicit (months 1-3). Before changing any roles or hiring anyone new, start writing down the things that currently live in people's heads: architectural decisions, system boundaries, integration contracts, domain rules. Not in a wiki that'll drift. In structured, version-controlled artifacts that sit alongside the code.

You don't need a specification engineer for this. Your existing senior engineers and architects can do it. The output doesn't have to be perfect or machine-readable yet. The goal is to surface the implicit knowledge that agents are currently violating because nobody told them it existed.

Concrete deliverables: an ADR (Architecture Decision Record) practice if you don't have one. A system boundary document for each service. Integration contract definitions between services. A "constraints" file in each repository that lists the architectural rules for that codebase.
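
A constraints file doesn't need to be elaborate to be useful. One possible shape, sketched below, is a small JSON document with a stable ID per rule and a pointer back to the ADR that justifies it. The field names, rule IDs, and file paths are all hypothetical; use whatever structure your team will actually maintain.

```python
import json

# Hypothetical contents of a per-repository constraints file.
CONSTRAINTS_JSON = """
{
  "service": "orders",
  "rules": [
    {"id": "ARCH-001",
     "rule": "Handlers never query the database directly; use the repository layer.",
     "adr": "docs/adr/0007-repository-layer.md"},
    {"id": "ARCH-002",
     "rule": "No synchronous calls to the payments service; publish an event instead.",
     "adr": null}
  ]
}
"""

REQUIRED_RULE_KEYS = {"id", "rule", "adr"}


def load_constraints(text: str) -> dict:
    """Parse a constraints file and reject malformed or duplicate rules."""
    data = json.loads(text)
    seen_ids = set()
    for rule in data["rules"]:
        missing = REQUIRED_RULE_KEYS - set(rule)
        if missing:
            raise ValueError(f"rule is missing keys: {sorted(missing)}")
        if rule["id"] in seen_ids:
            raise ValueError(f"duplicate rule id: {rule['id']}")
        seen_ids.add(rule["id"])
    return data


def rules_without_adr(constraints: dict) -> list[str]:
    """List rule IDs that have no recorded decision behind them yet."""
    return [r["id"] for r in constraints["rules"] if r["adr"] is None]


constraints = load_constraints(CONSTRAINTS_JSON)
print(rules_without_adr(constraints))
```

Rules with no ADR are a useful early signal: they are constraints the team enforces but has never written the reasoning down for.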

Phase 2: Restructure review around intent (months 2-5). Change your code review process to explicitly ask: does this change match the declared intent? Every PR needs a clear statement of intent beyond "fixes bug" or "adds feature." Reviewers should be comparing the implementation against the specification, not just evaluating the code in isolation.

Start tracking how often agent-generated code violates declared architectural constraints. This gives you a baseline for how much governance debt you're carrying and where the specification gaps are.
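
The tracking can start as a list someone appends to during review. A minimal sketch, assuming a hand-maintained log of (date, rule ID) pairs; the rule IDs and dates are invented for illustration.

```python
from collections import Counter
from datetime import date

# Hypothetical review log: when a violation was caught and which rule it broke.
review_log = [
    (date(2025, 3, 3), "ARCH-001"),
    (date(2025, 3, 5), "ARCH-002"),
    (date(2025, 3, 10), "ARCH-001"),
]


def weekly_tally(entries):
    """Count violations per ISO (year, week)."""
    tally = Counter()
    for found_on, _rule_id in entries:
        iso = found_on.isocalendar()
        tally[(iso[0], iso[1])] += 1
    return tally


def tally_by_rule(entries):
    """Count violations per rule, to show where the specification gaps are."""
    return Counter(rule_id for _found_on, rule_id in entries)


print(weekly_tally(review_log))
print(tally_by_rule(review_log))
```

The per-week count is the baseline. The per-rule count tells you which constraint to make more explicit first.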

Concrete deliverables: a PR template that requires an intent statement and relevant specification references. A weekly tally of specification violations found in review. A feedback loop from review to specification, so when a reviewer catches a violation, the specification gets updated to be more explicit.
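
A PR template only helps if someone checks that it was filled in. Here is a small sketch of that check, assuming the template uses `## Intent` and `## Specifications` headings. Those heading names are our assumption; adjust them to match your own template.

```python
import re

# Section headings the hypothetical PR template requires.
REQUIRED_SECTIONS = ("Intent", "Specifications")


def missing_sections(pr_body: str) -> list[str]:
    """Return required sections that are absent or left empty in a PR description."""
    missing = []
    for name in REQUIRED_SECTIONS:
        # Capture everything after the heading up to the next "## " heading or end of text.
        pattern = rf"^## {re.escape(name)}[ \t]*\n(.*?)(?=^## |\Z)"
        match = re.search(pattern, pr_body, re.MULTILINE | re.DOTALL)
        if match is None or not match.group(1).strip():
            missing.append(name)
    return missing


complete = (
    "## Intent\n"
    "Reject orders that exceed the customer's credit limit.\n\n"
    "## Specifications\n"
    "- constraints.json: ARCH-002\n"
)
title_only = "## Intent\nfixes bug\n"

print(missing_sections(complete))
print(missing_sections(title_only))
```

This only verifies that the sections exist and are non-empty. Whether the intent statement says anything useful is still a human judgment, which is the point of the review.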

Phase 3: Introduce the specification function (months 4-8). By now you have written specifications and data on where agents violate them. Assign someone (a senior engineer, an architect, a tech lead) to own the specification practice. Their job: keep the specs current, close the gaps that review data reveals, and start making them machine-readable so agents can reference them directly.

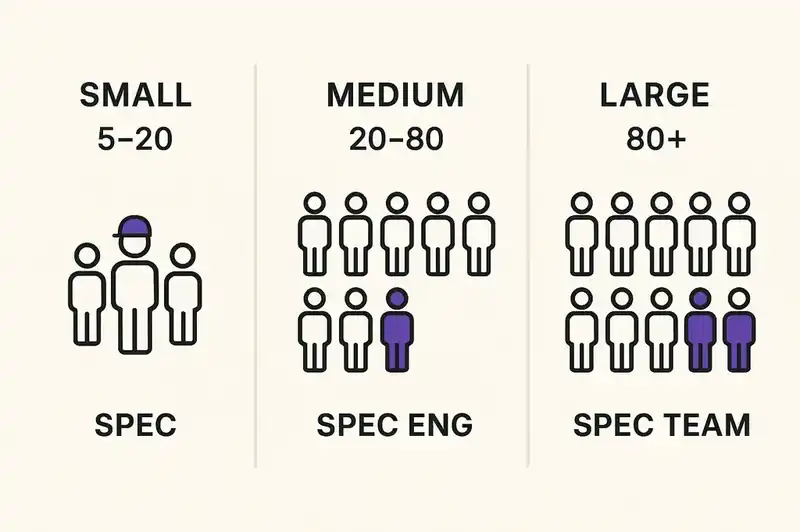

In a small org (under 30 engineers), this is probably 20-30% of one person's time. In a medium org (30-100), it might be a full-time role. In a large org (100+), it's a team.

Concrete deliverables: machine-readable specification format adopted across the org. Agent prompts and configurations that reference specifications. Measurable reduction in specification violations found in review.
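
"Agent prompts that reference specifications" can be as simple as rendering the rules into the context an agent receives at the start of every session. The sketch below assumes rules are dictionaries with `id` and `rule` keys. That shape, and the wording of the preamble, are illustrative assumptions rather than any particular agent's configuration format.

```python
def render_constraints_preamble(service: str, rules: list[dict]) -> str:
    """Turn a list of rules into a text block to prepend to an agent's instructions."""
    lines = [f"Architectural constraints for the {service} service:"]
    for rule in rules:
        lines.append(f"- [{rule['id']}] {rule['rule']}")
    lines.append("If the task conflicts with a constraint, stop and report the conflict.")
    return "\n".join(lines)


# Hypothetical rules, in the same spirit as a per-repository constraints file.
rules = [
    {"id": "ARCH-001", "rule": "Handlers never query the database directly."},
    {"id": "ARCH-002", "rule": "No synchronous calls to the payments service."},
]

print(render_constraints_preamble("orders", rules))
```

Keeping the rule IDs in the rendered text means a reviewer, a CI check, and the agent are all pointing at the same identifier when something goes wrong.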

Phase 4: Connect the governance layer (months 6-12). This is where the platform team (if you have one) or your DevOps/infrastructure function connects the specifications to the CI/CD pipeline. Specification validation becomes part of the build. Agent-generated PRs are automatically checked against declared constraints before a human ever sees them.

The goal is to shift governance from social enforcement (humans catching violations in review) to systematic enforcement (violations caught automatically before review). This frees reviewers to focus on harder questions (does this solve the right problem? is the approach sound?) instead of catching architectural violations the agent didn't know about.

Concrete deliverables: specification validation in CI. Automated pre-review checks for agent-generated code. Dashboard tracking specification coverage and violation rates.
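
As a rough illustration of what "specification validation in CI" might look like at its simplest, the sketch below checks one architectural rule (handlers must not import the database module) against the files in a change, and turns the result into an exit code and a rate for a dashboard. The rule, module names, and paths are hypothetical, and the line-based import match is deliberately crude. A real check would parse the code rather than scan text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str
    path: str
    line_no: int


def forbidden_import_findings(
    files: dict[str, str], rule_id: str, layer_prefix: str, forbidden_module: str
) -> list[Finding]:
    """Flag files under layer_prefix that import forbidden_module or a submodule of it."""
    findings = []
    for path, source in files.items():
        if not path.startswith(layer_prefix):
            continue
        for line_no, line in enumerate(source.splitlines(), start=1):
            tokens = line.split()
            if len(tokens) < 2 or tokens[0] not in ("import", "from"):
                continue
            module = tokens[1].rstrip(",")
            if module == forbidden_module or module.startswith(forbidden_module + "."):
                findings.append(Finding(rule_id, path, line_no))
    return findings


def exit_code(findings: list[Finding]) -> int:
    """Non-zero fails the build, so violations surface before human review."""
    return 1 if findings else 0


def violation_rate(prs_checked: int, prs_with_findings: int) -> float:
    """Share of checked PRs with at least one finding, for a dashboard."""
    return prs_with_findings / prs_checked if prs_checked else 0.0


# Hypothetical changed files: path -> contents.
changed_files = {
    "app/handlers/orders.py": "import json\nfrom app.db import session\n",
    "app/repositories/orders.py": "from app.db import session\n",
}
findings = forbidden_import_findings(changed_files, "ARCH-001", "app/handlers/", "app.db")
print(findings, exit_code(findings))
```

Here the handler is flagged and the repository file is not, because the rule applies only to the handler layer. The reviewer never has to remember that rule; they only see the PR once it passes.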

Three org models for the agent era

What the resulting org might look like at different scales:

Small team (5-20 engineers). No dedicated platform team or specification engineer. Every senior engineer spends about 20% of their time on specification work. One engineer is the "specification owner" who reviews and maintains consistency. Agent governance is lightweight: constraints files in repos, basic CI validation, intent-focused review templates. The team leans on external platforms rather than building internal ones.

Medium team (20-80 engineers). One or two dedicated specification engineers, possibly combined with an architecture role. A small platform function (2-4 people) that includes specification infrastructure in its scope. Specifications are machine-readable, CI validates against them, and the review process is structured around intent validation. Platform team focuses on developer experience and agent integration rather than building everything from scratch.

Large team (80+ engineers). Dedicated specification engineering team (2-5 people) reporting to the architecture or platform function. Full platform team (5-15 people) with dedicated product management. Specifications cover system boundaries, integration contracts, domain rules, and architectural constraints. The governance layer is a first-class platform service. Specification engineers work directly with product teams to translate requirements into constraints before agents generate code.

In all three models, the same thing holds: someone has to own the explicit declaration of what the software is supposed to be. In the human-only org, that declaration was distributed across the team's collective knowledge. In the agent era, it has to be an artifact.

Caveats

Human developers aren't being replaced. The 41% figure means agents are generating code, not engineering software. The gap between those two activities is enormous, and it's where all the interesting work lives now.

Not every org needs to hire specification engineers immediately. Most teams should start by making their existing implicit knowledge explicit. The role emerges from the practice.

Platform engineering isn't dead or irrelevant, but its scope is expanding. A platform team that only thinks about CI/CD pipelines and Kubernetes clusters is solving last decade's problem. The platform team of the agent era also needs to provide the primitives for agent governance, which means specification infrastructure, identity layers, and intent validation.

And we don't have this figured out. The org chart for the agent era is being drawn in real time, by teams making it up as they go. The ones doing it best share one trait: they've stopped pretending that adding AI tools to a human-designed process is sufficient. They're redesigning the process.

What has to be explicit now

The engineering org designed for human-only development evolved over decades into a sophisticated system for coordinating human intelligence. Code review, sprint planning, architectural governance, technical debt management: these practices emerged because they solved real coordination problems between human contributors.

Those coordination problems have not gone away. They have multiplied. You now have the same human coordination challenges plus a new class: coordinating humans with agents, coordinating agents with each other, and maintaining system coherence when half the implementation comes from sources that do not understand the system.

The orgs that thrive here will recognize the shift and design for it. Not by adding "AI" to their existing org chart, but by rethinking what the org chart is for. The roles change. The review process changes. The governance model changes. The platform layer changes. What does not change is the need for someone, somewhere, to hold and enforce a coherent vision of what the software is supposed to be.

That used to be implicit. Now it has to be explicit.

Somebody has to own what the software is supposed to be. We're building the tooling that lets that declaration live outside anyone's head.