Gartner predicted that by 2026, 80% of large software engineering organizations would have platform engineering teams, up from 45% in 2022. The trajectory is steep because the problem platform teams solve -- reducing cognitive load on developers while maintaining organizational standards -- gets worse every year as systems get more complex.

Now add AI agents to the picture. The State of Platform Engineering Report Volume 4, released in January 2026, found that 94% of organizations view AI as critical to the future of platform engineering. Not "interesting" or "worth exploring." Critical.

This creates a convergence that most organizations have not yet recognized: platform teams are the natural governance layer for AI agents. The platform team already owns the thing that agent governance requires -- a shared layer of standards, tooling, and guardrails that development teams consume.

Why platform teams, specifically

The argument is structural, not political.

Platform engineering emerged because the DevOps model -- "you build it, you run it" -- scaled poorly. Individual teams took on too much cognitive load: infrastructure provisioning, CI/CD pipelines, security scanning, observability, compliance documentation. Quality was uneven, duplication was massive, and every team solved the same problems independently.

Platform teams fixed this by creating a shared layer: an Internal Developer Platform (IDP) that provides reusable services, components, and tools. Developers consume the platform instead of building their own infrastructure from scratch. The platform encodes organizational standards (security policies, deployment procedures, monitoring requirements) so that following the standard is easier than deviating from it.

This is exactly the pattern that AI agent governance needs. Without a shared layer, every team runs its own agents with its own configurations. The duplication is massive. The quality is uneven. The compliance gaps multiply. The organization pays for the same problems N times.

The platform team already has the organizational mandate to provide that shared layer. They already have the relationships with development teams. They already understand the balance between centralized standards and developer autonomy. Extending the platform to include agent governance is a natural expansion of scope, not a new mandate.

The Netflix and Spotify models

The most successful platform engineering implementations share a philosophy: make the right thing the easy thing. Netflix calls this the "Paved Road." Spotify calls it the "Golden Path." The idea is the same. You do not mandate that teams follow the standard. You make the standard so convenient that teams choose it voluntarily.

Netflix built Wall-E, a security gateway that fronts internal applications with authentication, authorization, logging, and security headers baked in. Developers did not need to implement these controls themselves -- Wall-E handled them. Following security best practices was the default, not a checklist. The paved road was smooth enough that deviating from it required deliberate effort. Wall-E now fronts over 350 applications and adds roughly three new production apps per week.

Spotify embedded their Golden Paths in Backstage, their internal developer portal. Engineers could create new microservices in minutes, with CI/CD, monitoring, logging, and documentation already configured. The golden path was not a document. It was a template -- an executable, opinionated, maintained artifact that encoded institutional knowledge.

Both models share three properties that translate directly to agent governance:

Opt-in, not mandated. Teams choose the paved road because it is better than the alternative. They are not forced onto it. Mandated approaches fail -- shadow IT taught us this, and shadow AI is teaching us again.

Opinionated defaults with escape hatches. The platform makes decisions so individual teams do not have to. But when a team has a legitimate reason to deviate, the platform supports that deviation with guardrails rather than blocking it entirely.

Continuously maintained. The paved road evolves as the organization's understanding evolves. The platform team maintains it, updates it, and keeps it aligned with current practice.

The agent governance layer

Applying the paved road model to AI agents means building a governance layer within the platform that gives agents shared context, consistent standards, and enforceable guardrails.

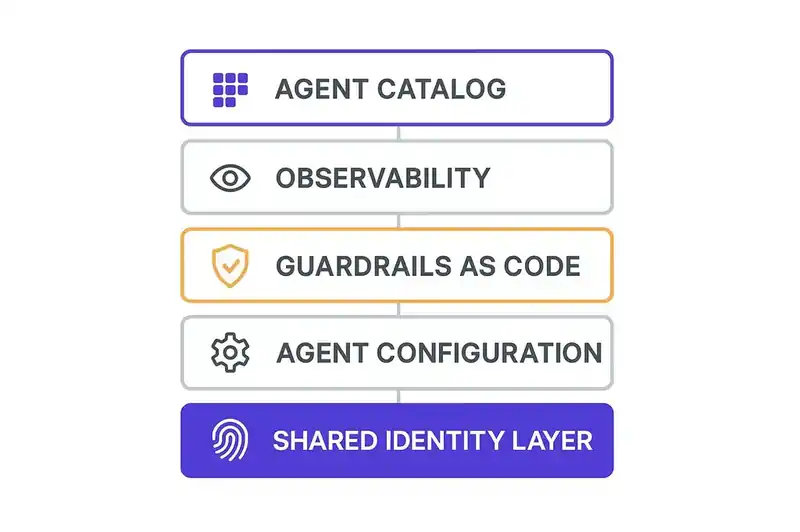

Component 1: A shared identity layer

The foundational component is a persistent, machine-readable declaration of what the software is -- its architecture, its standards, its constraints, its compliance requirements, its relationships to other systems. This identity layer serves the same function for agents that the IDP serves for developers: it is the shared context that makes individual decisions consistent with organizational standards.

Without a shared identity layer, every team writes its own rules files, its own agent configurations, its own context documents. The fragmentation is already happening. Claude wants CLAUDE.md. Cursor wants .cursorrules. GitHub Copilot wants .github/copilot-instructions.md. Windsurf wants .windsurf/rules. Each tool gets its own version of the truth, maintained independently, drifting at its own pace.

The platform team is the natural owner of the shared identity layer because they already own the shared standards layer. The identity layer is the standards layer made machine-readable. The coding standards, the security policies, the architectural patterns, the compliance requirements -- the platform team already maintains these. Making them available to agents in a structured format is an extension of existing work, not a new category.
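A minimal sketch of what "one source of truth, many tool files" could look like. The `ServiceIdentity` schema, its field names, and the rendering format are assumptions made for illustration, not a published standard. The target paths are three of the tool files named above; a directory-style target such as .windsurf/rules would need a filename the text does not specify, so it is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceIdentity:
    """Hypothetical schema for one service's entry in the identity layer."""
    name: str
    architecture: str
    standards: tuple = ()
    constraints: tuple = ()
    compliance: tuple = ()
    depends_on: tuple = ()


# Three of the tool-specific files named in the text.
TOOL_TARGETS = ("CLAUDE.md", ".cursorrules", ".github/copilot-instructions.md")


def _section(title, items):
    """Format one labelled bullet list, or a placeholder if it is empty."""
    bullets = [f"- {item}" for item in items] or ["- (none declared)"]
    return [f"{title}:"] + bullets


def render_context(identity):
    """Render the identity as plain text that an agent can read as context."""
    lines = [f"Service: {identity.name}", f"Architecture: {identity.architecture}"]
    lines += _section("Standards", identity.standards)
    lines += _section("Constraints", identity.constraints)
    lines += _section("Compliance", identity.compliance)
    lines += _section("Depends on", identity.depends_on)
    return "\n".join(lines) + "\n"


def render_for_tools(identity):
    """Map every tool-specific path to the same generated text."""
    text = render_context(identity)
    return {path: text for path in TOOL_TARGETS}


# Illustrative data only.
payments = ServiceIdentity(
    name="payment-processing",
    architecture="event-driven microservice",
    standards=("org coding standard",),
    constraints=("changes require security review",),
    compliance=("PCI-DSS",),
)
files = render_for_tools(payments)
```

Because every file is generated from the same declaration, the per-tool copies cannot drift apart; only the declaration is edited by hand.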

Component 2: Agent configuration as platform service

Today, teams configure their own agents. They choose the model, set the parameters, write the system prompts, define the allowed actions. Each team does this independently. The result is the same fragmentation that platform engineering was created to solve.

In the paved road model, agent configuration becomes a platform service. The platform provides default agent configurations that encode organizational standards. A "code generation agent" default configuration includes the coding standards, the security requirements, the testing expectations, the documentation format. Teams consume the default and customize where needed.

This does not mean one agent for the entire organization. It means shared defaults with team-level overrides. The platform provides the baseline. Teams extend it for their specific domain. The configuration is version-controlled, auditable, and traceable -- the same properties that platform teams already enforce for infrastructure configuration.
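One way to picture "shared defaults with team-level overrides" is a recursive merge in which a few platform-owned settings cannot be changed. The sketch below assumes configurations are nested dictionaries; the keys, the `LOCKED_KEYS` set, and the `merge_config` helper are all hypothetical, and locked paths are assumed to point at leaf values.

```python
from copy import deepcopy

# Hypothetical platform baseline for a "code generation agent".
PLATFORM_DEFAULT = {
    "role": "code-generation",
    "standards": {"style_guide": "org-default", "tests_required": True},
    "security": {"secret_scanning": True, "allow_network": False},
}

# Hypothetical: settings the platform does not let teams override.
LOCKED_KEYS = {("security", "secret_scanning")}


def merge_config(default, override, locked=LOCKED_KEYS, _path=()):
    """Return default with override applied on top, rejecting locked keys."""
    merged = deepcopy(default)
    for key, value in override.items():
        path = _path + (key,)
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Both sides are mappings: merge one level deeper.
            merged[key] = merge_config(merged[key], value, locked, path)
        elif any(lp[:len(path)] == path for lp in locked):
            # The override would replace a locked value or its parent.
            raise ValueError(".".join(path) + " is locked by the platform")
        else:
            merged[key] = deepcopy(value)
    return merged


# A team-level override for a payments domain (illustrative values).
team_override = {
    "standards": {"style_guide": "payments"},
    "domain": {"pci_scope": True},
}
team_config = merge_config(PLATFORM_DEFAULT, team_override)
```

The baseline is never mutated, so the merged result can be stored and reviewed as its own version-controlled artifact alongside the override that produced it.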

Component 3: Guardrails as code

Netflix's Wall-E made security the default. The equivalent for agents is making compliance the default.

Guardrails as code means encoding the organization's constraints -- security policies, data handling requirements, deployment procedures, compliance obligations -- in a form that agents consume automatically. The agent does not need to be told "do not write PII to logs." The guardrail prevents it. The agent does not need to be told "require approval for production deployments." The guardrail enforces it.

The platform team already manages guardrails for infrastructure (network policies, IAM configurations, resource limits) and for CI/CD (required checks, approval gates, security scans). Agent guardrails are a third category of the same type. They are policies encoded as configuration, managed by the platform, consumed by the tools that developers use.
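The two examples above (no PII in logs, approval for production deployments) can be sketched as small policy functions evaluated before an agent action runs. Everything here is illustrative: the `Action` shape, the rule names, and especially the PII patterns, which are deliberately naive and stand in for whatever detection the organization actually uses.

```python
import re
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class Action:
    """Hypothetical description of something an agent is about to do."""
    kind: str                      # e.g. "write_log" or "deploy"
    target: str = ""               # e.g. an environment name
    payload: str = ""              # e.g. the log message
    approved_by: Optional[str] = None


# Naive stand-ins for real PII detection: email addresses and long digit runs.
PII_PATTERNS = (
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"),
    re.compile(r"\b\d{13,16}\b"),
)


def no_pii_in_logs(action):
    if action.kind == "write_log" and any(p.search(action.payload) for p in PII_PATTERNS):
        return Decision.DENY
    return Decision.ALLOW


def production_needs_approval(action):
    if action.kind == "deploy" and action.target == "production" and not action.approved_by:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


GUARDRAILS = (no_pii_in_logs, production_needs_approval)


def evaluate(action, guardrails=GUARDRAILS):
    """Return the strictest decision produced by any guardrail."""
    decisions = [rule(action) for rule in guardrails]
    if Decision.DENY in decisions:
        return Decision.DENY
    if Decision.NEEDS_APPROVAL in decisions:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW
```

The agent is never asked to remember the rule. The check sits between the agent and the action, which is the same position an approval gate occupies in a CI/CD pipeline.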

Component 4: Observability for agents

Platform teams own observability. They provide the monitoring, logging, alerting, and dashboarding that development teams consume. Extending this to agents means tracking what agents are doing across the organization.

This is not surveillance. It is the same operational visibility that platform teams provide for any other infrastructure component. What agents are running. What actions they are taking. What code they are generating. Whether their outputs are consistent with the shared identity layer. Where the drift is occurring.

The observability data serves multiple stakeholders. Engineering leaders get visibility into agent adoption and effectiveness. Security teams get visibility into agent access patterns and anomalies. Compliance teams get the audit trail they need to demonstrate governance. FinOps teams get the cost attribution they need to manage spending.

Microsoft's February 2026 report on Fortune 500 AI agent adoption emphasized that the organizations succeeding with agents are the ones that have "observability, governance, and security" integrated into their agent infrastructure. That integration is the platform team's job.
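As a rough sketch of what agent observability data might contain, the block below defines a structured event and two simple reports over a stream of events. The `AgentEvent` fields, the idea of an `identity_version` stamp, and the helper names are assumptions chosen to mirror the questions listed above; they do not describe any particular monitoring product.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentEvent:
    """Hypothetical record of one agent action."""
    agent_id: str
    team: str
    action: str
    identity_version: str          # identity-layer version the agent read
    guardrails_triggered: tuple = ()
    timestamp: str = ""


def to_log_line(event):
    """Serialize an event as one JSON line, stamping the time if missing."""
    record = asdict(event)
    if not record["timestamp"]:
        record["timestamp"] = datetime.now(timezone.utc).isoformat()
    return json.dumps(record, sort_keys=True)


def stale_context(events, current_version):
    """Agents whose most recent event used an outdated identity version.

    Assumes events are in chronological order. This is one simple drift signal.
    """
    latest = {}
    for event in events:
        latest[event.agent_id] = event.identity_version
    return sorted(a for a, v in latest.items() if v != current_version)


def guardrail_hits(events):
    """Count how often each guardrail was triggered across all events."""
    return Counter(name for e in events for name in e.guardrails_triggered)


# Illustrative data only.
events = [
    AgentEvent("agent-a", "payments", "generate_code", "v7"),
    AgentEvent("agent-b", "search", "write_log", "v6", ("no_pii_in_logs",)),
    AgentEvent("agent-a", "payments", "write_log", "v7", ("no_pii_in_logs",)),
]
```

The same event stream can serve the different stakeholders mentioned above: security teams filter on `guardrails_triggered`, while engineering leaders group by `team` and `action`.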

Component 5: The agent catalog

Spotify's Backstage includes a software catalog -- a registry of all services, their owners, their dependencies, and their metadata. The agent equivalent is a catalog of all agents operating within the organization: what they do, who maintains them, what access they have, what configuration they use, and what guardrails apply.

Without a catalog, the organization cannot answer basic questions. How many agents are we running? What are they doing? Who approved them? What data do they access? These are not edge case questions. They are the first things an auditor will ask, the first things a FinOps review will require, and the first things a security team needs during incident response.

The platform team maintains the service catalog. The agent catalog is a natural extension. It uses the same patterns: registration, metadata, ownership, dependency tracking. The difference is that the entities in the catalog are agents rather than microservices.
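A minimal in-memory sketch of such a catalog, assuming each agent is registered with an owner, an approver, and the data classes it may access. The `AgentEntry` fields and `AgentCatalog` methods are hypothetical and are not Backstage's schema or API; they only show that the questions an auditor asks reduce to simple queries once registration exists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEntry:
    """Hypothetical catalog record for one agent."""
    agent_id: str
    purpose: str
    owner: str
    approved_by: str
    data_access: frozenset
    config_ref: str                # pointer to the versioned configuration
    guardrails: tuple = ()


class AgentCatalog:
    def __init__(self):
        self._entries = {}

    def register(self, entry):
        """Add an agent; reject duplicates and entries without an owner."""
        if entry.agent_id in self._entries:
            raise ValueError(f"{entry.agent_id} is already registered")
        if not entry.owner:
            raise ValueError("every agent needs an owner")
        self._entries[entry.agent_id] = entry

    def count(self):
        """How many agents are we running?"""
        return len(self._entries)

    def with_access_to(self, data_class):
        """Which agents can touch this class of data?"""
        return sorted(e.agent_id for e in self._entries.values()
                      if data_class in e.data_access)

    def approver_of(self, agent_id):
        """Who approved this agent?"""
        return self._entries[agent_id].approved_by


# Illustrative data only.
catalog = AgentCatalog()
catalog.register(AgentEntry(
    "codegen-payments", "code generation", "payments-team", "security-review",
    frozenset({"source_code", "pci_scoped"}), "configs/codegen-payments@v3",
    ("no_pii_in_logs", "production_needs_approval"),
))
catalog.register(AgentEntry(
    "docs-bot", "documentation", "platform-team", "platform-lead",
    frozenset({"source_code"}), "configs/docs-bot@v1",
))
```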

The governance framework in practice

A development team wants to use an AI agent for code generation on their payment processing service. They go to the platform's agent catalog and select a code generation agent with the default configuration. The default includes the organization's coding standards, security policies, and compliance requirements.

The team customizes the configuration for their specific domain: payment-specific patterns, PCI-DSS requirements, integration constraints with the payment gateway. The customization is version-controlled and reviewed through the same process as any other configuration change.

When the agent operates, it does so against the shared identity layer. It knows that this is a payment processing service, that it handles PCI-scoped data, that changes require security review, and that the deployment cadence is weekly with a production approval gate. It knows these things not because someone wrote them in a rules file, but because the identity layer -- maintained by the platform team, consumed via the platform's standard interfaces -- provides them as live context.

The guardrails enforce the constraints automatically. The agent cannot write PCI-scoped data to logs because the guardrail prevents it. The agent cannot bypass the approval gate because the guardrail enforces it. Compliance is the default, not a manual checklist.

The observability layer tracks the agent's activity: what code it generated, what specifications it operated against, what guardrails it triggered. This data feeds into the compliance dashboard that the platform team maintains, providing the audit trail that the EU AI Act and the Colorado AI Act require.

When the FinOps team reviews AI spending, they can attribute the cost of this agent's activity to the payment processing team, to the code generation use case, and to the production deployment. The attribution void -- the inability to see where money is going -- is closed.
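The attribution step can be sketched as a group-by over usage records that carry the team, use case, and environment as tags. The `UsageRecord` fields and the use of integer cents are assumptions for illustration; real billing data would come from whatever metering the platform already has.

```python
from collections import defaultdict
from dataclasses import dataclass

# Dimensions the sketch allows grouping by (hypothetical).
DIMENSIONS = ("team", "use_case", "environment", "agent_id")


@dataclass(frozen=True)
class UsageRecord:
    """Hypothetical metering record for one unit of agent activity."""
    agent_id: str
    team: str
    use_case: str
    environment: str
    cost_cents: int                # integer cents avoid float rounding


def attribute(records, dimension):
    """Total cost per value of the chosen dimension."""
    if dimension not in DIMENSIONS:
        raise ValueError(f"unknown dimension: {dimension}")
    totals = defaultdict(int)
    for record in records:
        totals[getattr(record, dimension)] += record.cost_cents
    return dict(totals)


def unattributed_cents(records):
    """Spend that no team is tagged for: the 'attribution void'."""
    return sum(r.cost_cents for r in records if not r.team)


# Illustrative data only.
usage = [
    UsageRecord("codegen-payments", "payments", "code-generation", "production", 1250),
    UsageRecord("codegen-payments", "payments", "code-generation", "staging", 300),
    UsageRecord("docs-bot", "platform", "documentation", "production", 75),
    UsageRecord("unknown-agent", "", "unknown", "production", 410),
]
```

Tracking the untagged total as its own number makes the gap visible: as agents are registered and tagged, that figure should trend toward zero.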

The organizational dynamics

The hardest part is organizational, not technical. Platform teams already have full plates. Adding agent governance to their scope requires investment in people, tooling, and prioritization.

The argument for that investment is the same argument that justified platform engineering in the first place: the alternative is worse. Without a shared governance layer, every team builds its own, and the duplication, inconsistency, and compliance gaps all cost more. A Vanson Bourne survey found that 72% of IT and financial leaders say AI spending has become unmanageable. That unmanageability is the cost of not having a governance layer.

Gartner's prediction that over 40% of agentic AI projects will be canceled by end of 2027 -- citing escalating costs, unclear business value, and inadequate risk controls -- is a prediction about organizations that did not build the governance layer. The projects that survive are the ones where costs are attributable, value is measurable, and risk controls are in place. Those are platform team deliverables.

The organizational design question is whether agent governance is a new responsibility for the existing platform team or a new team that partners with the platform team. Both models work. What does not work is having nobody own it.

The maturity model

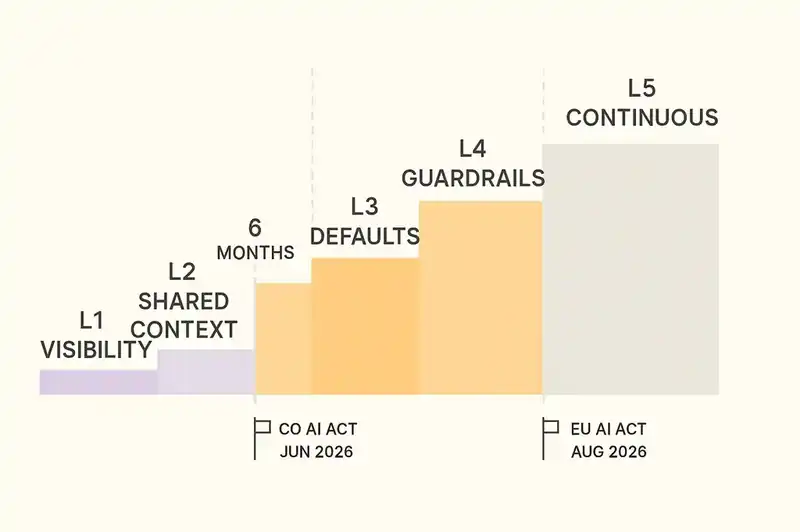

Not every organization needs all five components on day one.

Level 1: Visibility. Implement the agent catalog and basic observability. Know what agents are running, who is running them, and what they cost. This is minimum viable governance and the prerequisite for everything else.

Level 2: Shared context. Implement the shared identity layer for high-risk systems. Give agents operating on critical services access to the truth about what those services are, what standards they follow, and what constraints apply. This addresses the most dangerous governance gap -- agents operating on sensitive systems without context.

Level 3: Default configurations. Implement agent configuration as a platform service. Provide paved-road defaults that encode organizational standards. Let teams consume the defaults and customize where needed. This addresses the duplication and inconsistency that drive up costs.

Level 4: Automated guardrails. Implement guardrails as code for compliance-critical constraints. Make the right thing automatic. This addresses the compliance gaps that create legal exposure.

Level 5: Continuous governance. Full integration of agent governance into the platform's operational model: continuous monitoring, drift detection, automated compliance reporting, cost attribution. This is the steady state -- the governance layer operating as part of the platform, maintained and evolved alongside everything else the platform provides.

Most organizations should aim to reach Level 2 within six months and Level 4 within twelve months. The regulatory deadlines -- EU AI Act in August 2026, Colorado AI Act in June 2026 -- define the timeline for compliance-critical capabilities. The FinOps pressure defines the timeline for cost attribution. The organizational pressure from agent sprawl defines the timeline for everything else.
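The five levels can be turned into a rough self-assessment. The sketch below assumes the levels are cumulative, so an organization sits at the highest level for which it has every capability of that level and all levels below it. The capability names are hypothetical labels derived from the level descriptions above.

```python
# (level number, level name, capabilities assumed to be required at that level)
LEVELS = (
    (1, "Visibility", {"agent_catalog", "basic_observability"}),
    (2, "Shared context", {"identity_layer"}),
    (3, "Default configurations", {"config_service"}),
    (4, "Automated guardrails", {"guardrails_as_code"}),
    (5, "Continuous governance", {"continuous_monitoring", "drift_detection",
                                  "compliance_reporting", "cost_attribution"}),
)


def maturity_level(capabilities):
    """Highest level whose requirements, and all earlier ones, are met."""
    level = 0
    for number, _name, required in LEVELS:
        if not required <= capabilities:
            break
        level = number
    return level


def next_gaps(capabilities):
    """Capabilities still missing for the next level, sorted by name."""
    for _number, _name, required in LEVELS:
        missing = required - capabilities
        if missing:
            return sorted(missing)
    return []


# Illustrative: guardrails exist, but default configurations do not yet.
current = {"agent_catalog", "basic_observability", "identity_layer",
           "guardrails_as_code"}
```

Under the cumulative assumption, the example organization is at Level 2 even though it has guardrails, because the Level 3 capability is still missing. An organization that treats the levels as independent tracks would score itself differently.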

The convergence

Platform engineering and AI agent governance are converging because they solve the same structural problem: how do you maintain organizational standards when individual teams have the autonomy to make their own technical decisions?

Platform engineering solved this for infrastructure. The paved road model -- opinionated defaults, opt-in adoption, continuous maintenance, escape hatches with guardrails -- works. It reduced duplication, improved consistency, and lowered cognitive load while preserving developer autonomy.

That model applies to agents too, and the team that already runs the platform is positioned to run agent governance as well. The only difference is the layer of abstraction: instead of governing how developers provision infrastructure and deploy code, you are governing how agents understand software and generate outputs.

Platform teams are the natural governance layer for AI agents. The framework exists, and the organizational mandate exists. The 94% of organizations that view AI as critical to platform engineering's future are already sensing this convergence. The ones that act on it will have an advantage -- not because they adopted agents first, but because they governed agents coherently from the start.

The paved road needs a new lane.