There is a bug in every software organization on earth. It has no stack trace. No one has filed a ticket for it, because no one knows how to describe it. The bug is this: most software does not know what it is supposed to be.

That gap -- between what a system is and what it should be -- costs more than any null pointer, any race condition, any off-by-one error in history. It is the most expensive defect class in the industry. We have been treating its symptoms for decades while ignoring the cause.

The price tag

In 2018, Stripe partnered with Harris Poll to survey over 1,000 developers and 1,000 C-level executives across five countries. The resulting report, The Developer Coefficient, put a number on developer inefficiency: $300 billion in global GDP lost annually. Of that, $85 billion was attributable specifically to time spent dealing with bad code -- debugging, refactoring, patching, and modifying software that should not have broken in the first place.

The average developer in that study worked 41.1 hours per week. Of those hours, 17.3 went to maintenance: debugging and refactoring. That is 42% of a developer's working life spent not building, but fixing.

Other estimates are higher. A 2013 study from Cambridge Judge Business School, commissioned by Undo, estimated global debugging costs at $312 billion annually. Coralogix pegged the US figure alone at $113 billion spent on identifying and fixing product defects. The NIST, back in 2002, calculated $59.5 billion for the US economy -- and that was before cloud computing, microservices, and the explosion of distributed systems made the debugging problem exponentially harder.

The numbers vary depending on methodology. The pattern does not. We are spending an enormous share of our engineering capacity on understanding and fixing things that already exist, rather than building things that do not.

Where the time actually goes

The part that gets overlooked: the fix is usually not the hard part.

When a developer picks up a bug, the majority of their time is not spent writing the patch. It is spent understanding the context. What does this code do? What was it supposed to do? What depends on it? What happens if I change it?

Research on program comprehension consistently finds that developers spend between 30% and 70% of their total working time simply reading and understanding existing code. Minelli et al. found the figure closer to 70% by tracking IDE interactions. A large-scale field study by Xia et al., which measured activity across all applications, put it at 58%. The exact number depends on the study, the codebase, and the developer's experience. But the range is damning regardless of where you land in it.

Thirty to seventy percent. Not writing code. Not running tests. Not deploying. Just trying to figure out what the system is, what it does, and why it does it that way.

This is the hidden tax. It does not show up in sprint velocity charts or DORA metrics. There is no Jira status for "developer staring at code trying to understand why this module exists." But it is the largest time sink in professional software development, and it has been for forty years. The first studies measuring code comprehension time date to the 1980s. We have known about this problem for four decades. We have not solved it.

The bug report that never gets written

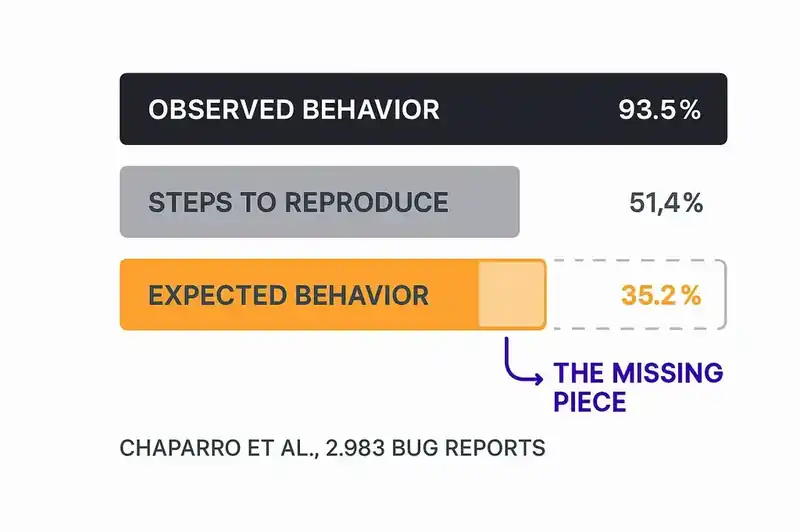

In 2017, Oscar Chaparro and colleagues published a study at ESEC/FSE analyzing 2,983 bug reports from open-source projects. They examined three components of an effective bug report: the observed behavior (what happened), the steps to reproduce (how to trigger it), and the expected behavior (what should have happened instead).

93.5% of reports described the observed behavior. That makes sense -- something broke, so you describe the breakage. 51.4% included steps to reproduce. Reasonable, though not great.

But only 35.2% described the expected behavior.

Nearly two-thirds of all bug reports fail to state what the software was supposed to do. The most critical piece of information for resolving a defect -- the specification of correct behavior -- is missing from the majority of filed tickets.

That is not laziness. It is an absence of knowledge. Developers do not describe expected behavior because, in many cases, no one has declared it. There is no authoritative source that says "this is what the system should do." There is code (which describes what the system does), there is documentation (which describes what the system did, at some point in the past, maybe), and there is institutional memory (which walks out the door every time someone leaves the company).

When expected behavior is undeclared, every bug becomes a philosophical debate. Is this actually a bug, or is it working as designed? Designed by whom? According to what specification? The one in the Confluence page that hasn't been updated since Q3 of last year? The one in the product manager's head? The one that the original author understood but never wrote down?

This is the bug nobody files: the absence of declared intent.

The compounding cost of ambiguity

The downstream effects of this gap are measurable and ugly.

Triage is a coin flip. When humans classify bug severity manually, accuracy ranges from 60% to 70%. That is not a quality problem. It is an information problem. Without a clear specification of what the software should do, severity is a judgment call, and judgment calls made under ambiguity are wrong a third of the time.

Duplicates pile up. Research consistently finds that 10% to 30% of bug reports in open repositories are duplicates. Different people encounter the same symptom, describe it differently, and file separate tickets -- because there is no shared reference for what "correct" looks like. If you had a declared specification, duplicate detection becomes a comparison against a known standard rather than a fuzzy text-matching exercise.

CI failures become archaeological expeditions. Undo's research found that the average time to find and fix a single CI failure is 13 hours. Thirteen hours. Not because the fix is complex, but because understanding the failure requires reconstructing the context: what was this test checking, what changed, what was the intended behavior, who owns this, what depends on it. Without declared identity, every CI failure is a cold start.

Maintenance swallows the roadmap. The 42% figure from Stripe is an average. For legacy systems, it is worse. For systems with high personnel turnover, it is worse. For systems being modified by AI agents that have no institutional memory at all, it is worst of all. Every hour spent understanding context is an hour not spent shipping features. And the understanding is perishable -- the context a developer painstakingly reconstructed today will be lost when they move to another project next month.

The AI triage paradox

This is where the industry is approaching a solution from the wrong direction.

AI-powered triage tools are getting good. Machine learning systems achieve over 85% accuracy in bug classification and 82% precision in priority prediction, well above the 60-70% accuracy of manual human triage. These are not theoretical results. Sentry's Seer, deployed across 4 million developers and 140,000 organizations, is automating root-cause analysis and even generating pull requests to fix issues. GitHub's Copilot coding agent, generally available since September 2025, can be assigned an issue and will autonomously produce a fix in a draft PR.

The tools work. The problem is what they are comparing against.

An AI triage system classifies a bug by reading the report, analyzing the code, and predicting severity, assignment, and resolution. But "severity" relative to what? "Correct behavior" according to what specification? The AI is pattern-matching against historical data -- past bugs, past resolutions, past code patterns. It is learning what "normal" looks like by observing what exists, not by consulting a declaration of what should exist.

This works reasonably well for obvious failures: crashes, exceptions, clear regressions. It works poorly for the subtle, expensive bugs -- the ones where the system does something coherent but wrong. Where the behavior is consistent but violates an unstated invariant. Where the code is doing exactly what it was written to do, and what it was written to do is not what the business needs.

AI triage tools achieving 85% accuracy is impressive. But the ceiling on that accuracy is determined by the quality of the specification they can compare against. When the specification is implicit -- scattered across documentation, test suites, tribal knowledge, and vibes -- 85% might be close to the maximum. The remaining 15% is not a model quality problem. It is a data problem. The source of truth does not exist in a form the model can read.

The delta, not the diagnosis

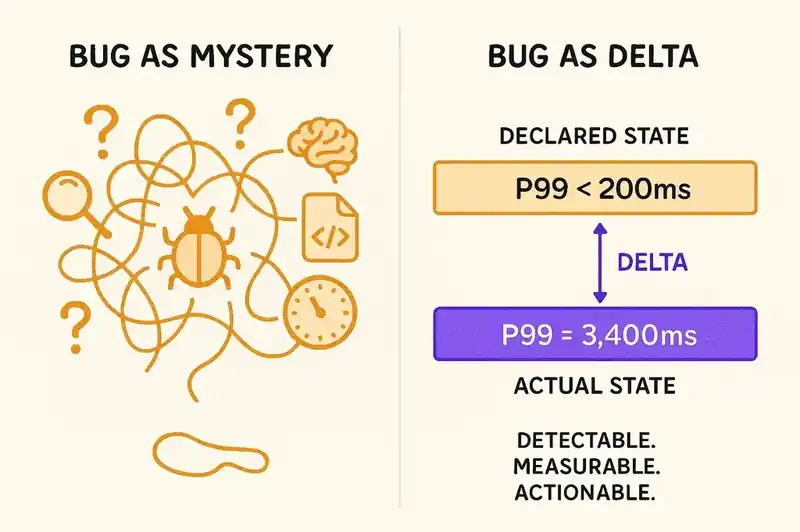

We keep treating bugs as things to be found and fixed. The entire mental model of debugging is reactive: something broke, go figure out what and repair it. The toolchain reflects this. Error monitoring. Log aggregation. Stack trace analysis. Root cause investigation. Post-mortem documentation. All of it assumes that the correct state is knowable after the fact, if you look hard enough.

But what if we started from the other direction?

What if every piece of software had a declared identity -- a machine-readable specification of what it is, what it should do, what constraints it must honor, and how it relates to other systems? Not documentation. Not tests. Not ADRs. A living, versioned, continuously reconciled declaration of intent.

In that world, a bug is not a mystery to be investigated. It is a delta. A measurable gap between declared state and actual state. The system says it should respond to this API call with idempotent behavior and P99 latency under 200ms. It is not doing that. The delta is specific, measurable, and automatically detectable.

You do not need a developer to spend six hours reconstructing context. The context is declared. You do not need a bug reporter to describe expected behavior. The expected behavior is specified. You do not need a human to guess at severity. The severity is calculable: how far is actual state from declared state, and which constraints are being violated?

The pattern is proven. Kubernetes does this for infrastructure. You declare desired state in a manifest. A reconciliation loop detects drift. When actual state diverges from declared state, the system corrects it or alerts. HashiCorp built a $6.4 billion company on this pattern for infrastructure provisioning. The entire GitOps movement is built on the same principle: declare what should exist, continuously verify that it does.

We have this pattern for servers, containers, networks, and cloud resources. We do not have it for the software itself.

What changes when identity exists

Consider what happens to the numbers when software has declared identity.

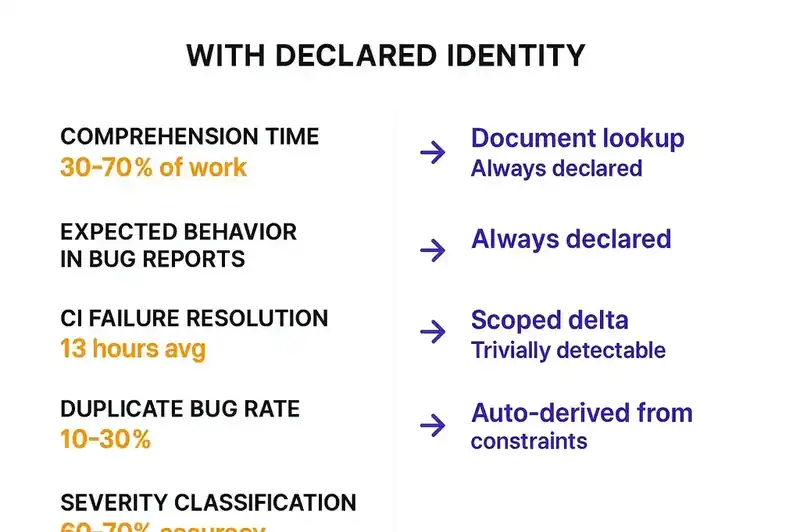

The 30-70% of time spent on program comprehension drops, because the answer to "what does this system do and why" is not buried in the code. It is declared in the identity layer. A developer -- or an agent -- can read the specification before reading the implementation. Context acquisition goes from archaeological dig to document lookup.

The 35.2% expected-behavior rate in bug reports becomes irrelevant, because expected behavior is not something a reporter needs to supply. It is already declared. The bug report becomes: "actual behavior diverges from declared behavior." The system can generate that report automatically.

The 13-hour average to resolve a CI failure shrinks, because the failure is not a mystery. It is a failed reconciliation check. The test was derived from the specification. The specification says what should be true. The test says it is not true. The delta is known. The fix is scoped.

The 10-30% duplicate rate drops, because duplicates are detectable by comparing against a single source of truth rather than by fuzzy-matching report text. Two reports about the same delta against the same specification are trivially identifiable as duplicates.

The 60-70% accuracy of manual severity classification is replaced by something better: automatic severity derivation. A violation of a PCI-DSS compliance constraint is critical by definition. A deviation from a P99 latency SLO is quantifiable. You do not need a human to guess. You need a system to measure.

And the AI triage tools that currently achieve 85% accuracy? They get a source of truth to compare against. Not historical patterns. Not implicit norms inferred from code. An explicit, machine-readable declaration of what the software should be. The ceiling on their accuracy rises because the input quality rises.

The specification is the product

Stop treating specifications as project management artifacts that get written at the beginning and forgotten by the middle. The specification is the product.

Code is an implementation of intent. Tests are a verification of intent. Documentation is a (usually stale) description of intent. But the intent itself -- what the software is, what it should do, what it must not do -- has no artifact. It lives nowhere persistent, nowhere machine-readable, nowhere continuously verified.

Kent Beck started governing AI agents with structured plan files. Andrej Karpathy moved from "vibe coding" to agentic engineering in twelve months. Simon Willison identified November 2025 as the inflection point where AI agents crossed from "mostly works" to "actually works" -- and immediately observed that the better agents get, the more you need structured oversight. InfoQ described the emerging pattern as "a declarative, contract-centric control plane."

These trajectories all point to the same conclusion: as AI agents generate more code, the value shifts from the code to the specification. The specification is what makes the code auditable, verifiable, and governable. Without it, you are debugging in the dark -- which is exactly what we have been doing for forty years, at a cost of $85 billion per year, on just the bad-code portion of the problem.

The ticket that needs filing

The $85 billion is not a code quality problem. It is an identity problem.

We do not spend 42% of our time on maintenance because developers are careless or because code is inherently fragile. We spend it because the gap between "what is" and "what should be" is undefined. Every debugging session, every triage decision, every severity assessment, every duplicate detection effort is an attempt to reconstruct, from scattered clues, what the software was supposed to be doing.

That reconstruction is the bug. The most expensive, most pervasive, most unaddressed defect in the industry. And no one is filing a ticket for it, because we have no system to file it against.

The fix is not better debugging tools, faster CI pipelines, or more sophisticated AI triage. Those are improvements to the symptom-management apparatus. The fix is giving software a declared identity -- a persistent, machine-readable, version-controlled specification that defines what the software is, so that every deviation from that identity becomes a measurable, actionable, automatically detectable delta.

The $85 billion bug is the absence of that specification. It is time someone filed the ticket.