An auditor sits across the table from your VP of Engineering. She opens a laptop, pulls up a specific commit -- a financial calculation module deployed to production eight months ago. She asks three questions:

Who wrote this code? What specification was it written against? How did your organization verify it met your stated risk management policy?

Your VP knows the answer to all three. The code was generated by an AI coding agent, working from a Jira ticket description. It was reviewed by a mid-level engineer who approved the PR in eleven minutes. No specification exists. No risk management policy covers AI-generated code. No record links the output to any governance artifact.

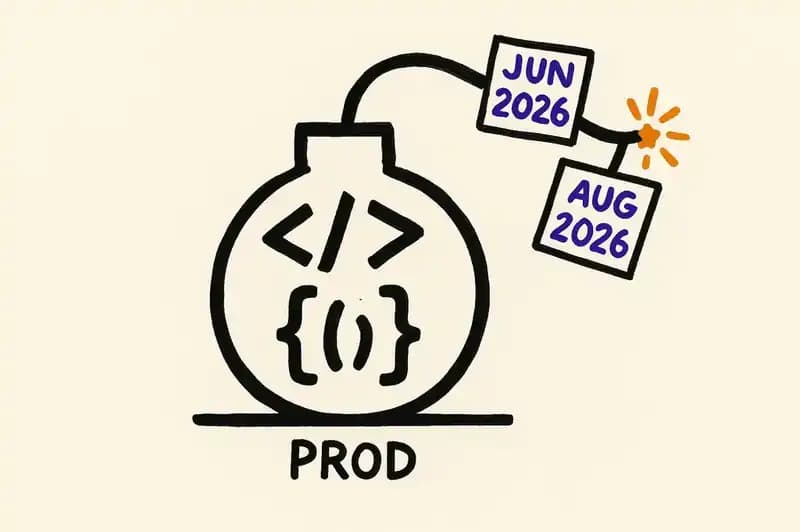

This is not a hypothetical. It's what's coming for every engineering organization that ships AI-generated code into regulated domains. The deadlines are on the calendar.

The regulatory landscape, with dates

Two major enforcement milestones are approaching. Neither is getting the attention it deserves in engineering organizations.

Colorado AI Act (SB 24-205): June 30, 2026. Colorado becomes the first US state to enforce comprehensive AI accountability requirements. Originally set for February 2026, the enforcement date was pushed back five months after Governor Polis signed SB 25B-004 in August 2025. The law requires both developers and deployers of "high-risk AI systems" to exercise "reasonable care" to prevent algorithmic discrimination. Deployers must adopt risk-management policies, perform impact assessments, and maintain consumer-facing disclosures. Violations are treated as unfair trade practices under the Colorado Consumer Protection Act, with penalties of up to $20,000 per violation, counted separately for each affected consumer. The Colorado Attorney General has exclusive enforcement authority, with a 60-day cure period after notice.

EU AI Act: August 2, 2026. Most of the EU AI Act's remaining obligations become applicable, including the requirements for high-risk AI systems listed in Annex III. That category includes systems used in critical infrastructure, employment, education, essential services, law enforcement, and border control. Obligations include risk management systems, data governance, technical documentation, record-keeping, transparency, human oversight, accuracy, robustness, and cybersecurity. The penalty structure is tiered: up to 35 million EUR or 7% of global annual turnover for prohibited practices, up to 15 million EUR or 3% for high-risk system violations, and up to 7.5 million EUR or 1% for supplying incorrect information to authorities. As of March 2026, only 8 of 27 EU member states have designated national competent authorities, so enforcement will be uneven, but the obligations are legally binding regardless.
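To make the two penalty structures concrete, here is a rough arithmetic sketch. It assumes the EU tiers are read as "the fixed amount or the turnover percentage, whichever is higher" (the summary above does not spell that out), and it treats the Colorado figure as a simple per-consumer multiplication. The function names are illustrative, and the results are upper bounds, not predictions of any actual fine.

```python
# Illustrative ceilings only. Tier names are hypothetical labels for the
# three EU tiers described above: (fixed cap in EUR, percent of turnover).
EU_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_violation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def eu_fine_ceiling(tier: str, global_turnover_eur: int) -> int:
    """Upper bound in EUR, assuming the higher of the two limits applies."""
    fixed_cap, percent = EU_TIERS[tier]
    return max(fixed_cap, global_turnover_eur * percent // 100)


def colorado_exposure(affected_consumers: int, per_violation_usd: int = 20_000) -> int:
    """Upper bound in USD if each affected consumer counts as one violation."""
    return affected_consumers * per_violation_usd


if __name__ == "__main__":
    # A company with 2 billion EUR turnover and a high-risk system violation.
    print(eu_fine_ceiling("high_risk_violation", 2_000_000_000))
    # A deployment that affected 500 Colorado consumers.
    print(colorado_exposure(500))
```

For a company with 2 billion EUR in turnover, the percentage limit dominates the fixed cap in every tier, which is why the exposure scales with company size rather than stopping at a flat number.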

These are the two that have dates. They are not the only ones in motion. Multiple US states have AI legislation pending. The NIST National Cybersecurity Center of Excellence published a concept paper in February 2026 titled "Accelerating the Adoption of Software and AI Agent Identity and Authorization," exploring standards-based approaches to identify, manage, and authorize actions taken by AI agents. The comment period closed April 2, 2026. This is the US federal government signaling that agent identity and authorization are now policy-level concerns.

The question stopped being "will AI-generated code face governance requirements?" sometime last year. The question now is whether your organization will have the infrastructure to meet them when the auditor shows up.

What the risk taxonomy actually looks like

While regulators were drafting requirements, the security community was mapping threats.

In December 2025, the OWASP GenAI Security Project released the Top 10 for Agentic Applications -- the first formal taxonomy of risks specific to autonomous AI agents. It was developed with input from over 100 security researchers and industry practitioners. The full list:

- Agent Goal Hijacking -- attackers redirect agent objectives through manipulated instructions, tool outputs, or external content

- Tool Misuse and Exploitation -- agents use legitimate tools in unsafe ways due to ambiguous prompts or manipulated input

- Identity and Privilege Abuse -- agents operate with excessive permissions or impersonate other entities

- Agentic Supply Chain Vulnerabilities -- compromised dependencies, plugins, or third-party agent components

- Unexpected Code Execution -- agents generate and execute code outside intended boundaries

- Memory and Context Poisoning -- adversarial manipulation of an agent's persistent memory or context window

- Insecure Inter-Agent Communication -- spoofed or manipulated messages between cooperating agents

- Cascading Failures -- false signals propagating through automated pipelines with escalating impact

- Human-Agent Trust Exploitation -- polished agent explanations misleading human operators into approving harmful actions

- Rogue Agents -- compromised or misaligned agents that act harmfully while appearing legitimate

Notice that three of the first four risks on the list concern tools, identity, and delegated trust boundaries. That's what happens when you deploy autonomous systems without identity infrastructure.

On April 2, 2026, Microsoft released the Agent Governance Toolkit as an open-source project -- a seven-package, multi-language system claiming to address all ten OWASP agentic risks. It includes a policy engine that intercepts agent actions before execution, agent-to-agent communication security, execution sandboxing, and automated compliance grading. Available for Python, Rust, TypeScript, Go, and .NET.

This is the taxonomy auditors and insurance underwriters are going to use. It's not speculative anymore.

What current processes miss

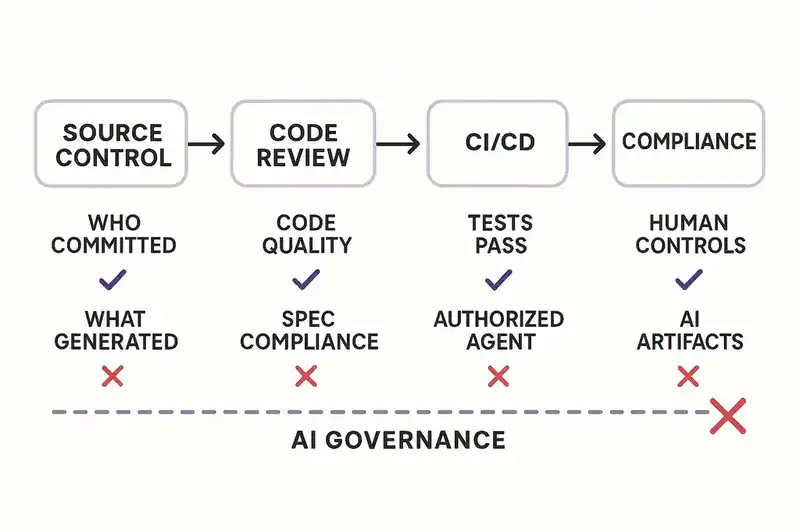

What do most engineering organizations currently have in place for governing AI-generated code? Nothing. That sounds aggressive, but walk through the standard SDLC and look for where AI governance actually lives.

Source control. Git tracks who committed code. It has no concept of what wrote it. When an AI agent generates a function and an engineer commits it, the git log says the engineer is the author. No metadata distinguishes AI-generated code from human-authored code. No record of the prompt, the model version, or the context that produced it.
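One low-cost way to close part of this gap is to record provenance in commit message trailers, the same mechanism `Signed-off-by` uses. The sketch below is a minimal illustration, not an established standard: the trailer keys `AI-Agent`, `AI-Model`, and `AI-Prompt-Hash` are hypothetical names a team would have to agree on, and Git itself attaches no meaning to them.

```python
import re

# Hypothetical trailer keys. Git does not define these; they are an
# assumed team convention for this sketch.
AI_TRAILER_KEYS = {"AI-Agent", "AI-Model", "AI-Prompt-Hash"}

TRAILER_RE = re.compile(r"^([A-Za-z][A-Za-z0-9-]*):\s*(.+)$")


def parse_ai_trailers(commit_message: str) -> dict[str, str]:
    """Return AI provenance trailers from the last paragraph of a commit message."""
    paragraphs = [p for p in commit_message.strip().split("\n\n") if p.strip()]
    if len(paragraphs) < 2:
        return {}  # a subject line alone carries no trailer block
    trailers = {}
    for line in paragraphs[-1].splitlines():
        match = TRAILER_RE.match(line.strip())
        if match and match.group(1) in AI_TRAILER_KEYS:
            trailers[match.group(1)] = match.group(2).strip()
    return trailers


def is_ai_generated(commit_message: str) -> bool:
    """A commit counts as AI-generated here only if it names an agent."""
    return "AI-Agent" in parse_ai_trailers(commit_message)


if __name__ == "__main__":
    message = (
        "Add interest accrual calculation\n\n"
        "Implements daily compounding for savings accounts.\n\n"
        "AI-Agent: example-coding-agent\n"
        "AI-Model: example-model-2026-01\n"
        "Signed-off-by: Jane Engineer <jane@example.com>\n"
    )
    print(parse_ai_trailers(message))
```

Trailers only help if something enforces them. A commit hook or CI check that rejects agent-authored commits without these fields would be the natural next step, and that part is left out here.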

Code review. PR reviews assess code quality. They say nothing about provenance, specification compliance, or risk classification. A reviewer approves a diff. They don't attest that the code was generated in compliance with a risk management policy -- because no such policy exists for them to attest against.

CI/CD pipelines. Tests verify behavior. They don't verify that the behavior matches a declared specification, or that the code was generated by an authorized agent, or that the generation process met governance requirements. A USENIX Security 2022 study analyzing 447,238 workflows across 213,854 GitHub repositories found that 99.8% of workflows are overprivileged with read-write repository access instead of read-only. The pipeline's own security posture is weak. AI governance isn't on the roadmap.

Compliance frameworks. SOC 2, ISO 27001, HIPAA -- these require documented controls, access management, and audit trails. They were designed for human-operated systems. When an AI agent writes code, selects dependencies, and proposes infrastructure changes, which controls apply? Who's the responsible party? Where's the audit trail? A SOC 2 Type 2 audit alone costs $30,000 to $150,000 per cycle. Organizations are spending that money on frameworks that have no mechanism for AI-generated artifacts.

No stage of the current SDLC captures what regulators are going to require: what agent generated this code, under what authority, against what specification, with what constraints, and who approved both the agent's involvement and its output.

The security data makes it worse

The governance gap would be bad enough on its own. But the code these agents are producing isn't clean.

Veracode's 2025 GenAI Code Security Report analyzed code generated by over 100 large language models across Java, JavaScript, Python, and C#. The findings: 45% of code samples failed security tests and introduced OWASP Top 10 vulnerabilities. AI tools failed to defend against cross-site scripting in 86% of relevant code samples. Log injection went undefended 88% of the time. Java was the worst performer, with a 72% security failure rate across tasks. Veracode's Spring 2026 update confirmed that these numbers have barely moved.

Meanwhile, adoption is not slowing down. GitHub's survey of 500 US-based developers (conducted with Wakefield Research) found that 92% use AI coding tools at work and for personal projects. The Stack Overflow 2025 Developer Survey reported 84% of developers using or planning to use AI tools. More recent 2026 data shows 73% of engineering teams using AI coding tools daily -- up from 41% in 2025.

So: nearly universal adoption. Nearly half of generated code carrying security vulnerabilities. Zero governance infrastructure. Regulatory enforcement arriving in months.

That's the time bomb.

What audit-ready AI development looks like

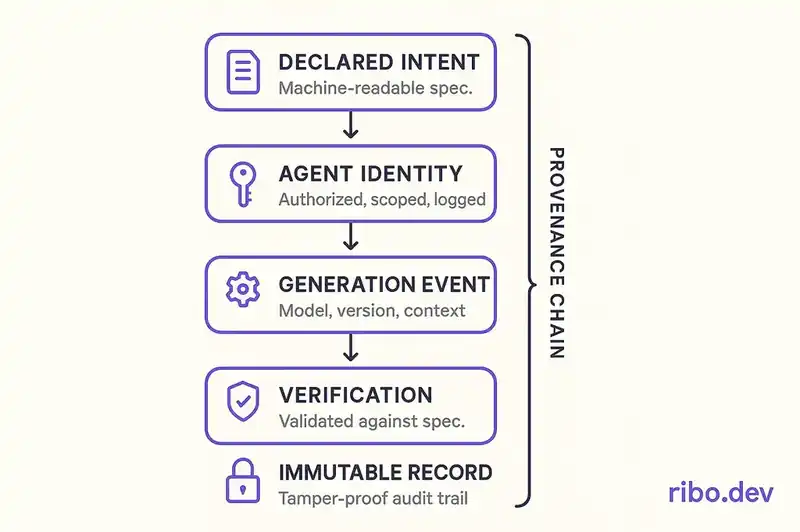

Strip away the legal language and the regulatory requirements ask for something simple: demonstrate that your organization had a process, followed it, and can prove it.

For AI-generated code, that means every artifact needs to answer questions that today go unasked. Not just "does this code work?" but "what was this code supposed to do, what agent produced it, what constraints governed that agent, and how was the output verified against the stated intent?"

This is a provenance problem. Provenance requires identity -- not just for humans, but for the software artifacts themselves.

Declared intent before generation. Before an AI agent writes code, a machine-readable specification of what it should produce needs to exist. Not a Jira ticket. Not a Slack message. A structured artifact describing expected behavior, constraints, architectural boundaries, and risk classification. This is what the auditor will ask for.
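What might such a structured artifact look like? The sketch below is one possible shape, assuming a small set of fields drawn from the paragraph above (behavior, constraints, boundaries, risk class). The class name `GenerationSpec` and every field name are hypothetical. The digest gives later records a stable value to point at.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class GenerationSpec:
    """Hypothetical machine-readable spec an agent would generate against."""

    spec_id: str
    expected_behavior: str
    constraints: tuple[str, ...]
    allowed_paths: tuple[str, ...]  # architectural boundary, as glob patterns
    risk_level: str  # "low", "medium", or "high"

    def __post_init__(self):
        if self.risk_level not in {"low", "medium", "high"}:
            raise ValueError(f"unknown risk level: {self.risk_level}")

    def digest(self) -> str:
        """SHA-256 over a canonical JSON form, so equal specs hash equally."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    spec = GenerationSpec(
        spec_id="SPEC-1",
        expected_behavior="Accrue interest daily using decimal arithmetic",
        constraints=("no floating-point money", "no new dependencies"),
        allowed_paths=("src/billing/*",),
        risk_level="high",
    )
    print(spec.digest())
```

The class is frozen on purpose: a specification that can be edited after generation is not much use as evidence of what the agent was asked to do.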

Agent identity and authorization. The NIST NCCoE concept paper identifies four focus areas: identification (distinguishing AI agents from human users), authorization (applying standards like OAuth 2.0 to agent actions), access delegation (linking user identities to AI agents for accountability), and logging (linking specific agent actions to their non-human entity). Federal guidance is coalescing around this framework now.
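The delegation and authorization ideas can be illustrated with a toy check. This is not OAuth 2.0 and not anything the NIST paper prescribes. It is a minimal sketch, assuming a hypothetical `AgentGrant` record that ties an agent to the human who delegated authority, a set of repositories, and a risk ceiling.

```python
from dataclasses import dataclass

RISK_ORDER = {"low": 0, "medium": 1, "high": 2}


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical record of what one agent may do, and on whose behalf."""

    agent_id: str
    delegated_by: str  # the accountable human
    repositories: frozenset[str]
    max_risk: str


def authorize(
    grant: AgentGrant, agent_id: str, repository: str, risk_level: str
) -> tuple[bool, str]:
    """Return (allowed, reason) so every decision can be logged with its rationale."""
    if grant.agent_id != agent_id:
        return False, "grant belongs to a different agent"
    if repository not in grant.repositories:
        return False, f"{repository} is outside the grant's scope"
    if RISK_ORDER[risk_level] > RISK_ORDER[grant.max_risk]:
        return False, f"{risk_level} risk exceeds the grant ceiling of {grant.max_risk}"
    return True, f"authorized on behalf of {grant.delegated_by}"


if __name__ == "__main__":
    grant = AgentGrant(
        agent_id="agent-7",
        delegated_by="jane@example.com",
        repositories=frozenset({"billing-service"}),
        max_risk="medium",
    )
    print(authorize(grant, "agent-7", "billing-service", "high"))
```

Returning the reason alongside the decision matters for the logging focus area: a denied action with no recorded rationale is hard to explain to an auditor later.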

Continuous verification against specification. CI/CD pipelines need to verify not just that code passes tests, but that it conforms to its declared specification. "The tests pass" is behavioral. "The code does what the specification says it should do" is contractual. Auditors care about the second one.
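A pipeline gate that checks conformance rather than just green tests could start as small as the sketch below. It assumes a hypothetical spec dictionary with two fields, `allowed_paths` (glob patterns) and `acceptance_tests` (a mapping from each stated criterion to the test that demonstrates it). Real specification formats would carry far more than this.

```python
from fnmatch import fnmatch


def verify_against_spec(
    spec: dict, changed_files: list[str], test_results: dict[str, bool]
) -> list[str]:
    """Return a list of violations; an empty list means the change conforms."""
    violations = []
    # Boundary check: the change may only touch paths the spec declared.
    for path in changed_files:
        if not any(fnmatch(path, pattern) for pattern in spec["allowed_paths"]):
            violations.append(f"{path} is outside the spec's declared boundaries")
    # Traceability check: every criterion needs a test that ran and passed.
    for criterion, test_name in spec["acceptance_tests"].items():
        if test_name not in test_results:
            violations.append(f"criterion '{criterion}' has no recorded test run")
        elif not test_results[test_name]:
            violations.append(f"criterion '{criterion}' failed ({test_name})")
    return violations


if __name__ == "__main__":
    spec = {
        "allowed_paths": ["src/billing/*"],
        "acceptance_tests": {
            "daily compounding": "test_daily_compounding",
            "banker's rounding": "test_rounding",
        },
    }
    changed = ["src/billing/interest.py", "infra/deploy.yaml"]
    results = {"test_daily_compounding": True}
    for violation in verify_against_spec(spec, changed, results):
        print(violation)
```

Note what this catches that a plain test run would not: the suite above is entirely green, yet the change touched a file outside its boundary and left one stated criterion with no evidence at all.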

Immutable audit trails. Every generation event, agent action, approval, and modification needs to produce a record that can't be retroactively altered. The record links the output to the specification, the agent, the human who authorized the agent, and the verification result.
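A hash chain is one common way to make after-the-fact edits detectable. The sketch below is a minimal in-memory version, with hypothetical event fields. It is tamper-evident rather than truly immutable: actual immutability would also need write-once storage or an external anchor for the latest hash, which is out of scope here.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _hash_entry(prev_hash: str, event: dict) -> str:
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log: list[dict], event: dict) -> None:
    """Append an event whose hash covers both its content and its predecessor."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _hash_entry(prev_hash, event)})


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _hash_entry(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log: list[dict] = []
    # Field names below are illustrative, not a proposed schema.
    append_event(log, {"type": "generation", "agent": "agent-7", "spec": "SPEC-1"})
    append_event(log, {"type": "approval", "approved_by": "jane@example.com"})
    print(verify_chain(log))
    log[1]["event"]["approved_by"] = "someone-else@example.com"
    print(verify_chain(log))
```

Each entry carries the identifiers the paragraph above asks for (agent, specification, approver), so verifying the chain also verifies that those links have not been rewritten.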

Risk classification at the artifact level. A UI color change and a financial calculation carry different regulatory exposure. Governance infrastructure needs to classify artifacts by risk level and apply proportionate controls. High-risk artifacts get stricter specifications, more rigorous verification, deeper audit trails.
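Proportionate controls can be expressed as a lookup from risk level to requirements. The path rules and control values below are invented for illustration; every organization would derive its own from its regulatory exposure. The one design choice worth noting is that a change inherits the strictest level among the files it touches.

```python
from fnmatch import fnmatch

RISK_ORDER = {"low": 0, "medium": 1, "high": 2}

# Hypothetical path rules, first match wins.
RISK_RULES = [
    ("src/billing/*", "high"),
    ("src/auth/*", "high"),
    ("src/api/*", "medium"),
    ("src/ui/*", "low"),
]

# Hypothetical controls per level.
CONTROLS = {
    "low": {"required_reviewers": 1, "spec_required": False, "audit_record": "summary"},
    "medium": {"required_reviewers": 1, "spec_required": True, "audit_record": "full"},
    "high": {"required_reviewers": 2, "spec_required": True, "audit_record": "full"},
}


def classify(path: str, default: str = "medium") -> str:
    """Classify one file; unknown paths fall back to a cautious default."""
    for pattern, level in RISK_RULES:
        if fnmatch(path, pattern):
            return level
    return default


def controls_for(paths: list[str]) -> dict:
    """A change set takes the strictest risk level of any file in it."""
    levels = [classify(p) for p in paths]
    strictest = max(levels, key=RISK_ORDER.__getitem__, default="low")
    return {"risk_level": strictest, **CONTROLS[strictest]}


if __name__ == "__main__":
    print(controls_for(["src/ui/theme.css"]))
    print(controls_for(["src/ui/theme.css", "src/billing/interest.py"]))
```

Defaulting unknown paths to medium rather than low is deliberate: an unclassified file should cost a little extra review, not slip through with the lightest controls.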

This is what a declarative identity layer does: it makes software self-describing, so that "what is this, who made it, and why?" has a machine-readable answer at every layer of the stack.

Compliance readiness checklist

If you lead an engineering team, run through this inventory. Be honest with yourself.

Provenance and attribution

- Can you identify which code in your codebase was AI-generated versus human-authored?

- Do you record the model, version, and prompt context for AI-generated code?

- Can you trace a deployed artifact back to a specific generation event?

Specification and intent

- Do AI coding agents work against structured specifications, or free-form prompts?

- Is there a machine-readable link between a specification and the code it produced?

- Can you demonstrate that generated code was verified against its specification?

Agent governance

- Do you have a policy governing which AI agents can generate production code?

- Are agent permissions scoped to specific repositories, languages, or risk levels?

- Is there an audit trail of agent actions distinct from human actions?

Risk classification

- Are code artifacts classified by regulatory risk level?

- Do high-risk artifacts receive different governance than low-risk artifacts?

- Can you demonstrate proportionate controls to an auditor?

Verification and review

- Does your review process distinguish between AI-generated and human-authored code?

- Do reviewers attest to specification compliance, not just code quality?

- Are security scans calibrated for known AI code vulnerability patterns?

Audit readiness

- Can you produce, on demand, the full provenance chain for any deployed artifact?

- Do your compliance frameworks (SOC 2, ISO 27001, etc.) account for AI-generated artifacts?

- Have you tested your audit trail by running a mock audit?

If you answered "no" to most of these, you have company. Most engineering organizations are shipping AI-generated code into environments that will face regulatory scrutiny, without the infrastructure to withstand it.

The trajectory is set

The compliance landscape is not settled. The Colorado AG has not filed an enforcement action yet. The EU is still designating competent authorities. NIST is in the concept paper phase. The OWASP taxonomy is brand new. Microsoft's governance toolkit shipped days ago.

But the trajectory is clear. Every regulatory body, standards organization, and platform vendor is arriving at the same place: autonomous AI systems need identity, authorization, specification compliance, and audit trails. The specifics will vary by jurisdiction and framework. The direction is set.

The organizations that navigate this well will not be the ones that scramble after the first enforcement action. They will be the ones that built governance into the development workflow while the requirements were still crystallizing -- while there was time to do it right instead of bolting it on after the fact.

The audit is coming. The question is whether you will have answers.

We're building the tooling that makes compliance declarable and provable, not reconstructed under deadline.