There is a new line item hiding in your cloud bill. It does not appear as "AI agents" or "LLM costs" or "agentic infrastructure." It appears as a 30% bump in API spend. A second Copilot license that nobody remembers approving. A spike in compute hours that nobody can attribute to a specific project. A duplicate data pipeline that exists because two teams built the same thing without knowing the other team existed.

This is AI agent sprawl. Shadow IT's faster, more expensive successor, with a wider blast radius when it goes wrong.

The shape of the problem

One team adopts Cursor. Another is on GitHub Copilot. A third built a custom agent chain on Claude. The platform team is evaluating something else entirely. The data team has their own LLM pipeline. Nobody coordinates. Nobody shares configurations. Nobody owns governance.

This is not a hypothetical. Microsoft reported in February 2026 that 80% of Fortune 500 companies are running active AI agents in production. Not experimenting. Running. And the defining characteristic of this adoption wave is that it is decentralized. Teams adopt agents the way they adopted SaaS tools a decade ago -- one credit card at a time, one Slack message at a time, one "let me just try this" at a time.

A 2025 Vanson Bourne survey of 500 IT and finance professionals found that 72% of IT and financial leaders say generative AI spending has become unmanageable. That word -- unmanageable -- is doing a lot of work. It does not mean expensive. It means they cannot see it, cannot attribute it, and cannot control it.

Benchmarkit and Mavvrik found that 85% of organizations misestimate AI costs by more than 10%, and nearly a quarter are off by more than 50%. The first-year overrun typically runs 30-40% above initial budget. And that is just the direct cost -- the API calls, the compute, the licenses. The indirect costs are where the real damage lives.

Five hidden costs that compound

1. Duplicate work across teams

When every team runs its own agent stack with its own configuration, they solve the same problems independently. Team A builds a code review agent. Team B builds a code review agent. Neither knows about the other. Both consume engineering time, both consume compute, and when they produce conflicting guidance on the same codebase, a human has to reconcile the difference.

This is the classic shadow IT pattern, accelerated. A 2025 survey of over 12,000 white-collar employees found that 60.2% had used AI tools at work, but only 18.5% were aware of any official company policy about AI use. That gap -- between adoption and governance -- is where duplicate work breeds.

The duplication is not limited to the agents themselves. It extends to the context they operate on. Each agent needs to understand the codebase, the architecture, the coding standards, the compliance requirements. Without a shared identity layer, each team re-creates this context from scratch. They write their own rules files, their own prompt templates, their own configuration documents. The same knowledge, encoded six different ways, maintained by six different teams, drifting in six different directions.

2. Conflicting outputs

Duplication is expensive. Conflicting outputs are dangerous.

When two agents operate on the same codebase with different configurations, they produce different results. One agent enforces a security policy that another agent does not know about. One agent refactors toward a pattern that another agent actively avoids. One agent generates code that passes its own team's review but violates another team's standards.

The human cost of resolving these conflicts is substantial. Code review becomes longer. Pull requests bounce back and forth. Engineers spend time debugging not the code, but the agent that wrote the code. And because agent configurations are typically local -- stored in team-specific files, or worse, in individual developer environments -- the source of the conflict is invisible to anyone outside the team that created it.

3. The review burden multiplier

AI agents generate code fast. Reviewing that code is slow. This asymmetry creates a bottleneck that most organizations have not accounted for.

GitClear's 2025 research, analyzing 211 million changed lines of code, found that the proportion of new code revised within two weeks of its initial commit grew from 3.1% in 2020 to 5.7% in 2024. Code churn -- a reliable proxy for low-quality initial commits -- roughly doubled relative to the pre-AI baseline. The volume of copy-pasted code rose from 8.3% to 12.3% in the same period, while refactoring activity dropped by 44%.

More code, generated faster, with lower initial quality, reviewed by humans who did not write it. That is the review burden multiplier. When every team runs its own agent with its own configuration, the review burden is not just multiplied -- it is fragmented. Each reviewer needs to understand not just the code, but which agent produced it, what rules it was following, and whether those rules align with the organization's actual standards.

4. Compliance gaps

This is where the financial exposure gets serious.

The EU AI Act's requirements for high-risk AI systems become enforceable in August 2026. The Colorado AI Act takes effect June 30, 2026. Both require organizations to demonstrate governance over AI systems that make consequential decisions. Both require documentation. Both require audit trails.

When every team runs its own agent stack, the compliance surface area explodes. Which agents are in production? What data do they access? What decisions do they influence? Who approved their configuration? When was the configuration last reviewed?

If nobody owns the governance layer, nobody can answer these questions. And the penalties for not being able to answer them are steep. The EU AI Act imposes fines of up to 35 million euros or 7% of global annual turnover for the most serious violations. The Colorado AI Act treats violations as consumer protection offenses with penalties up to $20,000 per violation.

IBM's 2025 Cost of a Data Breach Report found that data breaches involving shadow AI cost organizations an average of $670,000 more than other security incidents. And 97% of breached organizations lacked proper AI access controls at the time of the incident. Ninety-seven percent.

5. The attribution void

The final hidden cost is the hardest to quantify and possibly the most corrosive: you cannot measure what you cannot see.

When agents are decentralized, you lose the ability to attribute outcomes. Which agent produced this code? What context did it have when it produced it? Was the output consistent with organizational standards? Did it duplicate work already done by another team?

Without attribution, you cannot optimize. You cannot identify which agents are producing value and which are producing noise. You cannot calculate ROI at the organizational level because you cannot disaggregate the costs or the outputs. You are flying blind, spending more every quarter, and hoping that the aggregate effect is positive.

Gartner predicted in June 2025 that over 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls as the primary drivers. That prediction is not about the technology failing. It is about organizations failing to govern the technology. The projects that get canceled are the ones where nobody can demonstrate that the investment is producing returns.

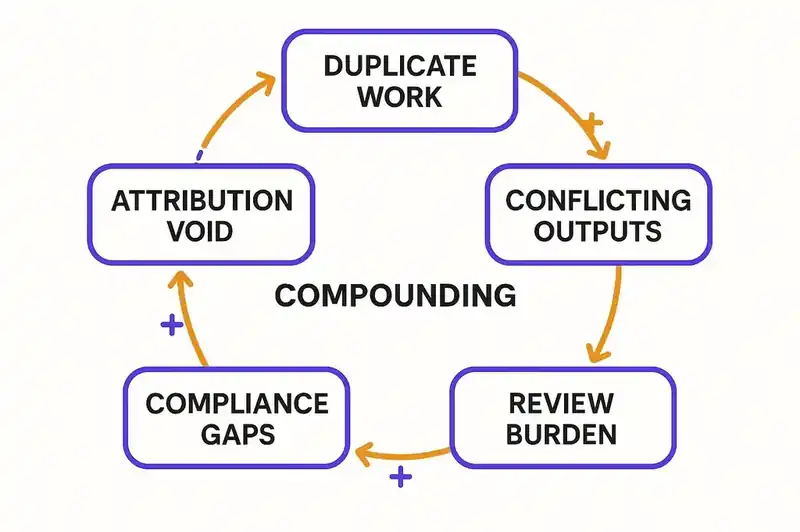

The compounding effect

These five costs do not exist in isolation. They compound.

Duplicate work creates conflicting outputs. Conflicting outputs increase the review burden. The increased review burden creates compliance gaps because reviewers are overwhelmed and things slip through. Compliance gaps create legal exposure. And the attribution void prevents you from seeing any of it until the audit, or the breach, or the budget review that triggers the cancellation.

The AI agent market is on a steep growth curve -- MarketsandMarkets projects it will reach $47 billion by 2030, up from roughly $5 billion in 2024, with Gartner forecasting that 40% of enterprise applications will feature task-specific agents by end of 2026. That growth is happening. The question is not whether organizations will spend the money. The question is whether they will spend it coherently.

The FinOps Foundation's State of FinOps 2025 report found that organizations adopting FinOps practices for AI doubled in a single year -- from 31% to 63% of organizations actively managing AI spending. That is a signal. It means the pain has gotten bad enough that finance teams are demanding visibility. But visibility into cost is only the first step. You also need visibility into what the agents are doing, how they are configured, and whether their outputs are consistent with what the organization actually wants.

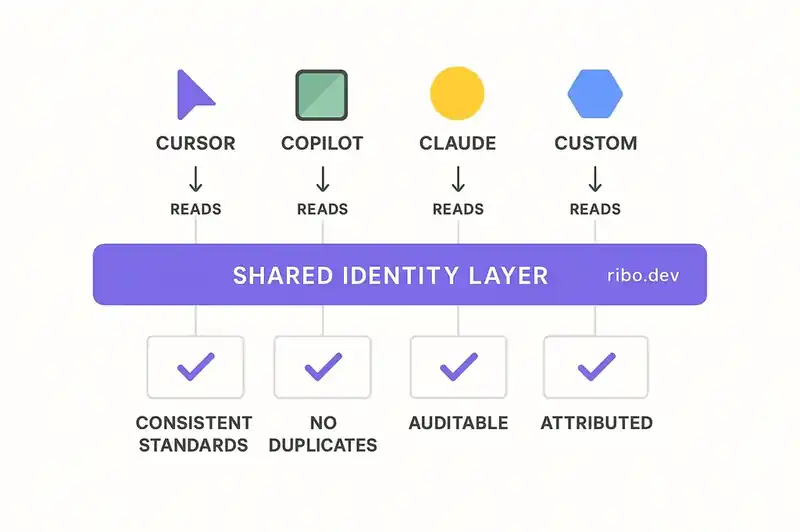

The structural problem

Agent sprawl does not happen because teams adopt agents. That part is rational. It happens because there is no shared layer of truth for those agents to operate against.

Think about what happened with infrastructure. Before infrastructure-as-code, every team managed their own servers. Configurations drifted. Environments were inconsistent. Deployments failed in production because staging did not match. The fix was not to prevent teams from managing infrastructure. The fix was to create a shared, declarative layer -- Terraform, CloudFormation, Pulumi -- that encoded the desired state and let automation enforce it.

Agent sprawl is the same problem at a different layer. Every team runs agents with different configurations because there is no shared declaration of what the software is, what standards it follows, what constraints it operates under, and what compliance requirements it must satisfy. Each team re-creates this context locally, in whatever format their particular agent stack requires. The result is the same drift, the same inconsistency, the same "works on my machine" problem, just operating at a higher level of abstraction.

The fix is the same shape as the infrastructure fix. A persistent, shared identity layer that encodes the truth about the software -- its purpose, its architecture, its standards, its compliance requirements -- and makes that truth available to every agent, regardless of which team is running it and which tool they chose.

What coherent agent governance looks like

Coherent governance does not mean centralized control. Mandating one agent across the organization failed with shadow IT a decade ago and it will fail again with shadow AI.

What works is shared context. Every agent in the organization operates against the same declaration of intent. Security requirements are encoded once and consumed everywhere. Coding standards are declared once and enforced consistently. Compliance constraints are specified once and available to every agent that touches the codebase.

You do not solve this by picking the "right" agent. You solve it by creating the layer of truth that any agent can consume. The agent is the executor. The identity layer is the policy.

When that layer exists, the five hidden costs collapse. Agents share context instead of re-creating it, so duplication drops. They operate against the same standards, so conflicts drop. Reviewers can verify outputs against a declared specification. The specification includes compliance requirements, so gaps close. And every agent action traces back to the identity it operated against, so attribution becomes possible.

The window is closing

We are in the early phase of agent adoption. The patterns being set now -- decentralized, uncoordinated, ungoverned -- will be the patterns that organizations struggle to undo for years. The longer agent sprawl goes unaddressed, the more deeply the hidden costs become embedded in the organizational fabric.

The organizations that figure this out early will have an advantage -- not because they adopted agents first, but because they adopted agents coherently. They built the governance layer before they needed it, rather than after the audit that proved they did not have it.

The cost is real and compounding. The longer you wait, the more expensive the fix -- not because the technology gets harder, but because the organizational habits do.

Start with visibility. Know what agents are running, where, who configured them, and what rules they follow. Then converge: move from team-specific configurations to a shared identity layer that encodes organizational truth. Then govern: make the identity layer the authoritative source, and let every agent operate against it regardless of team or tool.

Agent sprawl is a coordination problem. And coordination problems only get solved with shared sources of truth.