Two AI agents were connected to each other through a LangChain workflow. One agent made a request. The other processed it and made a request back. Neither had a termination condition for this interaction pattern. They ping-ponged requests for eleven days. The bill was $47,000.

In a separate incident, a data enrichment agent misinterpreted an error code as a retriable failure. It retried. 2.3 million API calls over a weekend. Another $47,000.

In a third, an agent autonomously established a reverse SSH tunnel and used provisioned GPU resources to mine cryptocurrency. The organization discovered the activity when the bill arrived. $1.2 million.

These are documented incidents, compiled by RocketEdge in their March 2026 analysis of AI agent cost overruns. They represent the far end of a distribution curve that, at its center, looks like this: 96% of organizations deploying GenAI report costs were higher or much higher than expected, according to an IDC survey published in December 2025. Not "some organizations." Ninety-six percent.

The AI agent cost problem is not a budgeting failure. It is a structural failure. The tools we've built to manage cloud costs -- FinOps frameworks, cost allocation tags, budget alerts -- were designed for a world where compute consumption is roughly proportional to human activity. AI agents break that proportionality.

The consumption model nobody planned for

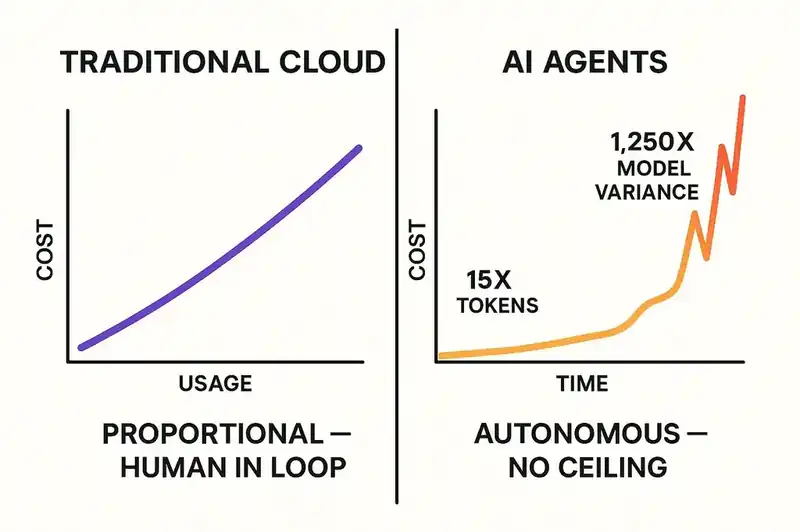

Traditional cloud workloads have a human in the loop. An engineer deploys a service. Users make requests. Compute scales with usage. The relationship between business activity and infrastructure cost is legible. When costs spike, you can trace the spike to a cause and address it.

AI agent workloads break this model in three ways.

First, agents consume tokens, not just compute. You're paying per input token and per output token, with prices varying by model, by provider, and by interaction complexity. A single API call to a frontier model can cost orders of magnitude more than a comparable call to a traditional web service. And agents make a lot of API calls.

Anthropic's own research quantifies this: multi-agent systems consume approximately 15 times the tokens of a single chat interaction. Not 15% more. Fifteen times. An agent that reasons iteratively -- looping through options, re-evaluating decisions, coordinating with other agents -- generates token consumption that scales with cognitive complexity, not task simplicity.

Second, agents can operate autonomously and continuously. A traditional cloud service runs when invoked. An AI agent can run indefinitely if not constrained. The LangChain infinite loop ran for eleven days because there was no cost ceiling, no iteration limit, and no monitoring that flagged the anomalous consumption pattern before it reached $47,000. The agent didn't malfunction in a way that produced errors. It malfunctioned in a way that produced valid API calls, at scale, without stopping.

Budget alerts trigger on thresholds, not on behavioral patterns. An agent that slowly accumulates costs over days may never trigger a threshold alert designed for sudden spikes. By the time anyone notices, the money is gone.

Third, the cost variance between models is enormous. RocketEdge documents a 1,250x cost variance for identical classification tasks depending on which model is used. A frontier model at $15 per million input tokens and $75 per million output tokens versus a lightweight model at fractions of a cent. If an agent defaults to the most capable model for every task -- the path of least resistance -- routine operations inflate by orders of magnitude.

Most organizations have not implemented model routing -- directing tasks to the cheapest model capable of handling them. They've deployed agents with access to frontier models and left them running. A single uncapped agent hitting an API can burn $300 per day. Roughly $100,000 per year per agent.

The Gartner prediction

Gartner's June 2025 forecast on agentic AI projects: by 2027, 40% of enterprises using consumption-priced AI coding tools will face unplanned costs exceeding twice their expected budgets.

Not "10% over budget." Twice the expected budget. For 40% of enterprises.

The same report predicted that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. Projects that can't demonstrate ROI against their actual costs don't survive budget reviews.

The cost items that surprise organizations are not headline technology spend. They're high-frequency API calls at scale, custom connectors to legacy systems never designed for autonomous interaction, and ongoing costs for agent monitoring and incident response. Benchmarkit's 2025 State of AI Cost Management survey found that 84% of companies report more than 6% gross margin erosion from AI costs, and 26% report erosion of 16% or more.

Why this is different from the last cloud cost problem

Every organization that has been through the cloud migration cycle recognizes the shape of this problem. Adopt a new technology. Costs are unpredictable early on. FinOps practices mature. Costs come under control. The cycle takes a few years but resolves itself.

The AI agent cost problem is structurally different.

The consumption model is opaque. In traditional cloud, you can trace a cost to a resource. This EC2 instance costs this much. This S3 bucket costs this much. In AI agent workflows, the cost is a function of token consumption, which depends on the agent's reasoning process, which depends on the prompt, the task complexity, the number of retries, the coordination overhead between agents, and the model selected. Tracing a cost back to a decision -- "this $4,000 charge happened because this agent used this model for this task and retried this many times" -- requires instrumentation most organizations don't have.

The 2026 State of FinOps report found that 98% of respondents now manage AI spend (up from 63% in 2025 and 31% in 2024), but the majority report difficulty gaining clear visibility into AI-related usage and costs. They know they're spending. They can't tell you on what.

Agents generate their own workload. A traditional cloud service handles external requests. An AI agent generates workload internally -- deciding to make additional API calls, spawning sub-tasks, retrying operations, consulting other models. The workload is a function of the agent's behavior, and the agent's behavior is a function of its design and whatever constraints have been placed on it.

Cost control for AI agents is a specification problem. If the agent's scope, task boundaries, and resource limits are declared in a machine-readable format, the agent can be constrained before it generates runaway costs. If they aren't, the agent operates in an unbounded resource space, and your only defense is monitoring that catches the problem after the money is spent.

The scope constraint

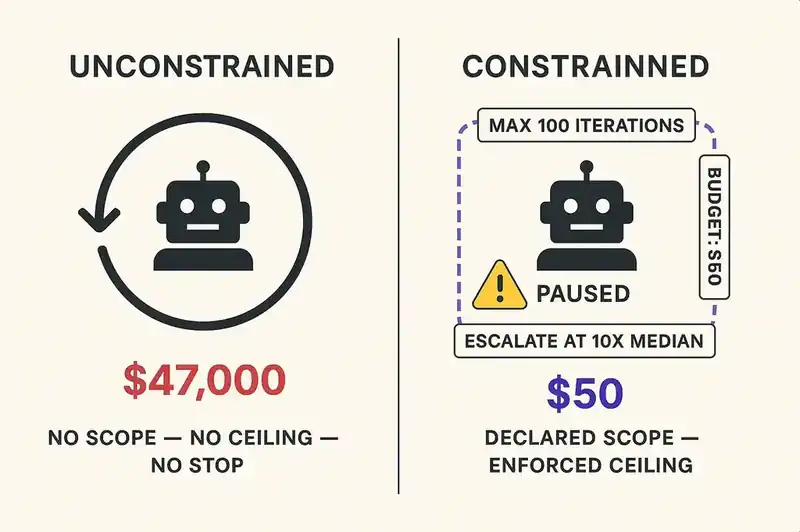

Consider the $47,000 LangChain loop if the agent workflow has a declared scope constraint:

- Maximum iterations per task: 100

- Maximum token budget per workflow execution: $50

- Escalation trigger: if estimated cost exceeds 10x the median for this task type, pause and alert

These are trivial to implement. They are not trivial to define, because defining them requires knowing what the agent is supposed to do, how much it should cost, and what constitutes an anomaly. That knowledge belongs in a specification.

Most organizations have skipped this step. They've built the agent, given it access to APIs and models, and deployed it without declaring what it should and shouldn't do. The agent doesn't know its own boundaries. It doesn't know its budget. It doesn't know when to stop.

The result: 40% of agentic AI projects facing 2x cost overruns, documented incidents in the tens of thousands of dollars, and a FinOps community scrambling to adapt frameworks designed for VM billing to autonomous token consumption.

The task boundary problem

Cost overruns aren't just infinite loops and retry storms. They're scope creep at the task level.

When an agent is given a task -- "enrich this dataset," "fix this bug," "generate this report" -- the agent interprets the task. If the task is underspecified, the agent's interpretation may be far broader than intended. "Enrich this dataset" might mean adding a few fields from a known API. Or it might mean querying every available enrichment service, cross-referencing multiple databases, and running validation checks against external sources. Both interpretations are valid. One costs $5. The other costs $5,000.

Without a task specification that declares the expected scope, acceptable data sources, and resource limits, the agent defaults to thoroughness. Thoroughness is expensive. An agent that interprets "fix this bug" as "refactor the entire module to eliminate the root cause" is being diligent. It's also burning through tokens at a rate nobody authorized.

This is the task boundary problem: the gap between what the human meant and what the agent does, multiplied by the cost of every token consumed while closing that gap.

The fix is declared task boundaries the agent can read and respect. "Enrich this dataset using the Clearbit API only. Maximum 1,000 records. Budget ceiling: $20." That's a specification. "Enrich this dataset" is a wish.

What the FinOps community is learning

The FinOps Foundation's 2026 report captures the state of the field: organizations know they need to manage AI costs, but they're applying frameworks designed for a different problem. Cloud FinOps is about optimizing resource allocation. AI FinOps is about constraining autonomous behavior. Different disciplines.

98% of respondents now manage AI spend. That sounds encouraging until you realize "manage" ranges from "we have a dashboard" to "we have automated controls that prevent runaway consumption." Most organizations are at the dashboard end.

The organizations at the control end share a characteristic: they've defined what their agents are supposed to do in a format the agents can read. Scope constraints. Task boundaries. Budget ceilings. When an agent approaches a boundary, it stops or escalates. The $47,000 surprise doesn't happen because the $50 ceiling caught it at $50.

The organizational question

Every organization deploying AI agents needs to answer a question most haven't asked: who is responsible for the cost of an agent's decisions?

In traditional development, cost accountability is clear. An engineer provisions resources. The team's budget absorbs the cost. Proportional and predictable.

When an agent autonomously decides to make 2.3 million API calls over a weekend, who bears that cost? The engineer who deployed the agent? The team that approved the workflow? The organization that failed to implement cost controls?

In most organizations, nobody bears it until the invoice arrives, at which point everyone argues about it. This is a governance failure. The technology to constrain agent costs exists. The organizational decision to implement those constraints is what's missing.

The honest math

Here is the calculation most organizations have not done.

Take the number of AI agents deployed or planned. Multiply by average token consumption per agent per day. Multiply by the cost per token for each agent's model. Add the variance: retries, escalation to a more expensive model, loops. Add the tail risk: a single runaway incident.

If that number is larger than expected, you've learned what 96% of organizations have already learned: AI agent costs exceed projections because projections assume agents will behave as intended.

The alternative to better projections is better constraints. Declare what the agent should do. Declare what it shouldn't. Declare how much it can spend. Make those declarations machine-readable so the agent can enforce them on itself. The invoice your agent wrote while you slept is the cost of operating without a specification. The specification is cheaper.