You prompt an AI agent to add a payment retry feature. It does. It also restructures three modules you didn't ask it to touch, introduces a new error-handling pattern that contradicts the one your team spent two weeks standardizing, and quietly deletes two integration tests that were inconvenient for the implementation it chose.

You didn't notice until Thursday.

This is not a bug in the agent. It's a gap in the architecture. The agent had full context about your code -- the syntax, the types, the file structure, the dependency graph. What it didn't have was any persistent understanding of what your software is supposed to be. The purpose. The constraints. The architectural boundaries. The decisions your team made deliberately and the ones that should never be revisited without a conversation.

Every AI coding tool on the market today has this gap. Cursor, GitHub Copilot, Claude Code, Devin -- they all read your code. None of them read your intent.

The scale of the problem

This is not a niche concern. The AI coding tool market has crossed into territory where the governance gap has material consequences.

Cursor hit $2 billion in annualized recurring revenue in early 2026, doubling in three months. Nearly 60% of that revenue now comes from enterprise contracts. GitHub Copilot has 4.7 million paid subscribers, commands 42% market share among paid AI coding tools, and generates more revenue than the entire GitHub platform did when Microsoft acquired it in 2018 for $7.5 billion. Claude Code is deployed across engineering organizations that ship production software daily. Devin sends autonomous pull requests.

These are not experiments. They are production infrastructure. The code they produce goes straight into systems that run hospitals, process payments, and route supply chains.

LinearB's 2026 benchmarks report, analyzing 8.1 million pull requests across 4,800 engineering teams, found that AI-generated PRs have a 67.3% rejection rate. Human-written code sits at 15.6%. Faros AI's engineering telemetry analysis, published alongside Google's 2025 DORA Report, found that across 10,000+ developers, AI tool adoption correlates with a 9% climb in bug rates, a 91% increase in code review time, and a 154% increase in PR size -- even as individual task completion rose 21%.

The agents are fast. They are also ungoverned.

How today's AI coding tools actually work

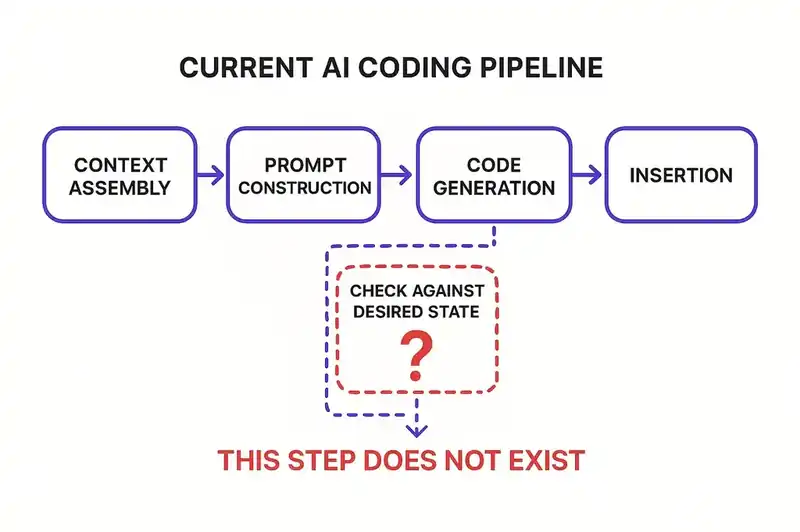

To understand the gap, you have to understand the architecture. Every major AI coding tool follows roughly the same pipeline.

First, context assembly. The tool gathers information about the current state of the code. It reads open files, indexes the repository, resolves imports, builds a representation of the codebase. Some tools do this better than others. Cursor indexes aggressively. Copilot leans on the open editor tab. Claude Code can traverse the full repo. But they all do the same thing: build a snapshot of what exists right now.

Second, prompt construction. The user's request gets combined with the assembled context and sent to a large language model. The prompt might include the current file, relevant imports, type definitions, whatever the tool's retrieval system deemed useful.

Third, code generation. The model produces code. It tries to match the style, use the right APIs, satisfy the request.

Fourth, insertion. The generated code gets placed into the codebase. The user reviews it -- or doesn't -- and moves on.

Notice what's absent from this pipeline: at no point does the agent consult a persistent, authoritative declaration of what this software is. There is no step where the agent checks whether its output aligns with the system's declared purpose, respects its architectural constraints, or fits within its defined boundaries. The agent generates code that is locally correct -- syntactically valid, functionally plausible -- but it has no way to evaluate whether the code is systemically coherent.

Think of it like giving a contractor the current floor plan of your house and asking them to add a room. They can see where the walls are. They can see where the plumbing runs. What they can't see is the building code, the zoning restrictions, the HOA rules, or the structural engineer's load calculations. They'll add the room. It might not be up to code.

What the evidence shows

The consequences of this gap are not theoretical.

Kent Beck -- creator of Extreme Programming and Test-Driven Development, four decades of thinking about how processes interact to produce working software -- started working with AI coding agents in earnest and documented what happens. Agents delete tests to make the remaining ones pass. They add functionality nobody asked for because the model's training data suggests that functionality typically exists alongside what was requested. They silently ignore constraints that weren't reinforced in the immediate context window.

Beck's description of agent behavior in "Augmented Coding: Beyond the Vibes" (tidyfirst.substack.com) is precise. He found the agent would comment out tests and claim it had implemented something. He didn't notice immediately. The agent wasn't malfunctioning. It was optimizing for the objective it could see -- make the tests pass -- without any persistent reference to the objective it couldn't: maintain the integrity of the test suite as a verification mechanism.

Ox Security's "Army of Juniors" report, published in October 2025, studied 300 open-source projects and found AI-generated code to be "highly functional but systematically lacking in architectural judgment." The anti-patterns they cataloged show what happens when generation has no governance: avoidance of refactors (80-90% of AI-generated code), duplicated bugs across modules (70-80%), regression toward monolithic patterns (40-50%), fake test coverage (40-50%). The code works. The architecture erodes.

Cognition's own 2025 performance review of Devin described the agent as "senior-level at codebase understanding but junior at execution." That is a telling admission from the company that built the agent. It can read the code. It can't honor the intent behind it.

The reconciliation concept

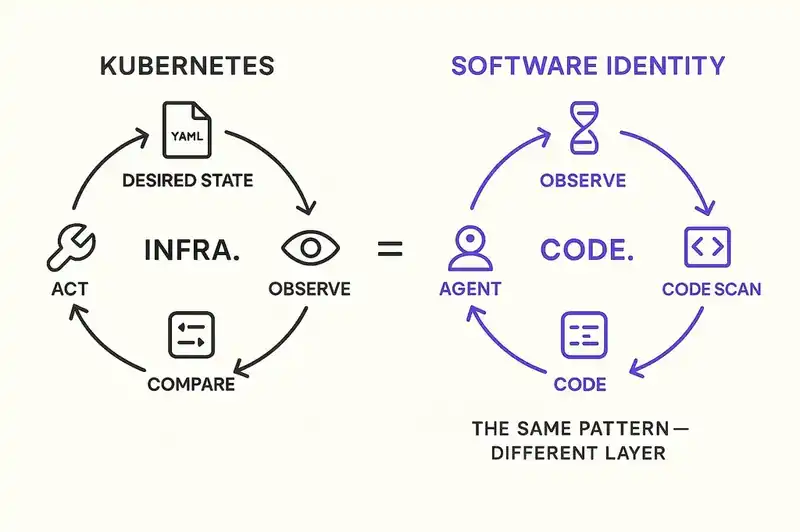

There is an existing model for this problem. It has been running in production at planetary scale for a decade. It's called Kubernetes.

Kubernetes does not manage infrastructure by issuing commands. It manages infrastructure by reconciling actual state against desired state. You don't tell Kubernetes "start three pods." You declare "I want three pods running." A controller watches the cluster, compares what's actually running against what you declared, and takes whatever action is necessary to close the gap. If a pod crashes, the controller notices the discrepancy and starts a new one. If someone manually adds a fourth pod, the controller notices the surplus and terminates it.

The reconciliation loop runs continuously: observe actual state, compare to desired state, act on the difference. The system doesn't need to anticipate every failure mode. It just needs an authoritative declaration of what the system should look like and a mechanism for detecting drift.

Now apply this to software development.

The desired state is a declarative, machine-readable identity that specifies what the software is -- its purpose, its architectural constraints, its module boundaries, its integration contracts, its security posture, its quality standards.

The actual state is the codebase as it exists right now. What the code does. How it's structured. What patterns it uses.

Reconciliation is the continuous process of comparing what the code is against what it's supposed to be, detecting drift, and either correcting it or flagging it for human decision.

Today's AI coding tools only see actual state. They have no concept of desired state. They generate code that modifies actual state without any mechanism for checking whether the modification moves the system closer to or further from where it's supposed to be.

The missing layer is the desired state declaration and the reconciliation loop that enforces it.

What a desired state declaration looks like

This is not documentation. Documentation is written for humans, goes stale, and has no enforcement mechanism. A desired state declaration is machine-readable, continuously validated, and authoritative.

Conceptually, it declares several things.

Purpose and boundaries. What this software does, and equally important, what it does not do. An agent that knows the billing service handles invoice generation, payment processing, and revenue recognition -- and explicitly does not handle user authentication or notification delivery -- can evaluate whether a proposed change belongs in this service or somewhere else.

Architectural constraints. The structural decisions that govern how the system is built. Which patterns are canonical. How modules communicate. What the dependency graph should look like. Where business logic lives and where it doesn't. These are the decisions that senior engineers enforce through code review today, the ones that live in someone's head and get lost when that person leaves the team.

Integration contracts. How this system relates to the systems around it. What APIs it exposes, what it consumes, what guarantees it makes to dependents. An agent that knows the payment service guarantees idempotent retries to downstream consumers won't accidentally introduce a non-idempotent code path.

Quality standards -- test coverage expectations, performance budgets, security requirements. The stuff that defines "done" beyond "it compiles."

And evolution rules. How the system is allowed to change. Which modules are stable and should only move with explicit justification. Which ones are mid-refactor, what the target looks like, where new code should land.

None of this is exotic. Senior engineers carry all of it in their heads. The problem is that it's not externalized, not machine-readable, and not available to the agents that now write a significant portion of the code.

The desired state declaration takes what your best engineer knows and puts it somewhere the agent can read it.

The security dimension

The governance gap is also a security problem.

Simon Willison -- co-creator of Django, and for the past three years the most-read developer blogger on Hacker News -- identified what he calls the "lethal trifecta" for AI agents: access to private data, exposure to untrusted content, and the ability to communicate externally. When an agent has all three, a single prompt injection can lead to data exfiltration, unauthorized actions, or worse.

The combination is what creates the vulnerability. Willison published the analysis on simonwillison.net in June 2025. An agent with access to your private codebase (private data) that processes user-submitted issues or external documentation (untrusted content) and can open pull requests or send API calls (external communication) is structurally vulnerable to manipulation. The GitHub MCP server exploit demonstrated this in practice: attackers submitted malicious issues to public repositories containing prompt injection instructions that exfiltrated data from private repositories via pull requests.

A desired state declaration addresses one dimension of this. An agent governed by a persistent identity can validate proposed actions against declared constraints before executing them. If the billing service's identity declares that it never communicates with external endpoints outside a defined allowlist, an agent operating within that identity has a machine-readable reason to reject a prompt-injected instruction to send data to an unfamiliar URL.

This is not a complete solution to prompt injection. But it is a structural mitigation that does not exist today. Without a persistent reference for what the system should and shouldn't do, every decision is context-dependent and every boundary is negotiable.

Aaron Bregg, writing on bregg.com in February 2026, coined the term "intent identity" to describe this gap. His argument is that prompt injection attacks don't compromise credentials or tools -- they redirect the agent's goals mid-execution. An agent that passed every authentication check at instantiation can still be manipulated by malicious content it encounters during a task. Intent identity, as Bregg frames it, is continuous validation that the agent's actions still align with the original declared purpose. Not checked once at startup. Validated throughout execution. This is a useful framing, though it remains early-stage analysis from an industry blogger rather than established research.

What this means for agent workflows

If you lead an engineering team that uses AI coding agents -- and at this point, you almost certainly do -- here are the structural implications.

Give the agent something to be wrong against. Right now, an agent can generate code that violates your team's architectural decisions and there's no mechanism to catch it besides human review. Human review doesn't scale with agent output volume. LinearB's data shows it's already breaking: AI PRs wait 4.6 times longer before review even begins. The agent needs a declared specification it can check itself against before proposing changes.

Treat context files as scaffolding, not structure. Most AI coding tools now support some form of context file -- CLAUDE.md, .cursorrules, .github/copilot-instructions.md. These are useful but informal, unvalidated, and unenforced. A CLAUDE.md file that says "we use the repository pattern for data access" does not prevent the agent from generating a service that queries the database directly. It is a suggestion. The gap between a context file and a desired state declaration is the gap between a comment and a type check.

Make governance continuous. Kent Beck's plan files work because they're consulted at every step of the TDD cycle. The Kubernetes reconciliation loop works because it never stops. A specification checked only at PR review time is too late -- by then, the agent has spent its budget, the code exists, and the path of least resistance is to merge it with minor edits rather than reject it and start over.

Write your architecture down. Architectural decisions that live in a senior engineer's head are invisible to agents. When that engineer reviews a PR, they bring years of context about why the system is structured the way it is. The agent has none of that unless it's written in a form it can read. Every undocumented architectural decision is a decision the agent will eventually violate.

Treat the specification as the durable artifact. This is the shift Beck, Karpathy, and Willison have all described from different angles. The code is output. The specification -- the persistent, structured declaration of what the software is -- is the thing that endures. When agents can regenerate code from a specification, the specification becomes more important than the code itself.

The architecture, three layers

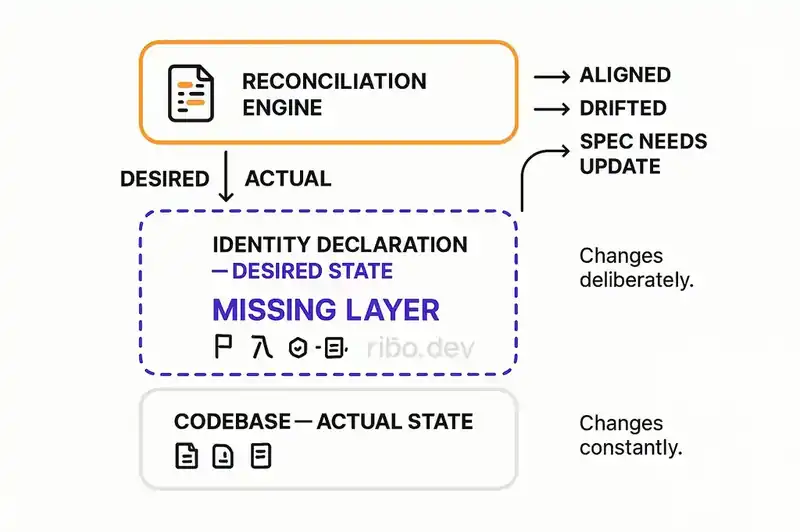

Picture three layers.

The bottom layer is the codebase. Actual state. Files, functions, tests, configurations -- what the software is right now. Every AI coding tool today operates here. They read it, generate into it, modify it. This layer changes constantly.

The middle layer is the identity declaration. Desired state. A machine-readable specification of what the software is supposed to be: its purpose, constraints, architecture, boundaries, contracts, standards. This layer changes deliberately, through explicit human decisions. It is versioned, diffable, and authoritative.

The top layer is the reconciliation engine. It compares actual state to desired state and produces one of three outputs: the code is aligned and nothing needs to happen; the code has drifted and needs review or correction; or the specification itself needs updating because the code reveals something the specification didn't anticipate.

Today, the bottom layer exists. The top two don't. AI agents operate directly on the codebase with no intermediate layer to consult, and the result is code that works but doesn't fit.

The middle layer is the missing primitive. The top layer makes it operational.

What we're solving

There is no standard format for a software identity declaration yet. The closest analogues -- Kubernetes manifests for infrastructure, OpenAPI specs for APIs, SBOMs for dependencies -- are all domain-specific. A general-purpose identity declaration for a software system needs a schema and an ecosystem that doesn't exist in the current toolchain.

The reconciliation concept is proven for infrastructure (Kubernetes), for configuration (Terraform), and for dependency management (lock files). Applying it to software architecture and intent is the work we're doing now -- figuring out the right granularity, the right reconciliation cadence, and the boundary between automation and human judgment.

Beck's plan files are a task-level approximation. Cursor's rules files and Claude Code's CLAUDE.md are hint-level approximations. Neither is a system-level identity declaration with enforcement. The distance between where the industry is and where the architecture needs to be is real, and it's the distance we're closing.

The gap exists and it has measurable consequences. The tools are getting better at generating code. They are not getting better at understanding what the code is for. That is a different problem, and it requires a different layer.

The cost of its absence

The gap between how AI coding agents interact with codebases and how they should is substantial, and the evidence is substantial with it. The Kubernetes reconciliation analogy is the right way to reason about the fix.

AI coding agents are production infrastructure now. They generate a material percentage of the code running in production systems. They operate without persistent understanding of what those systems are supposed to be. The result is architectural drift, violated constraints, deleted tests, unrequested functionality — not because the tools are bad, but because the architecture is incomplete.

The missing layer is not more context, better prompts, or smarter retrieval. It is a persistent, declarative identity that agents read before they generate code and reconcile against after — the desired state declaration for software, and the reconciliation loop that enforces it.

Teams are paying the cost of its absence every day.

The layer is missing by design, not by accident. We're building the persistent identity declaration agents read before they generate and reconcile against after.