Bill Eisenhauer built tic-tac-toe twice. Once in Go, once in Python. Both implementations passed the same 47 invariant tests. The Go version used error returns; the Python version used exceptions. The HTTP frameworks differed. The file structures differed. But the system behaved identically, because both implementations expressed the same specification: the same intent documents, the same OpenAPI contracts, the same executable evaluations.

His conclusion, published in January 2026, is as clean as the demonstration: "The code is different. The identity is identical." Specifications are the durable artifact. Code is a rendering -- generated, validated, and regenerated as needed.

This is a compelling direction. The question we keep coming back to is: what does the infrastructure look like that makes it work beyond a single bounded context?

The intellectual scaffolding

Eisenhauer didn't arrive at this in a vacuum. His framework synthesizes decades of work: Gojko Adzic's Specification by Example, Dan North's behavior-driven development, Eric Evans' ubiquitous language, Bertrand Meyer's design by contract, and Chad Fowler's more recent claim that "evaluations are the real codebase." These aren't fringe ideas. They're established patterns that, until now, lacked an economic forcing function.

That forcing function is here. Code generation is approaching zero marginal cost. The METR study published in July 2025 -- a randomized controlled trial with 16 experienced open-source developers across 246 tasks -- found something counterintuitive: developers were 19% slower with AI tools on codebases they personally maintained. But the study's authors are careful to note that it measured experienced developers working on familiar code. It says little about greenfield generation, unfamiliar codebases, or the kind of work agents increasingly do, and in those settings the economics tilt hard toward generation.

When generation is cheap and human attention is expensive, you invest cognitive effort in specifying what the system is rather than manually constructing how it runs. Eisenhauer's truth layer -- intent documents written in binding English, API contracts as OpenAPI specifications, executable evaluations that define correctness -- is the clearest articulation of this shift we've seen.
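
To make "executable evaluations that define correctness" concrete, here is a minimal Python sketch in the spirit of the tic-tac-toe demo. It is not Eisenhauer's code: the `Game` interface, the evaluation names, and `SimpleGame` are hypothetical. The point is that the evaluations only touch the interface, so any rendering that satisfies it is judged by the same checks (a Go rendering would need an adapter, for example over HTTP).

```python
from typing import Callable, Dict, List, Optional, Protocol


class Game(Protocol):
    """Hypothetical interface that every rendering of the game must satisfy."""

    def board(self) -> List[Optional[str]]: ...

    def play(self, cell: int) -> None:
        """Place the current player's mark; raise ValueError on an illegal move."""
        ...


def eval_players_alternate(new_game: Callable[[], Game]) -> bool:
    # Invariant: X moves first, then marks alternate X, O, X, ...
    g = new_game()
    for i, cell in enumerate([0, 4, 8]):
        g.play(cell)
        if g.board()[cell] != ("X" if i % 2 == 0 else "O"):
            return False
    return True


def eval_occupied_cell_rejected(new_game: Callable[[], Game]) -> bool:
    # Invariant: playing an occupied cell is rejected and leaves the board unchanged
    g = new_game()
    g.play(0)
    before = list(g.board())
    try:
        g.play(0)
    except ValueError:
        return g.board() == before
    return False


EVALUATIONS = [eval_players_alternate, eval_occupied_cell_rejected]


def run_evaluations(new_game: Callable[[], Game]) -> Dict[str, bool]:
    """Run every evaluation against fresh games from the given factory."""
    return {ev.__name__: ev(new_game) for ev in EVALUATIONS}


class SimpleGame:
    """One possible rendering; the evaluations never look at its internals."""

    def __init__(self) -> None:
        self._cells: List[Optional[str]] = [None] * 9
        self._turn = "X"

    def board(self) -> List[Optional[str]]:
        return list(self._cells)  # a copy, so callers cannot mutate state

    def play(self, cell: int) -> None:
        if not 0 <= cell < 9 or self._cells[cell] is not None:
            raise ValueError(f"illegal move: {cell}")
        self._cells[cell] = self._turn
        self._turn = "O" if self._turn == "X" else "X"


print(run_evaluations(SimpleGame))
```

A deliberately broken rendering, say one that always plays X and never rejects a move, fails both checks without any change to the evaluations themselves.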

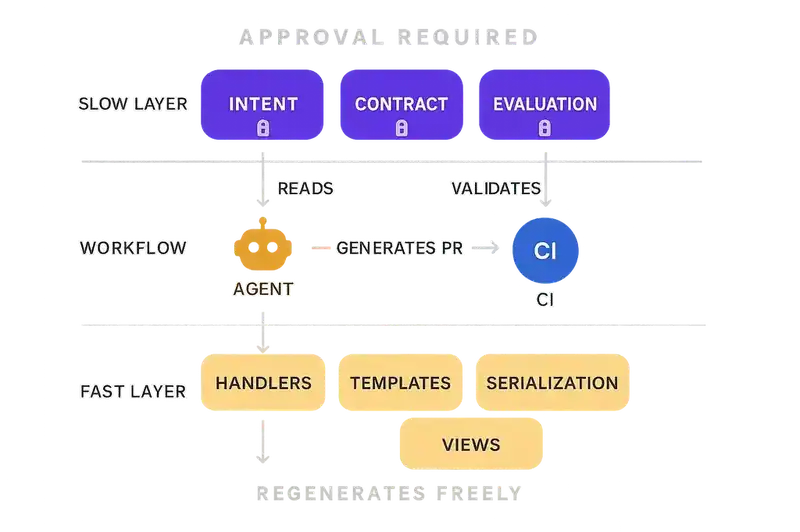

He also borrows Stewart Brand's pace layers concept, originally developed in The Clock of the Long Now (1999) to describe how civilizations change at different speeds. Applied to software: system identity is the slow layer (requiring ceremonial approval for changes), while HTTP handlers, serialization logic, and view templates are the fast layer (regenerated freely). The governance principle -- "never smuggle slow-layer changes inside fast-layer refactoring" -- is sound.
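
One way to make the "never smuggle" rule mechanical is to classify every changed path by layer and flag commits that touch the slow layer without slow-layer review, or that mix both layers in one change. A sketch, assuming a hypothetical directory layout (`specs/` for truth artifacts; `handlers/`, `serializers/`, `templates/` for generated code). The patterns in `LAYER_RULES` are illustrative, not part of Eisenhauer's framework.

```python
from fnmatch import fnmatchcase
from typing import Dict, List

# Hypothetical layout: truth artifacts under specs/, regenerable code elsewhere.
LAYER_RULES: Dict[str, List[str]] = {
    "slow": ["specs/intent/*", "specs/contracts/*", "specs/evals/*"],
    "fast": ["handlers/*", "serializers/*", "templates/*"],
}


def classify(path: str) -> str:
    """Return the pace layer a changed file belongs to, or 'unclassified'."""
    for layer, patterns in LAYER_RULES.items():
        if any(fnmatchcase(path, p) for p in patterns):
            return layer
    return "unclassified"


def check_commit(changed_paths: List[str], labeled_slow: bool) -> List[str]:
    """Return governance problems for one commit; an empty list means it is clean."""
    slow = sorted(p for p in changed_paths if classify(p) == "slow")
    fast = sorted(p for p in changed_paths if classify(p) == "fast")
    problems = []
    if slow and not labeled_slow:
        problems.append(f"slow-layer change without slow-layer review: {slow}")
    if slow and fast:
        problems.append("mixed commit: split slow-layer and fast-layer changes")
    return problems
```

`fnmatchcase` is used instead of `fnmatch` so matching behaves the same on every OS. Note that `*` also matches `/`, so each pattern covers nested directories.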

From proof-of-concept to production

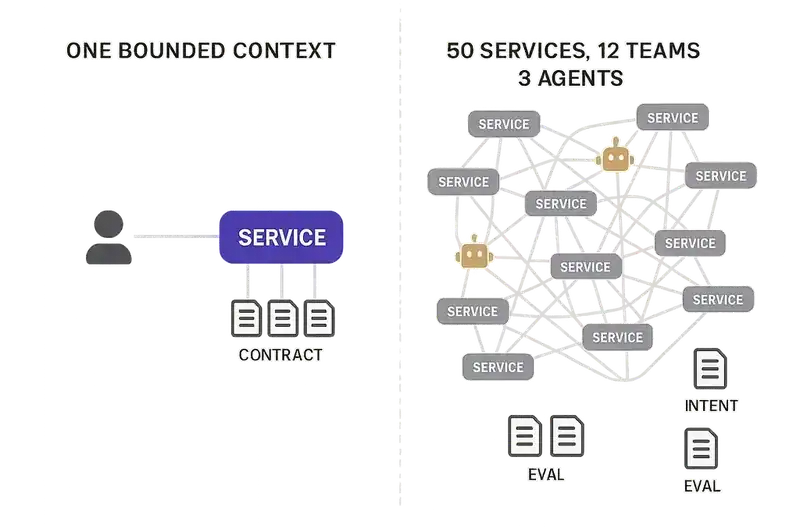

The tic-tac-toe demo works because one person holds the entire system in their head.

One bounded context. One set of invariants. One developer writing both implementations, maintaining both truth documents, running both evaluation suites. The truth layer is enforceable because the enforcer is also the author, the reviewer, and the operator.

Now scale that.

A Series B fintech with 50 microservices, 12 engineering teams, three autonomous coding agents generating pull requests around the clock. The truth layer for a payments service alone might include 200 invariants -- behavioral contracts for transaction limits, idempotency guarantees, regulatory holds, currency conversion rules, audit trail requirements. Multiply that across every service boundary.
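
Here is what two of those behavioral contracts, idempotency and transaction limits, might look like as executable evaluations. The sketch assumes a hypothetical `charge(idempotency_key, amount_cents)` operation and uses an in-memory stand-in (`InMemoryPayments`) so it runs on its own. A real evaluation would exercise the deployed service through its API contract, and a real idempotency check would also verify that a reused key carries the same request payload.

```python
import uuid
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class InMemoryPayments:
    """Toy stand-in for a payments service; all names here are hypothetical."""

    limit_cents: int = 1_000_000
    ledger: List[int] = field(default_factory=list)
    _by_key: Dict[str, str] = field(default_factory=dict)

    def charge(self, idempotency_key: str, amount_cents: int) -> str:
        if amount_cents <= 0 or amount_cents > self.limit_cents:
            raise ValueError("amount outside transaction limit")
        if idempotency_key in self._by_key:  # replay: return the original charge
            return self._by_key[idempotency_key]
        charge_id = str(uuid.uuid4())
        self.ledger.append(amount_cents)
        self._by_key[idempotency_key] = charge_id
        return charge_id


def eval_idempotent_charge(svc: InMemoryPayments) -> bool:
    """Invariant: replaying a request with the same key charges exactly once."""
    start = len(svc.ledger)
    first = svc.charge("key-123", 2_500)
    second = svc.charge("key-123", 2_500)
    return first == second and len(svc.ledger) == start + 1


def eval_limit_enforced(svc: InMemoryPayments) -> bool:
    """Invariant: a charge above the limit is rejected and never recorded."""
    start = len(svc.ledger)
    try:
        svc.charge("key-over-limit", svc.limit_cents + 1)
    except ValueError:
        return len(svc.ledger) == start
    return False
```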

Eisenhauer acknowledges the scaling dimension: "Complexity increases the quantity of invariants, not the structure of enforcement." He's right about the structure. And the quantity question is where the infrastructure conversation starts -- where the invariants live, who's allowed to change them, how agents discover them before generating code.

This is where intent documents in a /specs folder become documentation with aspirations. Not because the documents are wrong -- they might be perfectly crafted. But because documents don't enforce themselves. An agent spinning up a new service at 2 AM doesn't browse a folder of markdown files looking for relevant constraints. A team refactoring a shared library doesn't automatically know which downstream contracts their changes violate. A junior engineer modifying a threshold value doesn't realize they're making a slow-layer change inside what looks like a fast-layer commit.

The research backs this up. Piskala's survey of spec-driven development, or SDD (arxiv 2602.00180, January 2026), found that "empirical studies are nascent" and that keeping specs aligned with implementations "requires discipline and tooling support." Thoughtworks saw the same thing in enterprise SDD adoption: specification rot -- specs drifting from reality as code changes pile up -- was a central concern, right alongside non-deterministic generation and over-formalization. The pattern is consistent: specs that aren't enforced become specs that are abandoned.

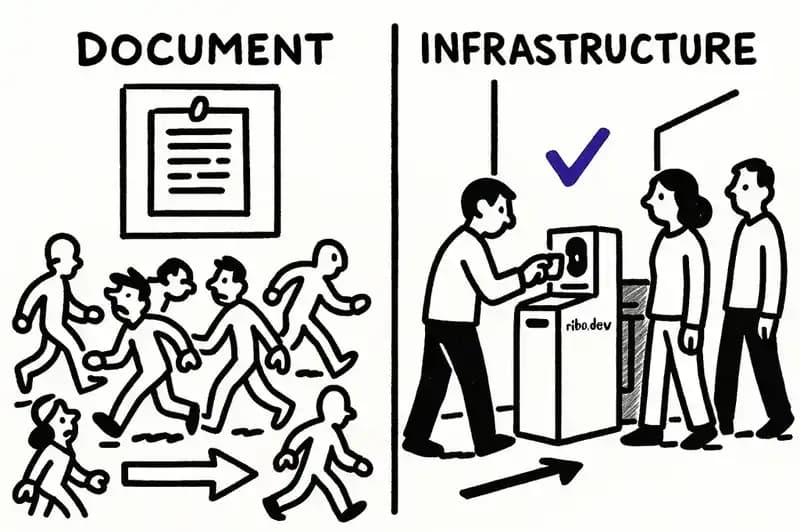

Documents drift. Infrastructure enforces.

If the truth layer is a set of documents, you need a culture of discipline. Everyone must know the documents exist, read them before coding, update them after changes, and catch violations in code review. This works in small teams. It fails predictably at scale, for the same reason that coding standards documented in a wiki fail at scale: the enforcement mechanism is human attention, and human attention is the scarcest resource in the system.

If the truth layer is infrastructure, you need a different architecture. Truth artifacts -- intents, contracts, evaluations -- become machine-readable, version-controlled, queryable objects that live alongside the code but aren't subordinate to it. CI pipelines validate against them. Agents read them before generating. Violations fail the build, not the code review.

This is the difference between "we wrote our contracts down" and "our contracts are enforced on every commit, by every actor, whether human or machine."

Eisenhauer's pace layers concept extends naturally here. The slow layer needs infrastructure that makes changes operationally slow -- different approval paths, audit trails, propagation alerts. The fast layer needs infrastructure that lets agents regenerate implementations freely, as long as evaluations still pass.
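
As one illustration of "different approval paths", the sketch below gates merges by layer. Fast-layer changes merge when evaluations pass; slow-layer changes also need two distinct human approvals from the artifact's owners, and every slow-layer decision leaves an audit record. The threshold of two, the `Approval` type, and the in-memory `AUDIT_LOG` are assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List, Set


@dataclass(frozen=True)
class Approval:
    reviewer: str
    is_agent: bool  # True for approvals issued by an automated agent


# Assumed policy: number of human owner approvals required per layer.
REQUIRED_OWNER_APPROVALS: Dict[str, int] = {"fast": 0, "slow": 2}

# Stand-in for an append-only audit store.
AUDIT_LOG: List[dict] = []


def may_merge(layer: str, evals_passed: bool, approvals: List[Approval], owners: Set[str]) -> bool:
    """Decide whether a change may merge under its layer's approval path."""
    # Distinct human reviewers who own the affected artifacts; agents never count
    human_owners = {a.reviewer for a in approvals if not a.is_agent and a.reviewer in owners}
    allowed = evals_passed and len(human_owners) >= REQUIRED_OWNER_APPROVALS[layer]
    if layer == "slow":
        # Every slow-layer decision is recorded, whether it was allowed or not
        AUDIT_LOG.append(
            {
                "at": datetime.now(timezone.utc).isoformat(),
                "approvers": sorted(human_owners),
                "allowed": allowed,
            }
        )
    return allowed
```

Excluding agent approvals from the slow-layer count is the central design choice here: agents can regenerate the fast layer freely, but they cannot approve a change to system identity on their own.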

Without that infrastructure, pace layers are a mental model. With it, they're a governance system.

What this infrastructure looks like

Software DNA is one implementation of this idea. It stores typed, versioned, machine-readable artifacts that define what a system is, what it must do, what constraints it honors, and how it relates to other systems.

The artifact types map to Eisenhauer's truth layer components. Intent artifacts correspond to his intent documents, but they're structured, queryable, and parseable by agents rather than written in prose that requires human interpretation. (Roman Weis raised exactly this concern on Eisenhauer's original post: natural language specifications carry ambiguity that even lawyers struggle to eliminate. Machine-readable intent artifacts reduce that surface area, though they don't eliminate it.) Contract artifacts extend his API contracts beyond OpenAPI to include event schemas, shared type definitions, and cross-service dependency declarations -- covering the boundaries Eisenhauer acknowledges as challenging. Evaluation artifacts are where the alignment is closest: evaluations define correctness and serve as the slow layer's enforcement mechanism. The difference at scale is that evaluations need to be discoverable across repositories, not just within a single project.
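
The sketch below shows typed truth artifacts and a registry that can answer "which evaluations apply to this service?" across repositories. It is not Software DNA's data model or API: `Kind`, `Artifact`, and `Registry` are hypothetical names, and a real registry would sit behind a service rather than an in-memory list.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Iterable, List, Optional


class Kind(Enum):
    INTENT = "intent"
    CONTRACT = "contract"
    EVALUATION = "evaluation"


@dataclass(frozen=True)
class Artifact:
    """Hypothetical typed truth artifact; field names are illustrative."""

    artifact_id: str
    kind: Kind
    version: int
    repo: str  # where the artifact lives, which may differ from the service's repo
    service: str  # the service whose behavior the artifact governs
    body: str


class Registry:
    """In-memory index spanning repositories, so artifacts are discoverable
    from outside the project that happens to store them."""

    def __init__(self, artifacts: Iterable[Artifact]) -> None:
        self._items = list(artifacts)

    def query(self, service: str, kind: Optional[Kind] = None) -> List[Artifact]:
        """Latest version of each matching artifact, ordered by id."""
        latest: Dict[str, Artifact] = {}
        for a in self._items:
            if a.service == service and (kind is None or a.kind == kind):
                current = latest.get(a.artifact_id)
                if current is None or a.version > current.version:
                    latest[a.artifact_id] = a
        return sorted(latest.values(), key=lambda a: a.artifact_id)
```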

There's also the governance problem that pace layers imply but don't resolve: who is allowed to change a slow-layer artifact? In a system with 12 teams and autonomous agents, that question needs an answer more robust than "requires explicit review." It needs access controls, audit trails, and change propagation that notifies downstream consumers when an upstream contract shifts.
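
Change propagation reduces to a graph walk: given declared "who consumes whom" edges, find every service downstream of the one whose contract changed. A minimal sketch with a hypothetical dependency map. Whether to notify only direct consumers or the full transitive set is a policy choice; this version returns the transitive set.

```python
from collections import deque
from typing import Deque, Dict, List, Set

# Hypothetical declarations: service -> services that consume its contracts.
CONSUMERS: Dict[str, Set[str]] = {
    "currency-rates": {"payments"},
    "payments": {"checkout", "ledger"},
    "ledger": {"reporting"},
    "reporting": set(),
}


def affected_consumers(changed: str, consumers: Dict[str, Set[str]]) -> List[str]:
    """Breadth-first walk of consumer edges; returns every service to notify."""
    seen: Set[str] = set()
    queue: Deque[str] = deque([changed])
    while queue:
        node = queue.popleft()
        for consumer in consumers.get(node, set()):
            # Skip the changed service itself and anything already visited (handles cycles)
            if consumer != changed and consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return sorted(seen)
```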

What Monday morning looks like

If you're running a platform team:

Audit your implicit truth layer. It already exists. It's in the Slack threads where engineers explain why a service works the way it does. It's in the PR descriptions that capture constraints nobody wrote down. It's in the tribal knowledge that your most senior engineer carries and that evaporates when they leave. (Avelino et al. studied the bus factor across 133 popular GitHub projects and found that 65% had a bus factor of two or fewer. Inside companies, where code is private and context is held more closely, the concentration is likely worse.)

Formalize intent as machine-readable artifacts. Start with one service -- the one that causes the most cross-team friction. Write down what it is, not how it's built. What invariants does it honor? What contracts does it expose? What evaluations define "correct"?
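
A formalized intent artifact for that one service might look like the JSON below, paired with a validator that rejects artifacts missing a section or declaring an invariant with no evaluation behind it. JSON, the section names, and the file paths are assumptions for illustration; YAML or any other structured format would serve equally well.

```python
import json
from typing import List

REQUIRED_SECTIONS = ("identity", "invariants", "contracts", "evaluations")

# Hypothetical intent artifact: what the service is, not how it is built.
EXAMPLE = """
{
  "service": "payments",
  "identity": "Moves money between customer accounts exactly once per request.",
  "invariants": [
    {"id": "pay.idempotency", "statement": "A replayed request with the same key creates at most one charge."},
    {"id": "pay.limit", "statement": "A single charge never exceeds the configured transaction limit."}
  ],
  "contracts": ["openapi/payments.yaml"],
  "evaluations": {"pay.idempotency": "evals/test_idempotency.py", "pay.limit": "evals/test_limit.py"}
}
"""


def validate_intent(raw: str) -> List[str]:
    """Return a list of problems; an empty list means the artifact is well-formed."""
    doc = json.loads(raw)
    problems = [f"missing section: {s}" for s in REQUIRED_SECTIONS if not doc.get(s)]
    evals = doc.get("evaluations") or {}
    for inv in doc.get("invariants") or []:
        # Every invariant must be backed by at least one executable evaluation
        if inv.get("id") not in evals:
            problems.append(f"invariant without evaluation: {inv.get('id')}")
    return problems
```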

Wire validation into CI. An intent artifact that isn't checked on every commit is documentation. An intent artifact that fails the build when violated is infrastructure.
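
The CI side can be as small as a runner that executes every evaluation and turns any failure, including a crash, into a non-zero exit code. A sketch, assuming evaluations are exposed as zero-argument callables keyed by a hypothetical artifact id:

```python
from typing import Callable, Dict

Evaluation = Callable[[], bool]  # returns True when the invariant holds


def ci_gate(evaluations: Dict[str, Evaluation]) -> int:
    """Run every evaluation; return a process exit code (0 = pass, 1 = fail)."""
    failures = []
    for eval_id, check in sorted(evaluations.items()):
        try:
            ok = check()
        except Exception as exc:  # a crashing evaluation counts as a failure
            print(f"ERROR {eval_id}: {exc!r}")
            ok = False
        if not ok:
            failures.append(eval_id)
    for eval_id in failures:
        print(f"FAIL {eval_id}")
    print(f"{len(evaluations) - len(failures)}/{len(evaluations)} evaluations passed")
    return 1 if failures else 0
```

A CI job would end with `sys.exit(ci_gate(evaluations))`, so a violated invariant fails the build rather than waiting for someone to notice it in review.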

Give agents read access to truth before write access to code. If an autonomous coding agent can generate a pull request, it should first query the relevant intent and contract artifacts to understand the constraints it's operating within. Generation without context is how you get fast code that violates slow-layer contracts.
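
One way to enforce "read before write" is to have each agent-generated PR carry the list of truth artifacts the agent actually queried, and reject it when the changed paths are governed by artifacts the agent never consulted. The `GOVERNED_BY` map and the idea of logging the agent's registry queries onto the PR are assumptions for this sketch:

```python
from fnmatch import fnmatchcase
from typing import Dict, List, Set

# Hypothetical ownership map: path pattern -> truth artifacts that govern it.
GOVERNED_BY: Dict[str, Set[str]] = {
    "services/payments/*": {"pay.intent", "pay.idempotency", "openapi.payments"},
    "libs/money/*": {"money.rounding"},
}


def required_artifacts(changed_paths: List[str]) -> Set[str]:
    """Union of all artifacts governing any changed path."""
    needed: Set[str] = set()
    for path in changed_paths:
        for pattern, artifacts in GOVERNED_BY.items():
            if fnmatchcase(path, pattern):
                needed |= artifacts
    return needed


def unread_artifacts(changed_paths: List[str], consulted: Set[str]) -> List[str]:
    """Artifacts the agent should have queried before generating this change."""
    return sorted(required_artifacts(changed_paths) - consulted)
```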

What's still open

Eisenhauer's work is some of the clearest thinking in this space. The intellectual lineage is rigorous, the proof-of-concept is clean, and the core thesis -- that specifications outlast implementations and should be treated as the primary engineering artifact -- is correct.

The natural next step is making it operational. Making truth layers work at 50-service scale is an infrastructure problem, and the tooling is still maturing. Natural language ambiguity in specifications remains a real limitation -- structured artifacts reduce it, but the tension between human readability and machine parseability isn't fully resolved. Performance constraints don't fit neatly into behavioral specifications. Third-party service boundaries create zones where your truth layer meets someone else's, and that interface is inherently fragile.

These are problems worth solving, not reasons to wait. The cost of not having truth layer infrastructure compounds with every agent you deploy, every service you add, every engineer who leaves and takes context with them. Eisenhauer proposed that code isn't the asset. Now someone has to build the infrastructure that makes the actual asset durable at the scale where it matters.