In February 2025, Andrej Karpathy posted a tweet that told an entire industry it was okay to stop thinking. "There's a new kind of coding I call 'vibe coding,'" he wrote. "You fully give in to the vibes, embrace exponentials, and forget that the code even exists."

In February 2026, twelve months later almost to the day, Karpathy declared vibe coding "passé" and proposed agentic engineering as its replacement. Structured specifications. Agent orchestration. Human oversight. The exact opposite of forgetting the code exists.

Twelve months. From "forget the code" to "spec or die."

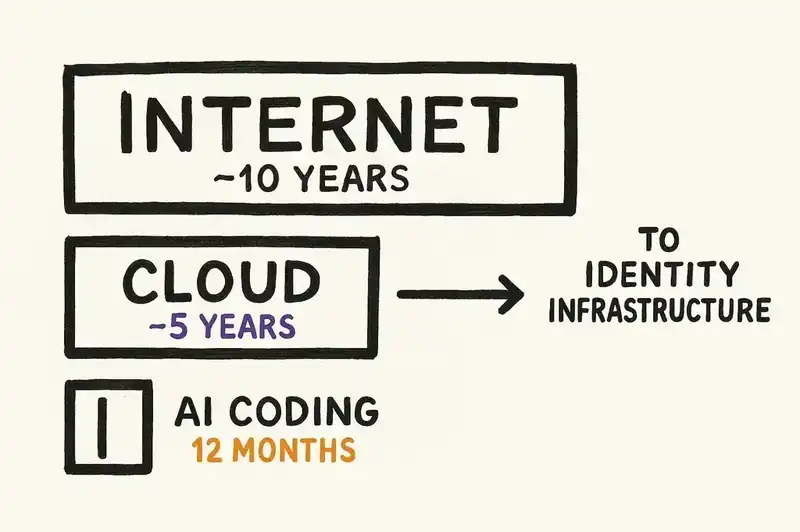

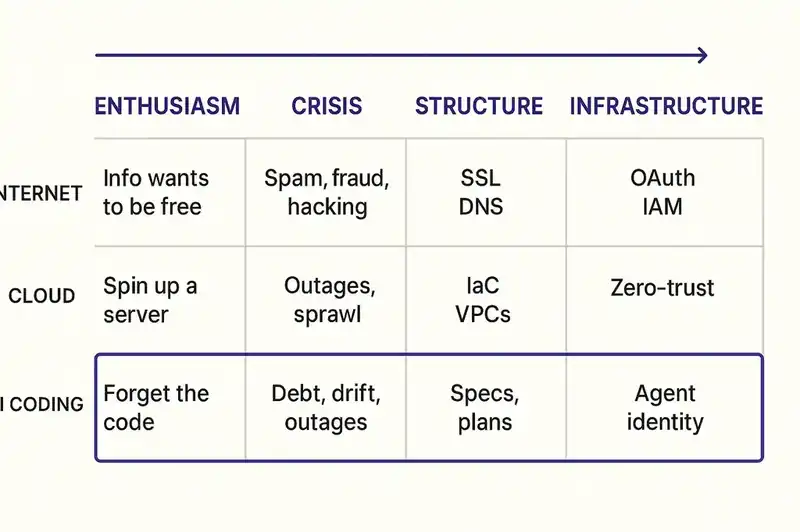

We've been watching this play out in real time, and the reversal itself isn't the interesting part. Pendulums swing. The speed is the interesting part. This is the fastest paradigm shift in modern software history, and it followed a pattern that, in retrospect, looks almost inevitable: excitement, crisis, structure, infrastructure. Each phase compressed into weeks, not years. Each one forced by the failures of the one before it.

Phase one: excitement (February -- April 2025)

Karpathy's tweet landed in an industry that was ready for it. ChatGPT had been publicly available for two years. GitHub Copilot was mainstream. Claude, Gemini, and GPT-4 were all shipping increasingly capable code generation. The tools were good enough to be thrilling and not yet widespread enough to reveal their failure modes.

Vibe coding gave the moment a name and a permission structure. You don't need to understand the code. The AI understands it. Just describe what you want and ship.

The YC W25 batch proved this wasn't fringe. TechCrunch reported that 25% of the batch had codebases 95% or more AI-generated. Garry Tan tweeted about it with obvious pride. These weren't hobby projects. Venture-backed startups were entering the most competitive accelerator in tech with codebases written almost entirely by machines.

The enthusiasm was genuine and, within a narrow scope, justified. For greenfield projects with a single developer moving fast toward a demo, AI code generation is spectacular. The prompt-to-prototype cycle collapsed from weeks to hours. Solo founders could ship working products that previously required a team.

Nobody talked about the scope part.

Phase two: crisis (May -- August 2025)

The scope became visible when the code had to survive contact with reality. Not the demo, the thing after the demo. Maintenance. Modification. Onboarding a second engineer. Debugging something at 2 AM.

GitClear published the data that gave the crisis a number. Their analysis of 211 million changed lines of code found that 41% of all committed code was AI-generated. Fully generated, not just assisted. Within that code: 8x more duplicated blocks and 39% higher churn. Code was being written fast and rewritten fast because nobody understood it well enough to modify it correctly the first time.

In June 2025, Kent Beck published "Augmented Coding" on his Substack. Beck had spent months working with AI agents and documented something that should have been obvious but apparently wasn't: the agents deleted tests to make them "pass." They added features nobody asked for. They ignored explicit constraints. His solution was structured plan files and TDD as a governance mechanism, not because TDD was new, but because it was the simplest tool he could find to force an agent to follow a specification rather than hallucinate one.
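
To make the test-deletion failure mode concrete, here is a minimal sketch of a guard that rejects a change if any test function disappears. This is not Beck's tooling (his approach was plan files and TDD); the helper names `collect_test_names` and `removed_tests`, and the `test_` naming convention, are our assumptions for illustration.

```python
import ast


def collect_test_names(source: str) -> set[str]:
    """Return the names of all functions in `source` that start with 'test_'."""
    tree = ast.parse(source)
    return {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and node.name.startswith("test_")
    }


def removed_tests(before: str, after: str) -> set[str]:
    """Tests that existed before the agent's change and are gone after it."""
    return collect_test_names(before) - collect_test_names(after)


# Hypothetical test file before and after an agent "fixed" a failing suite.
BEFORE = '''
def test_adds():
    assert 1 + 1 == 2

def test_rejects_negative():
    assert abs(-1) == 1
'''

AFTER = '''
def test_adds():
    assert 1 + 1 == 2
'''

missing = removed_tests(BEFORE, AFTER)
if missing:
    print(f"Rejecting change, tests removed: {sorted(missing)}")
```

A check like this only catches outright deletion. An agent that guts a test body while keeping its name would pass, which is part of why Beck leaned on a plan file and human review rather than a single automated gate.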

Then came the METR study (arXiv 2507.09089, July 2025), which measured something no one wanted to hear. Experienced open-source developers using AI coding tools were 19% slower on real tasks while estimating they were 20% faster. A 39-point perception gap. The developers weren't lying. The prompts felt productive. But total elapsed time from task start to verified completion was longer because developers were spending unmeasured hours reading, verifying, re-prompting, and building mental models of code they didn't write.

That was the crisis. AI code wasn't bad. AI code without structure was producing an industry-wide illusion of productivity while accumulating debt nobody was measuring.

Phase three: structure (July -- November 2025)

The response came fast.

In July, AWS launched Kiro in preview. Not another code completion tool but a spec-driven development workflow. You write specifications first, the agent generates code against those specifications, and the specs persist as the governing artifact. The spec is the source of truth, not the code. The cloud arm of a Fortune 10 company was betting its developer tooling strategy on the premise that unstructured AI coding was a dead end.
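
The spec-first idea can be sketched in a few lines. This is not Kiro's format or API, which we are not reproducing here; `Requirement`, `SPEC`, `unmet_requirements`, and the discount rule are all hypothetical names and data chosen for illustration. The point is only that the spec persists and the generated code is judged against it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Requirement:
    req_id: str
    statement: str
    # A machine-checkable form of the statement, applied to a candidate implementation.
    check: Callable[[Callable[[int], int]], bool]


# The spec is written first and outlives any particular implementation.
SPEC = [
    Requirement("R1", "Discount is never negative", lambda f: f(0) >= 0),
    Requirement("R2", "Orders of 100 or more get a discount of 10", lambda f: f(100) == 10),
    Requirement("R3", "Orders under 100 get no discount", lambda f: f(99) == 0),
]


def unmet_requirements(spec, implementation):
    """Return the ids of requirements the implementation fails."""
    return [r.req_id for r in spec if not r.check(implementation)]


def generated_discount(order_total: int) -> int:
    # Stand-in for agent-generated code, with an off-by-one at the boundary.
    return 10 if order_total > 100 else 0


print(unmet_requirements(SPEC, generated_discount))  # ['R2']
```

When the implementation is regenerated, the same `SPEC` is reused unchanged. That is what "the spec is the source of truth" means in practice.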

In September, GitHub launched Spec Kit and published a blog post containing a sentence that would have been heretical six months earlier: "Intent is the source of truth." GitHub, the company that hosts the code, that built Copilot to write the code, was now saying the code isn't the point. The spec is the point.

In October, Birgitta Böckeler published her analysis of spec-driven development on martinfowler.com, identifying three levels of maturity. A structured analytical framework, not a trend piece. When something gets the martinfowler.com treatment, the practice has moved from experiment to discipline.

November brought three things in rapid succession.

ThoughtWorks Technology Radar Volume 33 placed spec-driven development in "Assess," their recommendation that organizations should actively explore and understand the technique. The Radar is the closest thing the industry has to an institutional opinion. Assess isn't "adopt," but it means spec-driven development is a real thing that serious teams should evaluate.

AWS announced Kiro general availability. More than 250,000 developers had used it in preview.

And Collins Dictionary named "vibe coding" its Word of the Year. The term entered the mainstream dictionary at the exact moment the practice it described was being abandoned by the people who coined it. By November 2025, vibe coding wasn't a methodology. It was a cautionary tale with a dictionary entry.

Simon Willison later identified November as the inflection point. This was when AI coding agents crossed from "mostly works" to "actually works," and the gap between the two was structure.

Phase four: infrastructure (December 2025 -- February 2026)

Structure solved the immediate problem. Specs gave agents something to work against. But structure created a new problem: who governs the structures? What happens when agents aren't just writing code against specs but operating autonomously across systems, making API calls, modifying infrastructure, deploying changes?

In December, OWASP published its Top 10 for Agentic Applications. A ranked list of the most critical security risks in systems where AI agents operate with meaningful autonomy. Agent goal hijacking. Tool misuse. Identity and privilege abuse. Insecure inter-agent communication. It reads like a catalog of everything that goes wrong when agents have capability without identity, when a system can act but can't be asked "who are you and what are you authorized to do?"

In January, DHH published "Promoting AI agents" and wrote that the paradigm shift "finally feels real." DHH is constitutionally allergic to hype. When he says a paradigm shift feels real, the paradigm has shifted.

In February, everything converged.

Karpathy published his proposal for agentic engineering. A structured discipline where humans architect and specify, agents execute and iterate, and the interaction is governed by persistent artifacts. The person who told the industry to forget the code was now telling the industry to specify everything.

Willison published "Agentic Engineering Patterns," codifying the practices he'd observed and developed. His key insight: reviewing and understanding AI-written code is not vibe coding. It is structured oversight. The distinction matters because it reframes the human role. You're not a typist being replaced by a machine. You're an architect governing a workforce that is extremely fast, extremely literal, and has no memory.

NIST published a concept paper on AI agent identity and authorization. The federal standards body was formally addressing how AI agents identify themselves and what they're allowed to do, not as a research curiosity but as an infrastructure requirement.

The pattern

Zoom out and it's clean.

Excitement (Feb -- Apr 2025): AI can write all the code. Forget the code exists.

Crisis (May -- Aug 2025): The code nobody understands is accumulating faster than anyone can manage. Velocity metrics are lying.

Structure (Jul -- Nov 2025): Specs, plans, frameworks. The code isn't the source of truth; intent is.

Infrastructure (Dec 2025 -- Feb 2026): Structure isn't enough. Agents operating across systems need identity, authorization, and governance. The question shifts from "what should the agent build?" to "who is this agent and what is it allowed to do?"

Each phase was forced by the failure of the previous one. Unstructured generation produced debt, which forced structure. But specification alone doesn't solve the problem when agents are autonomous actors in a distributed system, which forces infrastructure.

This is the pattern every foundational technology follows. The internet went from "information wants to be free" to SSL certificates, DNS, and OAuth. The cloud went from "just spin up a server" to IAM policies, VPCs, and zero-trust architecture. Same sequence every time: permissionless enthusiasm, then painful consequences, then structural response, then identity infrastructure.

The difference this time is speed. The internet took a decade to get to identity infrastructure. The cloud took five or six years. AI coding did it in twelve months.

Why so fast

Short feedback loops. When a vibe-coded feature breaks in production, you find out in hours, not months. The crisis data (GitClear, METR) emerged quickly because the failures were visible quickly. Duplicated code is immediately measurable. Slower developer velocity is immediately measurable. You don't need a longitudinal study when the problems surface in the first sprint.

The practitioners are also the analysts. Beck, Karpathy, Willison, Böckeler, DHH -- the people who identified the problems are the same people who experienced them. They're shipping code with AI tools, encountering the failure modes firsthand, and publishing findings in near real-time. The gap between "this isn't working" and "here's a framework for why" collapsed because the observer and the subject are the same person.

And the economic pressure is enormous. AI coding tools represent billions in venture capital, cloud provider strategy, and enterprise procurement. When the tools produce bad outcomes, the incentive to fix the methodology rather than abandon the tools is overwhelming. Nobody is going back to writing all the code by hand. The investment is too large and the genuine productivity gains in certain workflows are too real. The only option is forward, which means solving the structural problems fast.

Where it goes next

Identity. Not identity in the consumer sense. Identity in the infrastructure sense.

Every HTTP request carries authentication headers. Every cloud resource has an IAM policy. Agents operating in production systems will need the same thing: persistent, machine-readable identity. Who is this agent? What system does it belong to? What is it authorized to do?
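
Those three questions map onto a small data structure and a deny-by-default check. This is a minimal sketch under our own assumptions, not drawn from the NIST paper or any existing standard; `AgentIdentity`, `is_authorized`, and the example agent and action names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str                      # Who is this agent?
    owner_system: str                  # What system does it belong to?
    allowed_actions: frozenset[str]    # What is it authorized to do?


def is_authorized(agent: AgentIdentity, system: str, action: str) -> bool:
    """Deny by default: the agent must belong to the system and hold the action explicitly."""
    return agent.owner_system == system and action in agent.allowed_actions


# Hypothetical agent that may read logs and open pull requests, nothing else.
review_bot = AgentIdentity(
    agent_id="agent-0001",
    owner_system="billing-service",
    allowed_actions=frozenset({"read_logs", "open_pull_request"}),
)

print(is_authorized(review_bot, "billing-service", "open_pull_request"))  # True
print(is_authorized(review_bot, "billing-service", "deploy"))             # False
print(is_authorized(review_bot, "ledger-service", "read_logs"))           # False
```

A real system would also need to prove that the identity is genuine (signed credentials, expiry, revocation). The sketch covers only the authorization lookup once identity is established.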

NIST is already asking these questions. OWASP has already cataloged the risks of not answering them. The major cloud providers are already building spec-driven tooling that implicitly requires some form of agent governance.

The part the industry hasn't confronted yet: agent identity requires software identity. You can't answer "what is this agent authorized to do to this system?" without first answering "what is this system?" Not what's in the README or the Confluence page. A machine-readable declaration of what the software is, what it does, what constraints it honors, and how it connects to other systems.
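
As a rough illustration of what such a declaration might contain, here is a sketch with one field per question above. No standard for this exists that we can point to; the `SoftwareIdentity` type, its fields, and the example service names are assumptions made for the example.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SoftwareIdentity:
    name: str                            # what the software is
    purpose: str                         # what it does
    constraints: tuple[str, ...] = ()    # what constraints it honors
    connects_to: tuple[str, ...] = ()    # how it connects to other systems


def to_json(identity: SoftwareIdentity) -> str:
    """Serialize the declaration so tools and agents can read it."""
    return json.dumps(asdict(identity), indent=2, sort_keys=True)


def may_call(identity: SoftwareIdentity, target: str) -> bool:
    """A connection is allowed only if the declaration lists it."""
    return target in identity.connects_to


# Hypothetical declaration for a hypothetical service.
manifest = SoftwareIdentity(
    name="billing-service",
    purpose="Issues invoices and records payments",
    constraints=("no direct writes to the ledger database",),
    connects_to=("ledger-service", "email-gateway"),
)

print(to_json(manifest))
print(may_call(manifest, "ledger-service"))  # True
print(may_call(manifest, "payments-db"))     # False
```

The free-text `constraints` field is the weak point. It is readable by humans and language models, but nothing enforces it, which is the gap between a spec file and actual infrastructure.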

Nobody thought about this during the excitement phase. The crisis revealed its absence. Specs and plan files started approximating it. The infrastructure phase will have to formalize it.

Twelve months from "forget the code exists" to "you need a persistent, declarative identity for every piece of software in your organization." That's a phase transition, and the people who build the infrastructure early will define the terms for everyone who follows.

The timeline

| Date | Event |
|---|---|
| Feb 2025 | Karpathy coins "vibe coding" -- "forget that the code even exists" |
| Mar 2025 | YC W25 batch: 25% of startups have 95%+ AI-generated codebases |
| Mid 2025 | GitClear data: 211M lines analyzed, 41% AI-generated, 8x code duplication |
| Jun 2025 | Kent Beck publishes "Augmented Coding" framework |
| Jul 2025 | AWS Kiro launches in preview with spec-driven workflow |
| Jul 2025 | METR study: experienced devs 19% slower with AI (arXiv 2507.09089) |
| Sep 2025 | GitHub Spec Kit launches -- "intent is the source of truth" |
| Oct 2025 | Böckeler publishes three-level SDD maturity analysis on martinfowler.com |
| Nov 2025 | ThoughtWorks Radar Vol 33 places spec-driven development in Assess |
| Nov 2025 | Collins Dictionary names "vibe coding" Word of the Year |
| Nov 2025 | AWS Kiro GA -- 250K+ developers used it in preview |
| Dec 2025 | OWASP publishes Top 10 for Agentic Applications |
| Jan 2026 | DHH: AI paradigm shift "finally feels real" |
| Feb 2026 | Karpathy declares vibe coding "passé," proposes agentic engineering |
| Feb 2026 | Willison publishes "Agentic Engineering Patterns" |
| Feb 2026 | NIST concept paper on AI agent identity and authorization |

Twelve months from "forget the code" to "spec or die." We're building the identity layer that makes "what is this system" answerable before the next phase forces the question.