Something unexpected is happening in engineering organizations that have embraced AI coding tools.

The promise was straightforward: AI generates code faster, developers ship more, velocity goes up. The first part came true. The rest did not. The fastest code generators in the history of software development ran straight into the same bottleneck that has existed since the first pull request was opened: someone has to review this.

That someone, increasingly, is your most experienced engineer. And they are drowning.

The math does not work

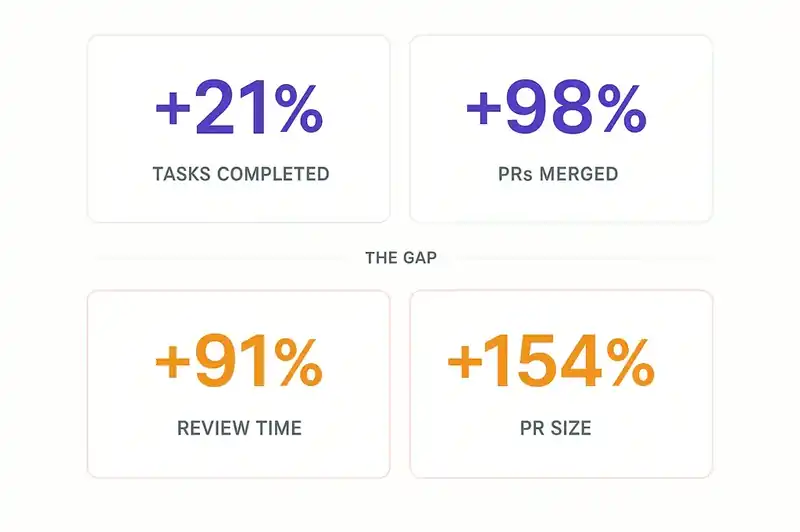

Faros AI published their AI Productivity Paradox report in 2025, based on telemetry data from over 10,000 developers across 1,255 teams. The headline numbers are stark.

Developers on teams with high AI adoption complete 21% more tasks and merge 98% more pull requests. That sounds great until you read the next line: PR review time increases 91%. Average PR size increases 154%.

Think about what that means in practice. Your team is generating nearly twice as many pull requests, each one about two and a half times the size it used to be. The volume of code requiring human review has not doubled. It has roughly quintupled (1.98 × 2.54 ≈ 5). The number of people qualified to review it has not changed at all.
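A quick back-of-the-envelope check of that multiplication may help. The sketch below assumes that total review volume scales as the number of pull requests times the average pull request size, which is a simplification (the Faros AI report does not state it this way), and the function name is illustrative only.

```python
# Relative increases reported by Faros AI, as quoted above.
PR_COUNT_INCREASE = 0.98  # 98% more pull requests merged
PR_SIZE_INCREASE = 1.54   # average pull request is 154% larger


def review_volume_multiplier(count_increase: float, size_increase: float) -> float:
    """Multiplier on code to review, ASSUMING volume = PR count x average PR size."""
    return (1 + count_increase) * (1 + size_increase)


multiplier = review_volume_multiplier(PR_COUNT_INCREASE, PR_SIZE_INCREASE)
print(f"Review volume multiplier: {multiplier:.2f}x")  # prints 5.03x
```

Under that assumption, the code arriving for review is about five times what it was, against an unchanged number of reviewers.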

This is not a productivity gain. It is a load transfer. Work that used to be distributed across the team -- writing, thinking, designing, reviewing -- has been compressed into one phase: review. And review is the one phase that AI cannot meaningfully automate, because it requires understanding of intent, context, and organizational knowledge.

The people who have that understanding are your senior engineers. They know why the billing module retries three times. They remember the incident that caused the 200ms timeout. They can look at a PR and say, "This will break the event pipeline on Tuesdays." And now they are spending their days reviewing AI-generated pull requests instead of doing the architectural work, mentoring, and system design that only they can do.

The DORA data confirms it

The 2025 DORA report found the same pattern from a different angle. Teams with high AI adoption are producing more code, but the review and integration phases are absorbing the gains.

Code generation has accelerated. The downstream processes that ensure quality, security, and coherence have not. The bottleneck has moved. It used to be writing. Now it is verifying.

This is not a tools problem. You cannot fix it by buying a better code review tool. The constraint is the cognitive capacity of the humans doing the review.

Experienced developers are getting slower, not faster

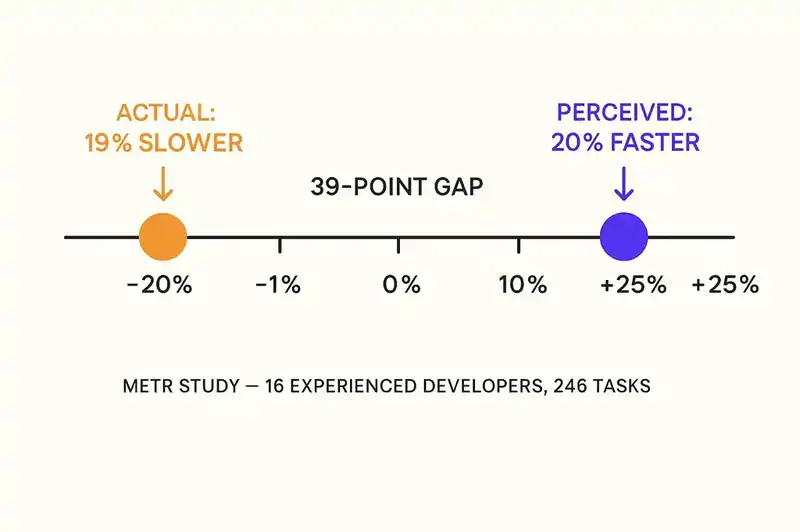

The most counterintuitive finding in recent AI productivity research comes from a METR study published in July 2025. Researchers ran a randomized controlled trial with 16 experienced open-source developers working on their own repositories -- codebases they had an average of five years of experience with.

When AI tools were available, developers took 19% longer to complete tasks. Not faster. Slower.

Developers accepted less than 44% of AI-generated code. The rest of their time went to reviewing, testing, modifying, and ultimately rejecting AI output. Generation was fast, but verification consumed the savings and then some.

The perception gap makes this worse. Before starting, developers predicted AI would make them 24% faster. Afterward, they estimated it had made them 20% faster. The actual measurement: 19% slower. The tools feel productive. The work takes longer.

A Tilburg University study analyzed developer activity in open source projects after GitHub Copilot was introduced. Experienced core developers reviewed 6.5% more code but showed a 19% drop in their own code productivity. Code written after AI adoption required more rework to meet repository standards, pointing to an increase in technical debt.

The researchers' conclusion: "Productivity gains of AI may mask the growing burden of maintenance on a shrinking pool of experts, together with increased technical debt for the projects."

A shrinking pool of experts. AI does not create more senior engineers. It creates more work for the ones you have.

The trust deficit makes everything harder

The 2025 Stack Overflow Developer Survey adds another dimension. 84% of respondents use or plan to use AI tools. But positive sentiment has dropped from over 70% in 2023 and 2024 to 60% in 2025.

More developers actively distrust the accuracy of AI tools (46%) than trust them (33%). Only 3% report "highly trusting" the output. Among experienced developers, the numbers are worse: the lowest "highly trust" rate (2.6%) and the highest "highly distrust" rate (20%).

The biggest frustration, cited by 66% of developers: dealing with "AI solutions that are almost right, but not quite." The second biggest, at 45%: "debugging AI-generated code is more time-consuming."

Almost right but not quite. That is exactly why AI-generated code is harder to review than human-written code. Human-written code that is wrong tends to be obviously wrong -- a logic error, a missing null check, a misunderstood API. AI-generated code that is wrong tends to be subtly wrong -- structurally correct, syntactically clean, passing the obvious tests, but containing assumptions that do not hold in your specific system.

Finding subtle bugs in clean-looking code is cognitively expensive. It is the hardest kind of review work. And we are asking our most experienced people to do more of it, on larger PRs, at higher volumes.

The opportunity cost is staggering

When a senior engineer spends their day reviewing AI-generated pull requests, they are not designing system architecture for the next quarter. They are not mentoring the mid-level engineer who is six months from being able to take over a service. They are not writing the spec that would make the next batch of AI-generated code actually correct. They are not doing the deep debugging that prevents the next production incident.

They are reading AI-generated code and trying to figure out if it is going to break something.

This is the organizational equivalent of using a neurosurgeon to take blood pressure readings. They can do it. It is not what you should be paying them for.

The Tilburg University study quantifies this. Core developers -- the experienced ones, with deep repository knowledge -- saw a 19% drop in their own code productivity after AI adoption. Not because they became worse engineers, but because they were spending their time reviewing and reworking code written by less experienced contributors using AI tools. AI increased the output of junior developers while decreasing the output of senior ones. That is not a productivity gain. That is a redistribution from high-value work to low-value work.

Why the current review model is breaking

Traditional code review was designed for a world where code was written by humans. That world had properties that made review tractable.

The author could explain their reasoning. "Why did you implement it this way?" was a question you could ask, and the answer made the review faster and more accurate.

The volume was bounded by human typing speed and thinking speed. No individual contributor could generate more code than a human could reasonably review.

The reviewer and the author shared a mental model of the system. Both had context about the architecture, the constraints, the recent incidents. Review was a conversation between two people who understood the system, not an interrogation of code written by an entity that understands nothing.

All of those properties are gone. AI cannot explain its reasoning. AI generates code at a volume that overwhelms human review capacity. AI does not share your team's mental model of the system.

Every AI-generated PR requires the reviewer to do more cognitive work per line of code, on more lines of code, with less help from the author.

The spec changes everything

There is a way to make this work. It does not involve hiring more reviewers, training AI to review AI, or accepting lower review standards. It involves changing what review means.

Without a spec, the reviewer has to answer an open-ended question: "What does this code do, and is that the right thing?" That requires reconstructing the author's intent, evaluating design decisions, checking implementation details, and assessing architectural fit. It is expensive because it is unbounded.

When code is generated against a specification, the question changes: "Does this code match the spec?" The reviewer is not reconstructing intent -- the intent is in the spec. The reviewer is not evaluating design decisions -- those are in the spec too. The reviewer is checking conformance: a closed, bounded, verifiable task.
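One way to picture a bounded conformance question is a spec written as a list of required behaviors that the code either satisfies or does not. The following is a minimal sketch, not a description of any particular spec-driven tool: `SpecCase`, `DISCOUNT_SPEC`, `apply_discount`, and `check_conformance` are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class SpecCase:
    """One required behavior taken from a (hypothetical) written spec."""
    description: str
    args: tuple
    expected: Any


# Hypothetical spec for a discount function; the cases are illustrative.
DISCOUNT_SPEC = [
    SpecCase("10% off 1000 cents is 900 cents", (1000, 10), 900),
    SpecCase("0% leaves the price unchanged", (1000, 0), 1000),
    SpecCase("100% brings the price to zero", (1000, 100), 0),
    SpecCase("result rounds down to whole cents", (999, 10), 899),
]


def apply_discount(price_cents: int, percent: int) -> int:
    """Stand-in for the generated code under review."""
    return price_cents * (100 - percent) // 100


def check_conformance(func: Callable[..., Any], spec: list[SpecCase]) -> list[str]:
    """Return one message per spec case the function fails; empty means it conforms."""
    failures = []
    for case in spec:
        actual = func(*case.args)
        if actual != case.expected:
            failures.append(
                f"{case.description}: expected {case.expected}, got {actual}"
            )
    return failures


failures = check_conformance(apply_discount, DISCOUNT_SPEC)
print("conforms" if not failures else "\n".join(failures))
```

The reviewer's question becomes whether the list of cases is the right list, and whether the failure list is empty. Both are closed questions, unlike "is this the right thing?"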

Think of it as the difference between grading an essay and grading a multiple-choice test. Both require expertise. The second demands far less cognitive effort per item.

Werner Vogels made this point at re:Invent 2025 when he demonstrated spec-driven development as the solution to verification debt. An analysis in Communications of the ACM describes the same approach: formal specifications that code can be verified against, transforming review from comprehension to conformance checking.

The Faros AI data shows why this matters at scale. When PR review time increases 91% and PR size increases 154%, you cannot solve the problem by asking reviewers to work harder. You solve it by giving them something to review against -- a spec that defines correct behavior, making the review question tractable.

The pipeline problem

There is a second-order effect here. If your senior engineers are spending their time reviewing AI-generated code, they are not mentoring junior and mid-level engineers. The pipeline that creates the next generation of senior engineers -- the people who will eventually share the review load -- is being starved of time with experienced practitioners.

The Tilburg University study found that productivity increases from AI adoption were primarily driven by less-experienced peripheral developers. The experienced core developers bore the cost. You are burning senior engineers' time and attention to make your junior engineers look more productive, while simultaneously reducing the mentorship that would make them genuinely more capable.

In two years, you will have the same number of senior engineers (or fewer, because some burned out and left) and a cohort of mid-level engineers who never got the mentorship they needed. The review bottleneck will be worse, because the pool of qualified reviewers will have shrunk.

What has to change

The answer is not to stop using AI coding tools. The productivity gains at the generation layer are real. The answer is to restructure the workflow so that review can handle the load.

That means three things.

Specifications before generation. Code generated against a spec can be reviewed against a spec. The review question changes from open-ended comprehension to bounded conformance -- cheaper, faster, more reliable.

Automated conformance checking. If you have a spec, you can automate significant portions of the review. Does the code implement the specified interfaces? Does it respect the constraints? Does it handle the edge cases? Tooling can answer these questions, freeing human reviewers for the judgment calls that require human context.

Protecting senior engineer time. If your best engineers are spending more than 30% of their time on code review, something is structurally wrong. That time needs to be defended as aggressively as any other scarce resource. A senior engineer too busy reviewing to mentor, design, or think costs the organization far more than a PR that waits an extra day.
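The second of those three changes, automated conformance checking, can start very small. The sketch below checks only one of the questions listed (does the code implement the specified interfaces?) using Python's standard `inspect` module. The spec format, `INTERFACE_SPEC`, `GeneratedBillingClient`, and `interface_violations` are assumptions made up for this example, not the API of any existing tool.

```python
import inspect

# Hypothetical spec: required method names mapped to their parameter names, in order.
INTERFACE_SPEC = {
    "charge": ["customer_id", "amount_cents"],
    "refund": ["charge_id"],
}


class GeneratedBillingClient:
    """Stand-in for generated code; 'refund' deviates from the spec on purpose."""

    def charge(self, customer_id, amount_cents):
        return {"customer_id": customer_id, "amount_cents": amount_cents}

    def refund(self, charge_id, reason):
        return {"charge_id": charge_id, "reason": reason}


def interface_violations(cls, spec):
    """List every method that is missing or whose parameters differ from the spec."""
    violations = []
    for name, expected_params in spec.items():
        method = getattr(cls, name, None)
        if not callable(method):
            violations.append(f"{name}: missing")
            continue
        # Looked up on the class, the method still lists 'self' first; drop it.
        params = list(inspect.signature(method).parameters)[1:]
        if params != expected_params:
            violations.append(f"{name}: expected {expected_params}, found {params}")
    return violations


violations = interface_violations(GeneratedBillingClient, INTERFACE_SPEC)
for violation in violations:
    print(violation)
```

A check like this catches the mechanical mismatch before a human opens the PR, so the reviewer's attention goes to the judgment calls that need system context.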

The AI productivity revolution is real. But its chokepoint is your best people. Either you restructure the workflow to protect them, or you watch them burn out, one pull request at a time.