Nobody wrote the bug. The code does exactly what the spec said to do. The spec just never said to handle what happened.

This is the most common failure pattern in software and the one with the weakest defenses. A service processes payments correctly, but nobody specified what happens when two payments for the same order arrive within the same millisecond. An agent reviews pull requests accurately, but nobody declared what it should do when two PRs modify the same identity declaration in opposite directions. The implementation is faithful. The specification had a hole in it.

Capers Jones's research on defect origins puts a number on this: roughly 20% of all software defects trace back to requirements and another 25% to design. That is 45% of bugs born before anyone opens an editor. Chris Newcombe and his colleagues at AWS described the same pattern in their 2015 paper "How Amazon Web Services Uses Formal Methods": "Some of the more subtle, dangerous bugs turn out to be errors in design; the code faithfully implements the intended design, but the design fails to correctly handle a particular 'rare' scenario."

You cannot catch these with better tests. Every test you write inherits the same blind spots as the spec it validates.

The testing blind spot

Think about how testing works. You have a spec. You write code that implements the spec. You write tests that check whether the code matches the spec. If the code passes the tests, everyone agrees the system works.

But what checked the spec?

Unit tests check functions. Integration tests check connections. E2E tests check flows. Chaos engineering checks resilience. Every one of these validates behavior against expectations somebody already has. They all share one assumption: that the expectations are correct and complete.

Nobody pentests the expectations.

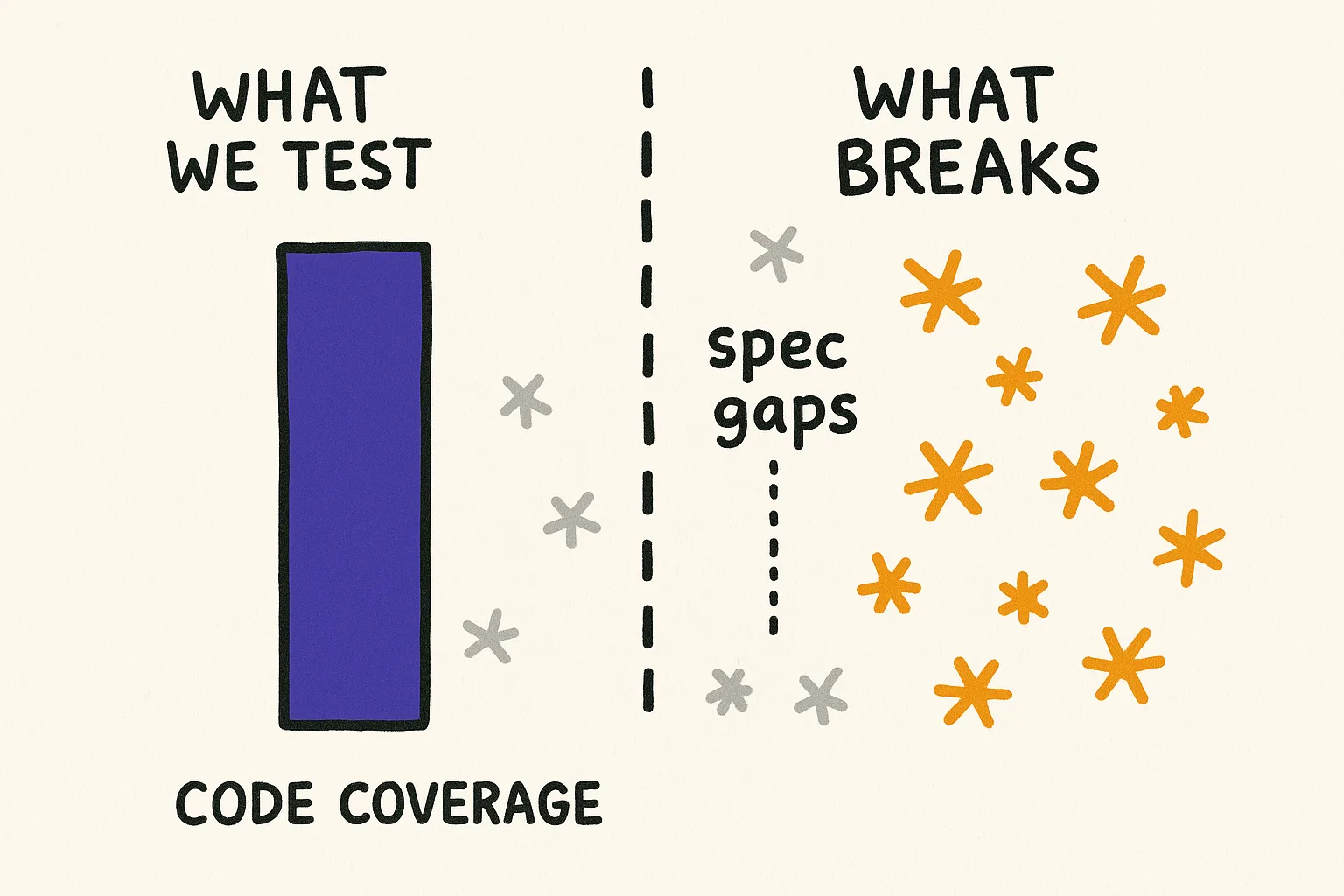

This is why you can have 90% code coverage and still get surprised in production. Coverage measures how much of your code is tested. It says nothing about how much of reality your spec accounts for. A system can be fully covered and fully blind at the same time, as long as the blind spot lives in the spec rather than the code.

The 2024 DORA report estimated that a 25% increase in AI adoption was associated with a 7.2% decrease in delivery stability. More code, generated faster, with the same spec underneath. The assembly line sped up but the blueprint stayed the same, blind spots included.

You already have a spec. Is it testable?

Most teams have something that functions as an identity declaration for their services, even if they do not call it that. A README. A CLAUDE.md. A design doc. An architecture decision record. Some combination of documents that says what this service is, what it does, what it does not do, and what guarantees it makes.

The question is whether any of that is specific enough to test against.

"We maintain high reliability" is not testable. An agent reading that declaration cannot measure its distance from "high reliability." It cannot check whether a change moves the system closer or further from the target. The statement exists, but it provides no signal.

"All API responses return within 200ms at P99, no request is silently dropped, every state transition is logged to the audit trail within 5 seconds" is testable. Each clause can be verified independently. An agent can check its own output against each one. A CI pipeline can enforce them. A quarterly review can ask whether they still hold.

The difference is not length or detail. It is shape. A testable declaration has shape: specific enough that you can measure your distance from it. A vague declaration is a direction without a destination. You can walk toward "high reliability" forever and never know if you arrived.
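One way to see the shape is to write the testable declaration down as data. This is a sketch only: the `Clause` type and the metric names (`p99_latency_ms`, `dropped_without_error`, `max_audit_lag_s`) are assumptions made up for this example, and only the thresholds come from the declaration quoted above.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Clause:
    name: str
    check: Callable[[dict], bool]  # takes observed metrics, returns pass/fail

# Hypothetical metric names; thresholds taken from the example declaration.
DECLARATION = [
    Clause("P99 latency within 200ms", lambda m: m["p99_latency_ms"] <= 200),
    Clause("no request silently dropped", lambda m: m["dropped_without_error"] == 0),
    Clause("transitions audited within 5s", lambda m: m["max_audit_lag_s"] <= 5),
]

def failed_clauses(metrics: dict) -> list[str]:
    """Return the names of the clauses that the observed metrics violate."""
    return [c.name for c in DECLARATION if not c.check(metrics)]

observed = {"p99_latency_ms": 180, "dropped_without_error": 0, "max_audit_lag_s": 9}
print(failed_clauses(observed))  # ['transitions audited within 5s']
```

Try writing the same thing for "we maintain high reliability." There is nothing to put in the lambda.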

This is the upstream move that identity pentesting makes. Instead of testing whether the code does what the spec says, you test whether the spec says enough. Whether it covers the scenarios that matter. Whether its claims are consistent with each other. Whether its boundaries are real or just aspirational.

Five questions to ask your spec

You do not need a framework or a new tool to start. You need five questions and an honest hour.

Pick a service. Pull up whatever documentation defines it. Then ask:

Is it complete? For every claim the spec makes, can you verify it in the implementation? If the spec says "maintains a complete audit trail," does the audit trail actually capture every state transition? Or just the ones the original author thought of? Gaps here are the most common source of bugs nobody wrote. The spec said X, the code does X, but X was not everything that needed to happen.
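A rough sketch of that completeness check, assuming you can list the state transitions your service can make and the ones that emit an audit event. Both sets below are hypothetical.

```python
# Hypothetical state machine for a payment service.
TRANSITIONS = {
    ("pending", "authorized"),
    ("authorized", "captured"),
    ("authorized", "voided"),
    ("captured", "refunded"),
}

# Transitions that emit an audit event in this imagined implementation.
AUDITED = {
    ("pending", "authorized"),
    ("authorized", "captured"),
    ("captured", "refunded"),
}

def unaudited_transitions(transitions: set, audited: set) -> list:
    """Transitions the system can make that leave no audit record."""
    return sorted(transitions - audited)

print(unaudited_transitions(TRANSITIONS, AUDITED))  # [('authorized', 'voided')]
```

The hard part is not the set difference. It is producing an honest list of transitions in the first place.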

Is it consistent? Read every declaration in your system side by side. Do they contradict each other? A payment service that guarantees P99 under 200ms cannot depend synchronously on a fraud detection service that guarantees P95 under 500ms. A synchronous call is never faster than its dependency, and the fraud service promises nothing below 500ms and nothing at all for 5% of requests. Both declarations are individually reasonable. Together they are a lie. If your specs are individually coherent but collectively contradictory, you have a conflict that will only surface under load in production.
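The payment and fraud example can be checked mechanically. This sketch assumes each service publishes a single latency guarantee; the `LatencySLO` type and the function name are invented for illustration. It tests a necessary condition only, so a pair that passes can still conflict once the caller's own processing time is added.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LatencySLO:
    service: str
    percentile: float  # 99.0 means "99% of requests"
    limit_ms: float

def sync_dependency_conflict(caller: LatencySLO, callee: LatencySLO) -> bool:
    """True if the callee's guarantee cannot support the caller's.

    A synchronous call cannot finish before its dependency does, so the
    callee must promise at least the caller's percentile at no more than
    the caller's limit. Timeouts, caching, and fallbacks are ignored here.
    """
    return callee.percentile < caller.percentile or callee.limit_ms > caller.limit_ms

payments = LatencySLO("payments", 99.0, 200)
fraud = LatencySLO("fraud-detection", 95.0, 500)
print(sync_dependency_conflict(payments, fraud))  # True
```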

Are the boundaries real? Specs love to say what a service does not do. "This service does not handle user authentication." Fine. Can it reach the auth database? Does it have any code paths that touch auth logic? A boundary that exists only in the documentation is not a boundary. It is a suggestion.
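One cheap probe for the code-path half of that question is to scan imports with Python's standard `ast` module. The forbidden module names and the sample source below are hypothetical. The scan only sees static imports, so it says nothing about whether the service can reach the auth database over the network.

```python
import ast

# Hypothetical declaration: modules this service claims it never touches.
FORBIDDEN_MODULES = {"auth", "auth_db"}

def boundary_violations(source: str, forbidden: set[str]) -> list[str]:
    """Return forbidden top-level modules imported anywhere in the source."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        found.update(name.split(".")[0] for name in names)
    return sorted(found & forbidden)

service_code = """
import json
from auth_db.sessions import lookup_session

def handle(request):
    return lookup_session(request["token"])
"""
print(boundary_violations(service_code, FORBIDDEN_MODULES))  # ['auth_db']
```

A check like this turns "does not handle user authentication" from a sentence into something a CI job can fail on.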

Could something fake pass the checks? If an external dependency implements the same interface as a trusted internal service, does anything distinguish them? Could a compromised package slot into your system because it matches the right API surface? Your spec defines what belongs inside the trust boundary. If the definition is purely structural (right interface, right contract shape), then anything that mimics the structure gets in.
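To make the structural problem concrete, here is a sketch with a hypothetical `FraudChecker` interface. The class names and the byte strings standing in for package files are invented. The interface check accepts both implementations, and only the second check, a pinned SHA-256 digest of the artifact, tells them apart.

```python
import hashlib
from typing import Protocol, runtime_checkable

@runtime_checkable
class FraudChecker(Protocol):
    def score(self, order_id: str) -> float: ...

class InternalFraudChecker:
    def score(self, order_id: str) -> float:
        return 0.1

class Impostor:
    # Same method name and signature, unknown origin.
    def score(self, order_id: str) -> float:
        return 0.0  # always reports "no fraud"

# Structural check: both pass, because only the shape is inspected.
print(isinstance(InternalFraudChecker(), FraudChecker))  # True
print(isinstance(Impostor(), FraudChecker))              # True

def artifact_trusted(artifact: bytes, pinned_sha256: str) -> bool:
    """Provenance check: compare the artifact's digest to a pinned value."""
    return hashlib.sha256(artifact).hexdigest() == pinned_sha256

trusted_bytes = b"internal-fraud-checker v1"  # stand-in for a real package file
PINNED = hashlib.sha256(trusted_bytes).hexdigest()
print(artifact_trusted(trusted_bytes, PINNED))        # True
print(artifact_trusted(b"impostor package", PINNED))  # False
```

Digest pinning is one option among several. The point is that the trust definition has to include something the impostor cannot copy by matching the interface.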

Is it still current? A spec written eighteen months ago describes a system that existed eighteen months ago. Requirements change. Business rules shift. Compliance obligations get updated. If nobody reviews the spec, your team is building toward a target that no longer reflects what the system needs to be. Every change that passed review against the old spec is a change that was validated against outdated identity.
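Currency is the easiest of the five to automate, if you record when each spec was last reviewed. The sketch below assumes a 90-day window to match a quarterly review; the service names and dates are made up.

```python
from datetime import date, timedelta

MAX_AGE = timedelta(days=90)  # assumed review cadence; pick your own

def stale_specs(last_reviewed: dict[str, date], today: date) -> list[str]:
    """Return the specs whose last review is older than MAX_AGE."""
    return sorted(name for name, d in last_reviewed.items() if today - d > MAX_AGE)

reviews = {"payments": date(2024, 1, 10), "fraud-detection": date(2024, 11, 2)}
print(stale_specs(reviews, today=date(2024, 12, 1)))  # ['payments']
```

A recent review date does not prove the spec is right. An old one does tell you nobody has looked.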

Where this matters most with agents

This gets worse with agents.

A human developer working on a payment service has ambient context. They heard in standup that the compliance team just updated the data residency requirements. They remember from last sprint's retro that the audit trail has gaps. They learned the hard way that the fraud detection service is slow. None of this is in the spec. All of it shapes their decisions.

An agent has none of that. An agent has the declaration and whatever context you give it. If the declaration is incomplete, the agent will produce code that is perfectly consistent with the declaration and perfectly wrong for the real requirements. It will not fill in the gaps from experience because it has no experience to draw from. It will not ask clarifying questions unless the declaration is specific enough to make the gaps visible.

This is why the DORA stability finding matters for agentic teams. The problem is not that AI-assisted coding necessarily produces worse code. It produces code faster, against the same incomplete spec. The acceleration makes the spec gaps more expensive, not less, because you hit them at higher velocity.

Identity pentesting is the practice of finding those gaps before agents do. Testing the spec, not the code. Probing the declaration for blind spots, contradictions, and boundaries that are not enforced. It is upstream work that prevents downstream failures no amount of behavioral testing will catch.

The biological version of this is wild

The immune system runs a version of identity pentesting that eliminates 95-98% of its own T-cells before they ever enter the bloodstream. Not because they are defective. Because they failed an identity check. The organ responsible, the thymus, displays samples of proteins from tissues throughout the body just to test whether each T-cell knows the difference between self and threat.

When the identity checks fail, the results include autoimmune disease (friendly fire), cancer evasion (threats going undetected), and molecular mimicry (pathogens that forge credentials to bypass the checks). Every failure mode has a direct software parallel.

If you want the full biology, with the research papers, the 32-fold risk multiplier, and an organism that figured out credential spoofing before humans invented passwords, read "The Organ That Kills 97% of Its Own Output."

Monday morning

Pull up the spec for one service. The one that breaks most often, or the one your agents touch most. Spend an hour running it through the five questions above.

You will almost certainly find at least one gap where the spec does not cover a scenario that matters, one contradiction between declarations that share a boundary, or one boundary that exists in documentation but not in infrastructure. Each of those is a bug nobody wrote, waiting to be discovered in production.

Finding it upstream costs you an hour. Finding it downstream costs you an incident.

The spec is the first thing that needs to be testable. We are building tooling that makes identity declarations verifiable, not just aspirational.